My Take: The mainstream framing of the May 1 Pentagon contracts is that Anthropic lost the biggest single AI procurement of 2026. I think the opposite is true. Anthropic walked away with the one thing money cannot buy in this category: a public stake in not being the AI company you can use for anything. That position will outearn the lost contract revenue by a lot, and the Mythos paradox already proves the government cannot fully replace them anyway.

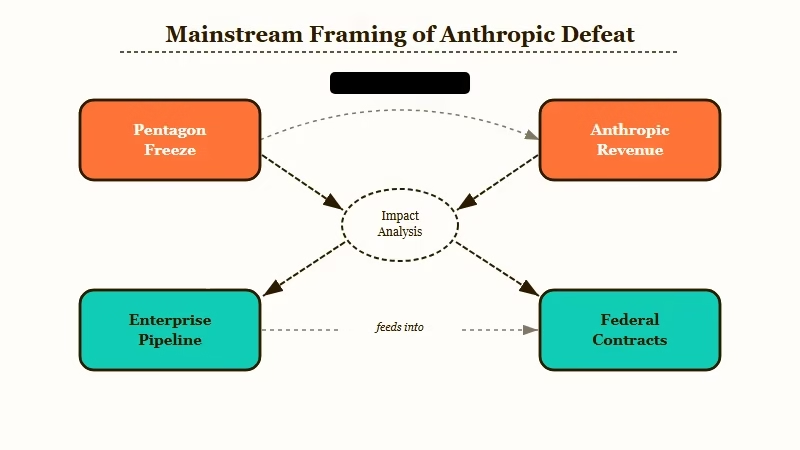

When the Pentagon announced its May 1 AI procurement deals with seven companies and Anthropic was the one frontier lab missing, the mainstream coverage treated it as a defeat.

CNN ran “Pentagon strikes deals with 7 Big Tech companies after shunning Anthropic.” Defense News went with “Pentagon freezes out Anthropic.” Bloomberg analysts pointed to lost revenue and a damaged enterprise pipeline.

I do not think any of that gets the strategic picture right. The way I see it, Anthropic just secured a position OpenAI and Google now cannot copy without contradicting their own contracts, and the value of that position is going to compound for years. This is the contrarian take.

The Mainstream View (And Why It Falls Short)

The mainstream view treats the Pentagon contracts as a clear loss for Anthropic, citing missed revenue, damaged enterprise positioning, and a chilling effect on adjacent contractors. The framing falls short because it treats federal contracts as the ceiling rather than one of several enterprise revenue streams.

The clearest version of the loss case came from Bloomberg’s coverage, which pointed to the gap between Anthropic’s reported $900 billion valuation and its now-blocked access to the federal AI budget as evidence of trouble ahead.

CNBC ran a similar framing focused on the supply chain risk designation as a market signal. CNN positioned Anthropic as “shunned.” Defense News went further with “freezes out.”

The argument those takes share is that federal contracts are an irreplaceable revenue tier, and that being cut out of them caps a company’s enterprise ceiling. That argument is wrong on two specific counts.

First, federal contracts are a meaningful but not dominant slice of frontier AI enterprise revenue. Most of the actual money in this category comes from Fortune 500 contracts, regulated-industry buyers (finance, healthcare, legal), and the developer API tier. Federal procurement is the highest-prestige slice, not the highest-revenue slice. Treating it as a ceiling is a category error.

Second, and more important, the loss-frame ignores what Anthropic just earned in exchange. A specific public refusal of “all lawful” use is not a procurement footnote. It is the most valuable marketing campaign in AI safety history, and the only price was the contract itself.

What’s Really Happening

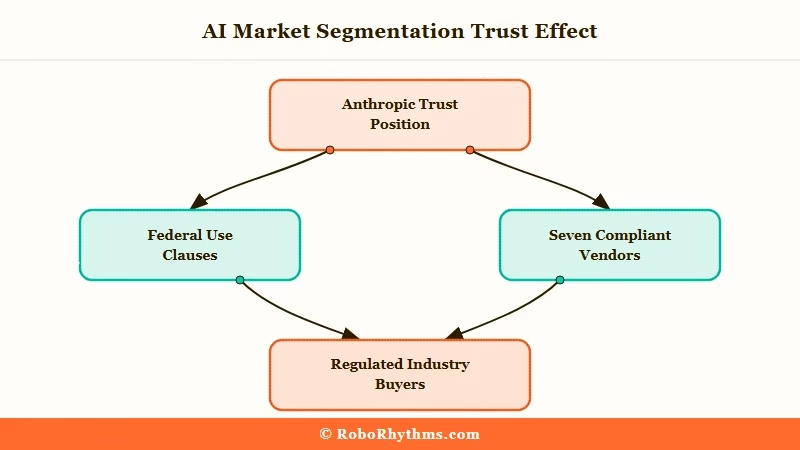

What is really happening is a public market segmentation: Anthropic took the position of the AI company you can trust not to be turned into an unaccountable surveillance tool, and the seven winners took the position of vendors that will accept whatever federal use clauses are required to win the contract.

Read the contracts the way an enterprise general counsel reads them. The Pentagon’s standard “all lawful” clause is the one Anthropic refused. The seven winners signed it. None of them have publicly clarified whether their stated AI safety guidelines have been quietly relaxed to fit, or whether they will manage the conflict case-by-case when a Pentagon program asks for something their public policy says they would refuse.

That ambiguity is now Anthropic’s competitive moat. Every regulated-industry buyer (banks worried about consumer-data surveillance, healthcare buyers worried about patient-data access, EU buyers worried about GDPR conflicts, journalism buyers worried about source protection) is going to read the May 1 contracts and ask which AI vendor said no.

There is exactly one answer to that question. From what I have seen of how enterprise procurement really decides between AI vendors, that answer is going to compound. The companies that signed will spend the next year trying to explain that signing did not mean what people think it means. The company that refused will not have to explain anything.

The Mythos Paradox Proves the Leverage

The Defense Department reportedly continues to use Anthropic’s Claude Mythos Preview model for cybersecurity work despite the formal procurement ban. If that reporting holds, the federal government cannot fully replace Anthropic’s most advanced capabilities even with seven other vendors on retainer.

This is the part of the story that quietly demolishes the “loss” framing. The Pentagon did not just sign with seven competitors. It signed with seven competitors and kept using Anthropic’s hardest-to-replace model anyway. According to CNBC’s reporting on May 1, the Pentagon tech chief explicitly said Mythos was a “separate issue” from the procurement blacklist.

A separate issue, in procurement language, is what you call something the official policy cannot survive without. The seven winners are the official answer. Anthropic is the unofficial backstop the government keeps because nothing else can match the capability. That is not a loss position. That is a position of structural leverage.

The corollary is that any future negotiation between Anthropic and the federal government starts from a place where both sides know the government still depends on Mythos. The May 1 contracts do not strengthen the government’s hand in that negotiation. They weaken it, by exposing how thin the bench really is once Anthropic is excluded. Anthropic’s lawsuit filed in March is now sitting on top of that leverage.

The Part Nobody Wants to Admit

The uncomfortable truth is that the seven winners just demonstrated their AI safety policies are negotiable, and the long-term enterprise consequences of that demonstration will outweigh the short-term Pentagon revenue gain.

The companies that signed the May 1 contracts include several that have spent the past three years marketing themselves as AI safety leaders: OpenAI with its Preparedness Framework, Google DeepMind with its Frontier Safety Framework, Microsoft with its Responsible AI Standard. Each of those documents includes specific commitments about non-deployment in high-risk contexts.

The seven winners signed contracts that explicitly permit Pentagon use of their models for “all lawful” purposes, including, by Anthropic’s reading, domestic mass surveillance and fully autonomous weapons. Either their published safety commitments are legally binding (in which case the contracts conflict with them) or they are not (in which case the public framing was always marketing).

I would argue this is the part of the story that lands hardest in the regulated-industry buyer’s room. A bank deciding between Claude and a competitor for an internal data assistant has a new data point: the competitor signed off on use cases their own published policy said they would refuse. Anthropic is now the only vendor that, on the public record, declined to do that.

This is a moat that compounds. Every additional federal contract the seven winners sign tightens the contradiction. Anthropic, having paid the cost of refusing once, accrues the credibility benefit on every subsequent procurement story. The asymmetric return on a single principled refusal is exactly what makes this a contrarian opportunity.

Hot Take

The May 1 Pentagon contracts were the most valuable thing that has happened to Anthropic’s enterprise positioning in 2026, and Bloomberg, CNN, and Defense News all called it a defeat because they were measuring the wrong thing.

Federal procurement revenue is a meaningful but not dominant slice of frontier AI enterprise spend. The “AI vendor that said no” position is a permanent product. Anthropic just got handed the second one in exchange for missing out on the first. That is not a loss. That is the trade of the year.

The next twelve months will tell the story. Watch enterprise procurement decisions in regulated industries (finance, healthcare, journalism, legal) for accelerated Anthropic adoption framed as a trust signal. Watch the seven winners try to clarify their AI safety policies in ways that do not conflict with the contracts they just signed.

Watch the federal government, still dependent on Mythos, eventually negotiate Anthropic back in on terms closer to Anthropic’s original position than the Pentagon’s.

The mainstream story will be that Anthropic eventually “won back” the contract. The real story is that they never lost the part that mattered.

Read alongside the Pentagon contracts news piece for the underlying facts, the March lawsuit context, and the $900B valuation backdrop that frames the trade.