What Happened: The Pentagon awarded classified-network AI contracts to seven companies on May 1, 2026, and Anthropic was the one frontier lab left off the list. The exclusion stems from a supply chain risk designation Defense Secretary Pete Hegseth formalized in March. Claude users and Anthropic-built workflows now sit outside the largest single AI procurement of the year.

The Pentagon AI contracts announced on May 1, 2026, went to OpenAI, Google, Microsoft, Amazon Web Services, Nvidia, SpaceX, and a startup called Reflection AI. Anthropic, the maker of Claude and the company sitting on a reported $900 billion valuation, was the only frontier lab cut out.

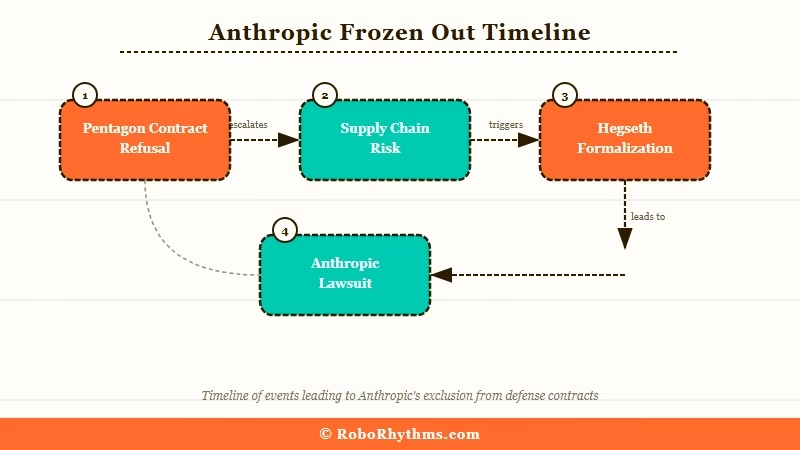

The exclusion is not a snub. It is a formal designation. The Trump administration tagged Anthropic a supply chain risk in February, Defense Secretary Pete Hegseth made it official in March, and the May 1 announcement is the operational consequence: classified Defense Department systems will run on AI from seven vendors, none of them Anthropic.

For anyone building on Claude, this matters more than a headline about a missed contract. The way I see it, this story is really three stories stacked on top of each other, and only one of them is about money. The other two are about whether refusing certain government use cases is now a strategic liability, and what happens to “Responsible AI” pledges when a real contract is on the table.

Here is what landed, why the contractual fight got Anthropic frozen out, and what the practical fallout looks like for developers, enterprise buyers, and anyone watching the federal AI vendor stack take shape.

What Actually Happened

The Pentagon awarded classified-network AI contracts to seven vendors on May 1, 2026, and Anthropic was excluded under a formal supply chain risk designation.

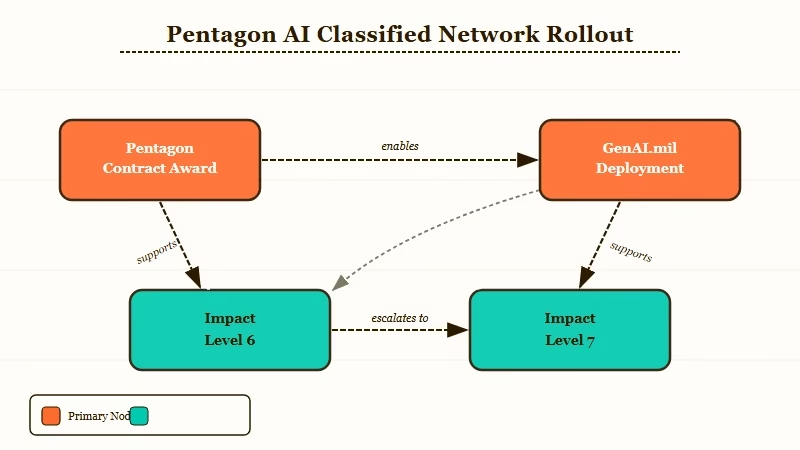

The seven companies named in the May 1 SiliconANGLE report are Amazon Web Services, Google, Microsoft, Nvidia, OpenAI, SpaceX, and Reflection AI. The deals cover deployment inside Defense Department systems classified at Impact Level 6 and Impact Level 7, which are the high-security environments designed to store and process classified information.

Products will be delivered through GenAI.mil, the Pentagon’s internal AI portal that has reportedly grown to more than 1.3 million Defense Department users since launch.

The reach of GenAI.mil is the part most coverage has glossed over. Personnel on that platform have already built hundreds of thousands of AI agents, which means the Pentagon is not buying a chatbot. It is buying the model layer underneath an internal agentic stack that is already in production.

Reflection AI, the only newcomer on the list, raised $2 billion last year and is run by former Google DeepMind researchers; the company plans to release a model trained on tens of trillions of tokens. SpaceX qualifies as an AI vendor here because of its 2026 merger with xAI Holdings, which gave Elon Musk’s space company control of the Grok family of models.

From my reading of the source coverage, what I found most telling is that none of the seven winners issued a public statement defending the contractual language Anthropic refused. Silence in this kind of procurement is not nothing. It is consent.

Why Anthropic Got Frozen Out

Anthropic was excluded because it refused to permit Pentagon use of Claude for “all lawful” purposes, on grounds that the language could enable domestic mass surveillance or fully autonomous weapons.

The fight was not abstract. The Pentagon’s standard contractual term required vendors to allow their models for any use that does not violate the law.

Anthropic argued that “all lawful” is a category broad enough to cover surveillance of US citizens and the deployment of Claude inside lethal autonomous systems, both of which conflict with the company’s stated policy. The Defense Secretary disagreed, escalated, and the supply chain risk designation followed.

That designation has teeth. It does not just block the Pentagon from buying Claude directly. It also limits the access that defense contractors have to Anthropic’s models, which is how a single procurement label radiates outward into the entire industrial base. Any contractor working on a defense program now has to weigh whether Claude in their stack creates compliance friction, even on non-classified work.

Anthropic filed a lawsuit in March asking a federal judge to overturn the designation. The case is still active.

In April, Trump’s chief of staff Susie Wiles met CEO Dario Amodei at the White House, and the President told CNBC afterwards that a deal “is possible.” From what I have seen of past administration positioning, “possible” is doing a lot of work in that sentence. The May 1 contracts went out anyway.

Anthropic’s internal compliance posture was already under industry pressure earlier this spring, and the March lawsuit over the designation made clear how seriously the company is treating the legal fight. The deeper irony is that the Defense Department is reportedly still using one specific Anthropic model.

The Mythos Paradox

The Defense Department reportedly continues to use Anthropic’s Claude Mythos Preview model for cybersecurity work despite the formal ban on Anthropic procurement.

This is the part of the story that does not square. Claude Mythos Preview is the unreleased internal model Anthropic has held back from public access because, by the company’s own assessment, it is unusually good at finding zero-day cybersecurity vulnerabilities. The DoD apparently still has that capability through some workaround channel, even while declining to award Anthropic any official seat at the May 1 table.

In practice, that means the federal government has decided two contradictory things at once. It has decided Anthropic is a supply chain risk too dangerous to procure from, and it has also decided that Anthropic’s most advanced model is too useful to give up.

The official explanation, per Pentagon tech chief commentary reported on CNBC, is that Mythos is a “separate issue” from the procurement blacklist. From what I have seen of this kind of carve-out, separate issues tend to harden into formal exceptions over time.

The reason this matters for ordinary readers is that it tells you what the actual leverage is. Anthropic is not being punished for being weak. It is being punished for refusing the contractual language other vendors accepted, while the government simultaneously relies on the company’s hardest-to-replace capability. That is a position of strength, dressed up as a setback.

What This Means for You

Individual Claude users and developers will not see immediate API changes, but enterprise procurement, government-adjacent work, and “Claude-native” agent stacks now carry quiet new compliance weight.

For most readers building on Claude, nothing breaks today. The API still works and pricing did not change. If you are using Claude inside a personal project or a non-regulated product, the May 1 contracts barely register.

The friction shows up further upstream. Anthropic is still shipping models on its normal cadence, with Opus 4.7 from April the most recent release, so this is a procurement story rather than a product story.

Here is how I would think about it, ranked by how soon you should care:

| Use case | Impact today | Why it matters |
|---|---|---|
| Personal projects, indie apps | None | API access is unchanged |
| SMB enterprise tooling | Low | Most procurement teams will not flag the designation |
| Regulated industries (finance, healthcare) | Medium | Some compliance teams default to federal vendor lists |
| Defense-adjacent contractors | High | Designation flows through the supply chain |
| Federal agency workflows | Total | Anthropic procurement is barred outright |

The middle tier is the one to watch. Big enterprises tend to copy federal vendor lists into their own approved-tool registries, which means a “supply chain risk” stamp can echo into private-sector procurement six to twelve months out, even though the designation has zero legal weight outside DoD contexts.

That is the chilling effect, and it is the part of this story that most coverage skipped.

Practical translation: if your stack is “Claude-native” and you sell into regulated enterprises, this is the quarter to check what your procurement-facing customers think about the federal designation.

From my experience with similar situations, the conversation goes a lot better when you have already mapped a fallback model than when you are scrambling after a customer raises it.

Why This Is a Bigger Deal Than It Sounds

The May 1 contracts effectively split the AI industry into two tiers based on willingness to accept federal use clauses, and that split will outlive any individual administration.

The most under-discussed angle here, I would argue, is that the seven winners agreed to terms Anthropic refused. None of them has publicly clarified whether its stated AI safety policies have been quietly relaxed to fit the Pentagon’s “all lawful” language, or whether it accepted the language and will manage the conflict case by case.

That is the kind of contractual fine print that gets revisited only when something goes wrong, which means the answer will probably arrive in the form of a leaked memo two years from now.

The financial backdrop makes the calculus tighter. Anthropic’s valuation surge past OpenAI was driven in part by enterprise revenue projections, and federal contracts are a meaningful slice of that addressable market.

Walking away from them on principle is defensible if the principle holds. It gets harder to defend if the next funding round expects defense revenue and the door is bolted shut.

Other governments will have to make the same call. Five Eyes partners, NATO allies, and EU procurement officers all watch what the US does.

If the supply chain risk designation propagates internationally, Anthropic’s enterprise revenue ceiling drops noticeably. If it stays a US-only dispute, the company has time to litigate or negotiate its way back in.

From what I have seen of past US tech sanctions cycles, allies tend to wait for the courts before they pick a side.

What Comes Next

Three things come next: the active lawsuit, four propagation signals to watch, and a small but non-zero practical move for anyone with Claude in their stack.

The lawsuit Anthropic filed in March is still on the docket. A White House deal is officially “possible,” officially ongoing, officially undefined.

The May 1 contracts run regardless of how the White House talks play out, which means the operational outcome is already locked in for at least the next budget cycle.

In the near term, here are the four signals I would watch:

- Whether any of the seven winners disclose changes to their AI safety policies.

- Whether other federal agencies copy the Defense Department designation.

- Whether private sector enterprises mirror it inside their own approved-vendor lists.

- Whether the Five Eyes alliance harmonises with the US position or diverges from it.

Those four signals will tell you whether this story is a one-off procurement fight or the opening move in a permanent reshaping of the federal AI vendor stack.

For most readers, the practical move is small but not zero. If your work touches federally regulated industries or government-adjacent customers, this is a good week to sketch out what a fallback from Claude to OpenAI or Google would really look like inside your stack.

Not because you need to switch. Because the conversation might come up, and “I have not thought about it” is a worse answer than “here is the migration plan, and here is why I do not need it.”

Before: A single-vendor Claude integration with no documented alternative model.

After: A vendor-flexible integration with Claude as the primary model and OpenAI or Gemini as a tested fallback for regulated client requests.
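
For readers who want to see the "After" shape in code, here is a minimal sketch of what I mean, not a production design. Everything in it is hypothetical: `Provider`, `route`, the `blocked` set, and the two stub functions are names I made up for illustration, and the stubs stand in for whatever vendor SDK calls your stack really uses. The idea is only that the primary model is tried first, and a client-level policy or a runtime failure moves the request to the next provider in the list.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class Provider:
    """Hypothetical wrapper: a vendor name plus a prompt -> text callable."""
    name: str
    complete: Callable[[str], str]


class AllProvidersFailed(RuntimeError):
    """Raised when no permitted provider returns a completion."""


def route(
    prompt: str,
    providers: Sequence[Provider],
    blocked: frozenset[str] = frozenset(),
) -> tuple[str, str]:
    """Try providers in order, skipping any the client has blocked.

    Returns (provider_name, completion_text).
    """
    errors: list[str] = []
    for provider in providers:
        if provider.name in blocked:
            continue  # client policy forbids this vendor
        try:
            return provider.name, provider.complete(prompt)
        except Exception as exc:  # sketch only; catch narrower errors in real code
            errors.append(f"{provider.name}: {exc}")
    raise AllProvidersFailed("; ".join(errors) or "no permitted providers")


# Stubs standing in for real vendor SDK calls (assumption: yours will differ).
def fake_claude(prompt: str) -> str:
    return f"[claude] {prompt}"


def fake_fallback(prompt: str) -> str:
    return f"[fallback] {prompt}"


# Order encodes preference: primary first, tested fallback second.
PROVIDERS = [
    Provider("claude", fake_claude),
    Provider("fallback", fake_fallback),
]

if __name__ == "__main__":
    # Default path: the primary model answers.
    print(route("Summarize this memo", PROVIDERS))
    # A regulated client has excluded the primary vendor.
    print(route("Summarize this memo", PROVIDERS, blocked=frozenset({"claude"})))
```

The design choice worth noting is that the per-client `blocked` set lives outside the provider list, so a single customer's procurement rule does not change the default for everyone else. The "tested" part of "tested fallback" is on you: a routing layer like this only helps if the fallback's output has already been checked against your real prompts.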

The May 1 contracts did not break Claude. They just made the question of “what if you had to switch” a real question instead of a hypothetical.