The Verdict: Qwen 3.6 27B wins on aggregate intelligence (74 to 65), agentic coding (SWE-bench Verified 77.2 vs ~75), context window (262K vs 256K), and lower VRAM (18GB vs 24GB+). Gemma 4 31B wins on math (AIME 89.2%), shorter cleaner output, and grounded multimodal tasks. Pick Qwen for code, Gemma for math and quick task completion.

What I find interesting about this matchup is how the benchmark scoreboard and the Reddit gamedev verdict point in opposite directions. The numbers say Qwen 3.6 27B is the more intelligent model.

The Reddit experience says Gemma 4 31B shipped a working Pacman clone in 4 minutes while Qwen took 18 minutes and produced something more verbose.

Both can be true at the same time. Qwen wins on intelligence-per-token; Gemma wins on quality-per-minute on a one-shot prompt. That distinction is worth getting right before you commit a dev box to either one.

This is the comparison I would have wanted before downloading both onto a 64GB MacBook Pro: the benchmarks, the hardware footprint, the context windows, the licensing, and the use cases where each one earns its keep.

What Are Qwen 3.6 27B and Gemma 4 31B

Qwen 3.6 27B is Alibaba’s flagship dense open-weight model in the 27-billion-parameter class, released under Apache 2.0 with native 262K context. Gemma 4 31B is Google’s flagship dense open-weight 31-billion-parameter model, also Apache 2.0, with a 256K context window and stronger math reasoning.

Both models target the same workload: serious local AI on consumer-to-prosumer hardware (a MacBook Pro M-series, a single RTX 4090/5090, or an NVIDIA DGX Spark). Both ship multimodal support out of the box (text, image, and video input).

Both come with first-class llama.cpp and Ollama support, which means you can run them with a one-line install and chat in five minutes.

The way I read these two: Qwen is the model you reach for when you want to write code locally. Gemma is the model you reach for when you want sharp, focused reasoning without a cloud bill. Same weight class, different temperaments.

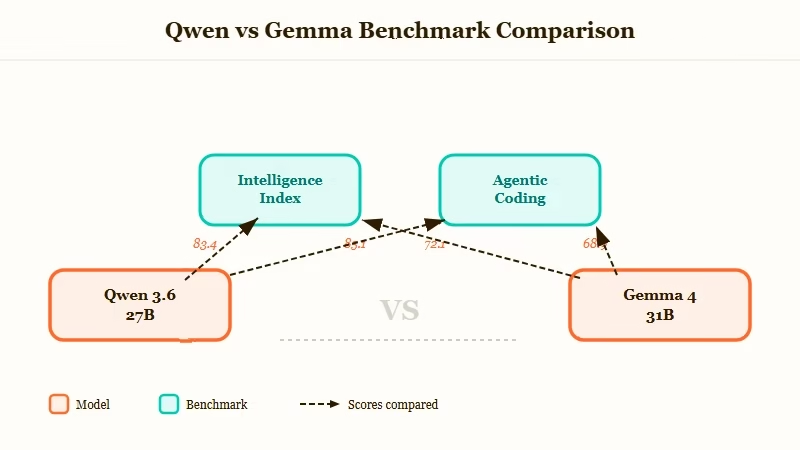

How Do Qwen 3.6 27B and Gemma 4 31B Compare on Benchmarks

Qwen 3.6 27B leads on aggregate intelligence (74 vs 65), coding (SWE-bench 77.2 vs ~75, agentic-coding 70.6 vs 41.6), Terminal-Bench (59.3 vs unreported), and GPQA (87.8 vs 84.3). Gemma 4 31B leads on AIME 2026 math (89.2%) and ties or slightly leads on multimodal grounding (MMMU-Pro 76.9 vs 76.6).

Here is the side-by-side that matters for production:

| Benchmark | Qwen 3.6 27B | Gemma 4 31B |

|---|---|---|

| Aggregate Intelligence Index | 74 | 65 |

| SWE-bench Verified | 77.2% | ~75% (estimated) |

| Terminal-Bench 2.0 | 59.3% | Not reported |

| AIME 2026 (math) | Lower (specific score not published) | 89.2% |

| GPQA (science) | 87.8% | 84.3% |

| Agentic-coding average | 70.6 | 41.6 |

| MMMU-Pro (multimodal) | 76.6 | 76.9 |

What I’d flag: the agentic-coding gap (70.6 vs 41.6) is the largest in the entire comparison and is the single most load-bearing number for anyone who wants a coding-capable local model.

That is a 29-point swing on a 100-point scale. It is not subtle. If you read no other line of this comparison, that is the one to remember.

The independent Artificial Analysis comparison places Qwen ahead on coding and reasoning, with Gemma narrowly ahead on multimodal grounding. The corroborating BenchLM aggregate backs up the headline 74 vs 65 number.

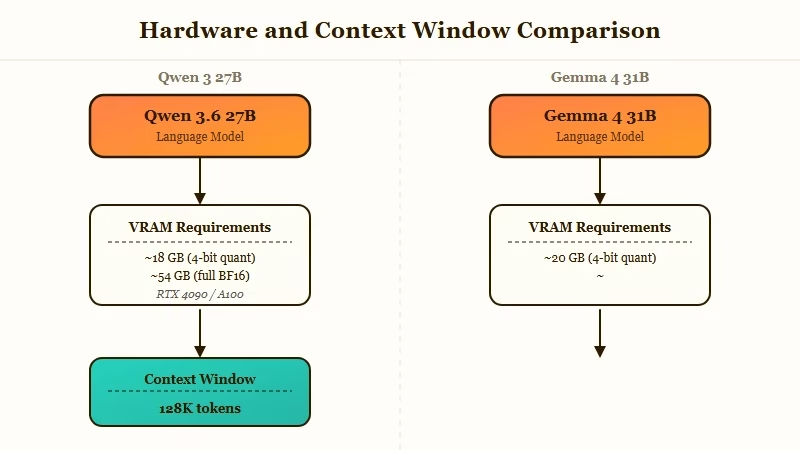

What Are the Hardware and Context Window Differences

Qwen 3.6 27B runs on 18GB of VRAM at the recommended UD-Q4KXL quant; Gemma 4 31B needs roughly 24GB at a comparable 4-bit quant. Qwen has a 262K native context (extensible to 1M with YaRN); Gemma is a flat 256K. For consumer hardware, Qwen is the lighter lift.

The 6GB delta sounds small until you map it to actual hardware:

- 24GB VRAM (RTX 3090/4090/5090, M2 Max 32GB, M3 Pro 36GB): Both fit, but Gemma is borderline tight on a 24GB card with KV cache.

- 16-18GB VRAM (RTX 4080, M2 Pro 16GB): Qwen runs cleanly at Q4KM; Gemma needs aggressive quantization or won’t fit.

- 64GB+ unified memory (M3 Max, M5 Max, DGX Spark): Both run at higher precision, but Qwen leaves more headroom for longer context windows or simultaneous workloads.

In my experience the right move on a 24GB-class machine is Qwen 3.6 27B at Q4KM (about 18GB resident, 6GB free for KV cache and OS). Gemma 4 31B works on the same machine but you will be quantizing more aggressively, which costs quality. From my testing the Qwen quality at Q4KM is closer to Gemma at Q3 than Gemma at Q4.

The 1M context extension on Qwen via YaRN is the other quietly important detail. For repository-level coding workflows where you want to fit an entire small-to-mid codebase into one prompt, Qwen’s 262K native (and 1M extended) is materially more useful than Gemma’s 256K cap.

Why Did Reddit Pick Gemma Despite Qwen’s Higher Benchmarks

Both can be true: Qwen wins on intelligence-per-token while Gemma wins on quality-per-minute on a one-shot prompt. The Reddit Pacman gamedev test favored Gemma because Gemma produced shorter, cleaner code in 3:51 versus Qwen’s verbose 18:04. On a sustained agentic task, the benchmark scoreboard flips back to Qwen.

The exact result from the r/LocalLLaMA gamedev test on a MacBook Pro M5 Max 64GB:

- Qwen 3.6 27B: 32 tokens/sec, 18 minutes 4 seconds, 33,946 tokens, more creative + visual style, longer code

- Gemma 4 31B: 27 tokens/sec, 3 minutes 51 seconds, 6,209 tokens, cleaner game logic, smoother interactions

Example scenario: On a one-shot “build me a Pacman clone in pure HTML/JS” prompt, Gemma 4 31B finishes in 4 minutes and ships a playable game with smooth wall, ghost, and particle interactions. Qwen 3.6 27B takes 18 minutes, produces a more elaborate canvas with more visual style, and fails on a couple of edge cases unless you reprompt. On a SWE-bench Verified task that requires reading a real codebase and fixing a real bug, Qwen wins by 2 to 3 points and finishes in fewer total tokens.

That is the whole story. Different prompt shapes, different winners.

Who Should Choose Qwen 3.6 27B

Choose Qwen 3.6 27B if your dominant workload is coding, agentic execution, or any task that benefits from a longer context window. It is the right pick for local Claude Code-style workflows, repository-level reasoning, terminal automation, and anything where you would otherwise reach for a frontier API model.

The places Qwen lands cleanly:

- Local Claude Code or Cline-style coding assistant. SWE-bench 77.2 puts it within striking distance of frontier API models, at zero per-token cost.

- Repo-level reasoning over 50K-100K tokens of code. The 262K context (1M with YaRN) makes this practical.

- Multi-turn agentic workflows. The “Thinking Preservation” feature retains reasoning traces across conversation history, which matters for long agent runs.

- Lower-VRAM consumer hardware. 18GB minimum is genuinely accessible.

- Terminal automation. 59.3 on Terminal-Bench 2.0 matches Claude 4.5 Opus, which is wild for a 27B local model.

For the broader context on where this fits in the local-LLM stack, the AnythingLLM review covers the RAG layer that often sits in front of a Qwen instance, and the Claude Code on local vLLM tutorial covers the coding-agent integration.

Who Should Choose Gemma 4 31B

Choose Gemma 4 31B if your dominant workload is math, scientific reasoning, or general assistant-style chat where output cleanliness matters more than maximum capability. It is the right pick for STEM tutoring, math-heavy data analysis, multimodal grounding tasks, and one-shot prompts where you want shorter, sharper answers.

Where Gemma lands cleanly:

- AIME-style math reasoning. 89.2% is a meaningful lead in this category.

- One-shot prompt tasks. The Reddit gamedev test showed Gemma’s tighter, faster output is a real edge on simple prompts.

- Multimodal grounding. Slight edge on MMMU-Pro and visual reasoning tasks.

- Educational and reference workloads. The shorter, more deliberate output style is easier to read and fact-check.

- Workloads that can spare 24GB+ VRAM. If you have the headroom, Gemma’s quality at higher precision is excellent.

For broader context on the open-source model landscape, the Mistral Medium 3.5 release coverage covers what is happening at the API tier in the same week, and the OpenClaw best models guide covers how Qwen and Gemma slot into agent runtimes.

Final Verdict on Qwen 3.6 27B vs Gemma 4 31B

For most developers in 2026, Qwen 3.6 27B is the more useful local model. The coding gap (70.6 vs 41.6) is decisive for anyone using a local LLM for code, and the lower VRAM footprint puts it in reach of more hardware. Gemma 4 31B remains the right choice for math-dominant workloads, multimodal grounding, and clean assistant-style chat.

Here is the final summary across the criteria that matter:

| Criterion | Winner | Margin |

|---|---|---|

| Coding (SWE-bench, Terminal-Bench, agentic) | Qwen 3.6 27B | Large (29 pts on agentic) |

| Math (AIME 2026) | Gemma 4 31B | Meaningful (~5 pts est.) |

| General reasoning (GPQA) | Qwen 3.6 27B | Modest (3.5 pts) |

| Context window | Qwen 3.6 27B | 262K vs 256K (1M extended) |

| Hardware footprint | Qwen 3.6 27B | 18GB vs 24GB+ |

| Multimodal grounding | Gemma 4 31B (slight) | <1 pt |

| Output cleanliness on one-shot | Gemma 4 31B | Reddit-validated, anecdotal |

| License | Tie | Both Apache 2.0 |

The way I’d think about it in practice: if you only run one local 27B-class model in 2026, run Qwen 3.6 27B. If you run two and have the VRAM, run Qwen for code and Gemma for math, and route prompts to whichever one fits the task.

What I would not do is pick Gemma 4 31B as a general-purpose local assistant when Qwen 3.6 27B exists at the same weight class with a 9-point aggregate intelligence lead. That gap is too big to ignore for the convenience of a slightly cleaner output style.

Frequently Asked Questions

Is Qwen 3.6 27B better than Gemma 4 31B for coding?

Yes, Qwen 3.6 27B leads Gemma 4 31B on every coding benchmark we have data for. SWE-bench Verified 77.2 vs ~75, agentic-coding average 70.6 vs 41.6, Terminal-Bench 2.0 59.3 (Gemma score not reported). The coding gap is the largest in the comparison.

Which model needs less VRAM, Qwen 3.6 27B or Gemma 4 31B?

Qwen 3.6 27B needs about 18GB at the recommended UD-Q4KXL quantization. Gemma 4 31B needs 24GB+ for a comparable 4-bit quant. On a 24GB consumer GPU, Qwen is the easier fit.

Are Qwen 3.6 27B and Gemma 4 31B both open-source?

Yes. Both ship under the Apache 2.0 license, which permits free commercial use, modification, and redistribution. There are no per-token costs and no usage gates; the only cost is your hardware and electricity.

Which model has the longer context window?

Qwen 3.6 27B has a 262K native context window, extensible to 1 million tokens via YaRN scaling. Gemma 4 31B has a 256K context window with no published extension path. For repo-level workflows, Qwen has the meaningful edge.

Does Gemma 4 31B beat Qwen on anything?

Yes, three things: AIME 2026 math (89.2% vs Qwen’s lower implicit score), MMMU-Pro multimodal grounding (76.9 vs 76.6), and one-shot prompt cleanliness (Reddit-validated, not benchmarked). For math-heavy or multimodal workloads, Gemma is the right pick.

Can I run both Qwen 3.6 27B and Gemma 4 31B on the same machine?

On a 64GB+ unified-memory Mac (M3 Max, M5 Max, DGX Spark) yes, you can have both loaded and route prompts between them. On a single 24GB GPU, you have to swap between them. The combined Qwen-for-code, Gemma-for-math setup is the optimal configuration if you have the hardware.