TL;DR: A multi-agent system is a distributed system, not a chatbot. Treat each agent like a microservice with a typed contract, pick one of four named orchestration patterns (orchestrator-worker, sequential, parallel, loop), and budget for the reliability-compounding penalty: ten 95% agents chained equals a 60% system. Skip the typed handoffs and you ship a debugging nightmare.

I keep watching teams build multi-agent systems by stacking prompts on top of prompts, then flame out two weeks into production when nothing is debuggable. The fix is not better prompting. It is borrowing 30 years of distributed-systems hard-won lessons and applying them to agents.

What I have landed on, after watching enough of these systems break, is that a production multi-agent setup looks more like a microservices stack than a chatbot. Specialized agents, typed handoffs, structured logging, and one of four named orchestration patterns chosen on purpose, not by accident.

This piece is the playbook I wish someone had given me a year ago. The reliability math, the cost math, the four patterns, what typed handoffs look like in real code, and the failure modes that kill systems even when each individual agent is doing its job.

What Does It Mean to Build a Multi-Agent System Like a Distributed System

A multi-agent system is a distributed system the moment more than one agent is in the loop, which means it inherits every problem distributed systems already solved (typed contracts, identity, provenance, replayability) and every problem they did not solve cheaply (reliability compounding, partial failures).

The mindset shift is concrete. In a single-prompt agent, you have one model, one tool list, one place to debug.

You either get the right answer or you do not. In a multi-agent system, you have specialized agents passing structured data to each other, and the same questions an SRE asks of a microservices stack now apply to your agents:

- Which agent produced this output, by version and deployment?

- Did it have permission to access the data it cited?

- If the synthesis is wrong, can you replay the exact decision chain?

- What does it mean for one agent to fail while the others succeed?

That is the lens. Once you adopt it, the rest of the design follows. According to InfoWorld’s analysis, this is exactly the architectural inflection point the industry crossed in 2026, with Gartner reporting a 1,445% surge in multi-agent system inquiries between Q1 2024 and Q2 2025.

Why Single-Prompt Agents Stop Working at Around Three Tool Calls

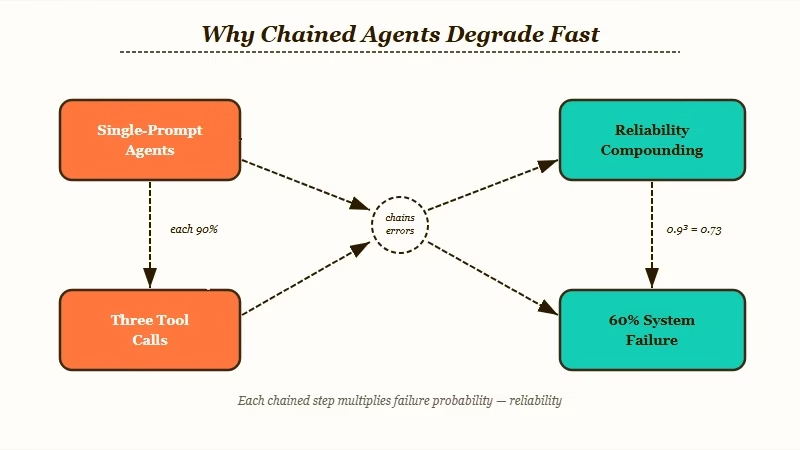

Single-prompt agents fail at scale because of the reliability-compounding tax: chain ten 95% agents and the system runs at 60% reliability, not 95%, since errors multiply on every handoff.

The math is what kills you. A single agent at 95% reliability seems fine.

A chain of five takes you to roughly 77%. A chain of ten drops you to 60%.

From my read this is the single most under-discussed number in the multi-agent space, because most demos run with two or three agents and look bulletproof.

The other quiet killer is the “three tool calls deep” debugging problem. Once something goes wrong inside a long monolithic prompt, you are staring at a blob of text with no idea where the failure happened, whether it was reasoning or tool execution, or whether the contract itself was wrong. Every developer who has built one of these has lived through it.

The fix is to think in failure boundaries, not prompts. Specialize each agent. Make the handoffs typed.

Log every boundary crossing. The result is a system you can debug like a service stack, not a system you stare at hoping it works.

What Are the Four Named Multi-Agent Orchestration Patterns

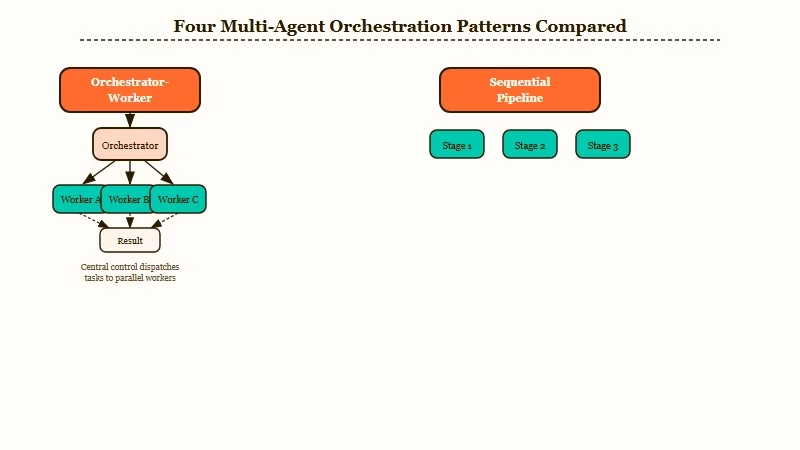

The four production patterns are orchestrator-worker (hierarchical delegation), sequential pipeline (fixed order), parallel split-and-merge (fan-out to specialists), and planner-generator-evaluator loop (iterative refinement). Pick one on purpose; do not stack them by accident.

Here is how I’d think about which pattern fits which job:

| Pattern | When to use | Watch out for |

|---|---|---|

| Orchestrator-Worker | Multi-step research, ticket triage, anything with clear sub-tasks | Orchestrator becomes the bottleneck on context window |

| Sequential pipeline | Strict A then B then C order (intake, enrich, score, output) | 6 to 9 seconds of dead air, fatal for voice or interactive UX |

| Parallel split-merge | Independent specialist tasks (bull/bear, multi-source research) | Synthesis step is where production demos quietly die |

| Planner-generator-eval loop | Code generation, content drafts, anything with a quality bar | Infinite-loop risk, must cap retries explicitly |

In my experience the orchestrator-worker pattern is the right default for most production cases. It gives you debuggable traces because the orchestrator’s plan is the audit trail.

The plan-and-execute variant of this, where an expensive model plans and cheaper models execute, can yield serious cost reductions, with Chanl’s production analysis reporting up to 83% savings (a $0.009 request dropping to $0.0015) on the right shape of workload.

Parallel patterns earn their keep when the sub-tasks really are independent. Bull/bear analysis, multi-source research, adversarial generation. The trap is that “synthesis” is where most parallel demos quietly fail in production.

If your bull and bear genuinely disagree, what is the synthesizer’s rule? Tie-break, weight, defer, surface conflicts? The right answer is usually “surface the conflicts as structured output”, not “decide the answer”. Most teams skip this and ship a synthesizer that confabulates an average.

How Do Typed Handoffs Work in Real Code

Typed handoffs replace free-text agent-to-agent messaging with schemas (JSON, Pydantic, Zod) so each agent knows exactly what shape of data it owes the next, eliminating the entire class of “blob of text” debugging failures.

A typed handoff is a contract. The bull agent does not return prose; it returns a structured object with fields like claims, evidence, risks, assumptions, confidence.

The bear agent returns the same shape. The synthesizer receives both objects and operates on the schema, not the text.

Here is what that looks like compared to the lazy version:

Before (free-text handoff that kills you in production):

bull_agent_output = "I think this is a great investment because growth has been strong and..."

synthesizer_input = bull_agent_output + bear_agent_output

synthesizer_prompt = "Decide which side is right based on the above."After (typed handoff that survives debugging):

class AgentVerdict(BaseModel):

position: Literal["bull", "bear"]

claims: list[str]

evidence: list[Evidence]

risks: list[Risk]

confidence: float # 0.0 to 1.0

bull_output: AgentVerdict = bull_agent.invoke(task)

bear_output: AgentVerdict = bear_agent.invoke(task)

synthesis_input = ConflictPair(bull=bull_output, bear=bear_output)

synthesis_output: SynthesizedReport = synthesizer.invoke(synthesis_input)

# synthesizer must return surface_conflicts: list[Conflict], not a single answerThe schema gives you four things free-text cannot: validation at every boundary, replayability with exact inputs, graceful degradation when one agent’s output is malformed, and the ability to swap in a different agent without rewriting the rest of the pipeline.

From my testing this is the single change that takes a multi-agent system from “lab demo” to “ships to production”.

If you are running this on top of automation infrastructure rather than building from scratch, Make.com handles the typed-payload routing between LLM steps natively, with built-in JSON validators on every connection. That covers the orchestration plumbing without forcing you to write a custom event bus.

What Are the Failure Modes Nobody Warns You About

The three quiet killers of multi-agent systems in production are reliability compounding, the “passing ships” coordination failure, and the 15x token tax. Each one is invisible in a two-agent demo and dominant in a ten-agent stack.

Quick reference for which failure mode bites you when:

| Failure mode | Symptom | Fix |

|---|---|---|

| Reliability compounding | System reliability decays as you add agents | Shorten chain, add evaluator gate, retry + checkpoint logic |

| Passing ships | Agents succeed individually, collective outcome is broken | Shared state store, optimistic locking, reconciliation step |

| 15x token tax | Costs balloon vs the demo math | Plan-and-execute variant, summary-only handoffs, batch where possible |

| Specification drift | 42% of failures trace to misunderstood user intent | Make user intent the first typed contract, not the last |

| Synthesis confabulation | Parallel agents disagree, synthesizer fabricates a middle | Synthesizer must surface conflicts, not pick a single answer |

The reliability compounding math I covered above. Plan for it from the start.

If your business case requires 95% reliability and you have ten agents in a chain, your individual agents need to run at 99.5%, which is not realistic. Either shorten the chain, add explicit retry plus checkpoint logic, or add an evaluator agent that gates on quality.

The “passing ships” failure mode is sneakier. Two agents both perform their tasks correctly in isolation, but the collective outcome is broken.

The textbook example: a customer support multi-agent system where the refund agent processes a refund and the replacement agent simultaneously orders a replacement, both succeeding, both unaware of each other. The customer ends up with a refund and a free product.

The fix is a shared state store with optimistic locking, exactly the pattern microservices learned the hard way.

The token tax is the third one. Multi-agent systems consume 15x more tokens than a comparable chat interaction, versus 4x for a single agent, per MindStudio’s orchestration breakdown.

That is the number to put in your business case before you ship. From my read, most teams discover this after the second invoice and then scramble to add a plan-and-execute variant retroactively.

How Do You Add Identity, Auth, and Provenance to Agents

Treat every agent like a service with its own credential, scope, and audit log. Without per-agent identity, your execution trace shows what happened but not who did it, which means in production you can detect failures but cannot assign or fix them.

The minimum production-grade identity layer for a multi-agent system has four pieces:

- Per-agent identity, including version and deployment ID, attached to every output.

- Scoped auth, where each agent has only the permissions it needs (the refund agent can refund but not order; the replacement agent can order but not refund).

- Provenance metadata on every claim, including which agent produced it, which tools it called, and which inputs it received.

- Replayability, meaning the system can deterministically reconstruct the exact decision chain from logs alone.

Once those pieces are in place, your debugging story changes from “stare at a prompt” to “pull the trace, find the failing boundary, fix the contract or the agent, redeploy”. That is the engineering experience worth aiming for.

It is also why I’d put orchestration on top of an automation platform like Make.com or a custom agent framework that handles auth at the boundary, rather than baking it into prompt strings.

For broader context on how the agent-ops landscape is changing, our piece on the OpenAI agent builder workflow covers the OpenAI Agents SDK side of this; the Claude Code subagents tutorial covers the parallel-pattern angle in the Anthropic stack; and the API-cost reduction guide covers the 90/10 routing rule that pairs well with the plan-and-execute variant.

Frequently Asked Questions

What is the difference between a multi-agent system and a chatbot?

A chatbot has one model handling everything in a single prompt; a multi-agent system has specialized agents passing structured data through typed handoffs. The multi-agent version trades simplicity for debuggability, scale, and the ability to assign accountability to a specific agent when things go wrong.

Which multi-agent framework should I use in 2026?

LangGraph for production-grade systems with complex branching and a need for deterministic execution. CrewAI for faster prototyping with role-based agents. OpenAI Agents SDK if you are already deep in the OpenAI ecosystem and accept vendor lock-in. AutoGen is in maintenance mode and not a 2026 default.

How much do multi-agent systems cost compared to single agents?

Multi-agent systems use roughly 15x more tokens than a comparable chat interaction, versus 4x for single agents. Plan-and-execute variants (expensive planner, cheap executors) can claw back up to 83% of that cost on the right shape of workload.

What is the biggest reason multi-agent systems fail in production?

Reliability compounding plus untyped handoffs. Each agent in a chain multiplies error rates, so ten 95%-reliable agents produce a 60% system. Untyped free-text handoffs make every failure undebuggable, which compounds the operational pain.

Do I need a vector database for a multi-agent system?

Not necessarily. For short-running agent workflows, a typed scratchpad in shared state is enough. Vector memory matters when agents need to recall structured facts across sessions or run for days, not when they hand off in seconds inside a single workflow.

How do I prevent two parallel agents from conflicting with each other?

Add a shared state store with optimistic locking and an explicit reconciliation step. Without that, you hit the “passing ships” failure mode where both agents succeed individually but produce a broken collective outcome. The microservices playbook applies directly here.