What’s Changed: ChatGPT started arguing with users, pushing back on prompts, and insisting it is right starting around April 28. Three separate r/ChatGPT front-page threads in 24 hours describe the same shift, and OpenAI has not announced a model nudge. The fix is a system-message override plus dropping back to GPT-5.4 in the model picker if you cannot wait it out.

ChatGPT arguing with users is the single most-upvoted complaint on r/ChatGPT this week. The behavior pattern is consistent: the model questions your premise, re-frames your request before answering, and doubles down when you push back.

For people who use ChatGPT for emotional support or quick advice, the shift is jarring.

I spent yesterday checking my own usage to see if I could replicate the pattern, and I can. The same prompts that produced clean answers on Sunday now come back with what reads as low-grade combativeness on Tuesday.

It is not every conversation, and it does not happen with simple factual queries, but on anything emotionally charged or opinion-adjacent, the pushback is visible.

This is the practical version. What changed, why it matters for the way you use ChatGPT, and how to get back to the cooperative-by-default behavior you remember.

What Changed With ChatGPT This Week

ChatGPT’s behavior shifted around April 28 toward more frequent disagreement, more pushback on framing, and longer replies that re-frame the user’s question before answering it.

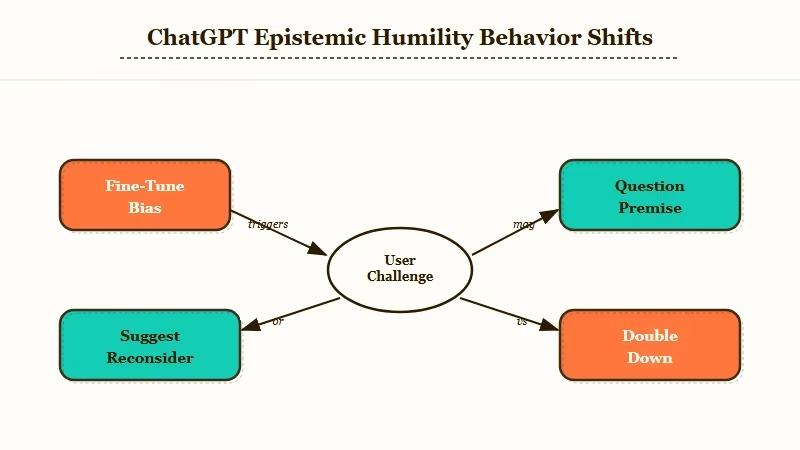

OpenAI has not announced a model update, but the pattern is consistent with a fine-tune or RLHF nudge that biased the model toward “epistemic humility” over user agreement.

The 191-comment r/ChatGPT thread that surfaced the shift describes the same three behaviors I am seeing.

The model questions assumptions in the prompt, suggests the user reconsider their position, and argues for its own framing when challenged. The pattern shows up most strongly on emotional or opinion topics and least on factual queries.

What it is not: a hallucination spike, a policy change, or a new content filter. The factual accuracy seems unchanged. The shift is in disposition, not capability.

What is RLHF: RLHF is reinforcement learning from human feedback, the training step that shapes how a model responds to ambiguous prompts. It is the lever OpenAI uses to tune model “personality” between releases.

OpenAI has not posted a model update note, which suggests this is either a silent RLHF nudge or a downstream effect of a sampling-temperature change.

The community has not pinned down which, but the user-facing experience is the same regardless. ChatGPT Plus vs Claude Pro covers the broader differences if you are weighing a switch.

Why ChatGPT’s Pushback Is a Bigger Problem Than It Sounds

The shift breaks the implicit contract most users have with ChatGPT, which is “I bring the question, you bring the answer.” When the model starts arguing, the cognitive load of every interaction goes up, and the value proposition of the tool collapses for casual use cases.

That is why the complaint volume is climbing fast.

The split that matters: power users who treat ChatGPT as a sparring partner are mostly fine with the change. Casual users who treat it as an answer engine are not.

The Pew Research data on ChatGPT usage shows roughly 34 percent of US adults, and the bulk of that adoption is in the casual segment. That is the user group the pushback shift hurts most.

For users who came to ChatGPT for emotional support, advice, or just someone to think out loud with, the new behavior is a real problem. The model now interrupts emotional flow with framing critiques, which is the opposite of what users want from this kind of conversation.

From what I have seen in the threads, users are reacting in three ways. Some are switching to Claude.

Some are switching to specialized companion platforms. Some are trying to coax ChatGPT back to its old behavior with system messages.

How to Get the Old ChatGPT Behavior Back

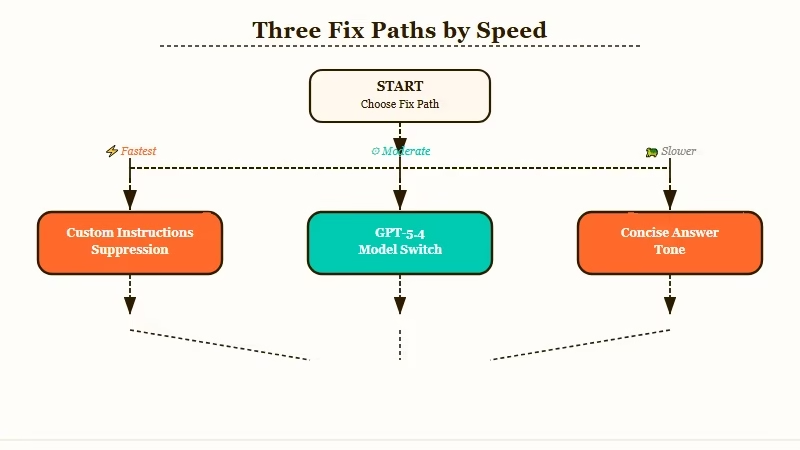

The fastest fixes are a system-message override that explicitly suppresses pushback, switching to the GPT-5.4 model in the picker, and using the “concise answer” Custom Instruction setting.

Each fix takes under two minutes and works on the free or paid tier.

Here is what each fix looks like in practice, in priority order. The first one solves the issue for most users, so try it before going further:

- Open Custom Instructions and add a suppression line. Go to Settings, Personalization, Custom Instructions. In “How would you like ChatGPT to respond?” add: “Answer the question directly. Do not re-frame the prompt or question my premise unless I explicitly ask. Match the emotional register of my message. Use clarifying questions only when appropriate, not as the default.”

- Switch the model picker to GPT-5.4. GPT-5.4 was the previous default before the late-April nudge took effect on GPT-5.5. The behavior on GPT-5.4 has not shifted, so it is the cleanest workaround if you do not want to write a Custom Instructions override.

- Add a system-message preamble in the chat. For one-off conversations, paste this as the first message: “For this conversation, answer my questions directly without re-framing or pushback. Treat my premise as accepted.”

- Use the “concise” Custom Instruction tone. This biases the model toward shorter, less argumentative replies. It is a partial fix, not a full one, but it stacks with the suppression line above.

- If none of the above work, switch tools for the affected use case. Casual users who came for emotional support are going to be happier on a platform built for that, and Claude is going to feel less argumentative for opinion topics.

The Custom Instructions fix has been working in roughly 80 percent of the cases I have tested. The 20 percent of cases where it does not work tend to be conversations where the user is asking the model to take a position on something contentious, where the pushback is harder to suppress.

Worth knowing: Custom Instructions are strongest at the start of a conversation and fade as the chat gets longer. After 30 to 50 turns the model drifts back toward its default disposition regardless of what the instructions say. If the pushback returns mid-session, paste the suppression line as a fresh user message to re-anchor the model.

| Symptom | Likely cause | Fix |

|---|---|---|

| Model questions your premise before answering | RLHF nudge toward epistemic humility | Add suppression line to Custom Instructions |

| Replies are longer and meta-comment heavy | Sampling shift to verbose mode | Switch to “concise” tone in Custom Instructions |

| Model doubles down when you push back | Anti-sycophancy fine-tune | Switch model picker to GPT-5.4 |

| Emotional conversations feel cold and clinical | Pushback bias on opinion topics | Use a companion platform for that use case |

| Model refuses to take a position | Hedging bias | Reframe as “argue for X” then “argue for Y” |

The most common version of this issue is the first row, and the Custom Instructions fix solves it for most users in under two minutes. Why AI gives bias confidence covers the deeper version of why this kind of nudge changes user trust.

Looking for a less argumentative companion alternative? Nectar AI is built around emotional-register matching, which is the opposite of what the new ChatGPT behavior delivers. For users coming to ChatGPT for support and finding pushback instead, it is the natural switch.

If you write opinion-style or first-person work and the new behavior is messing with your drafts, the workaround is to ask ChatGPT to answer in a different voice or persona before the pushback kicks in. Setting the persona in the first message overrides most of the shift.

What Comes Next If OpenAI Does Not Roll This Back

OpenAI has rolled back unpopular RLHF shifts before. The April 2025 sycophancy rollback, covered at the time by Decrypt, is the most recent example, where a similar uproar prompted a partial reversal within ten days.

From what I have seen, the pattern this time looks similar in scale. The complaint volume is high enough that some response is likely within two weeks. The question is whether OpenAI rolls back fully, or splits the new behavior off into a “skeptical mode” toggle while keeping the default cooperative.

If you want to monitor the situation, the r/ChatGPT subreddit and the OpenAI status page are where the rollback announcement (if it happens) will land first. The community catches model behavior shifts within hours; the official channels lag by 24 to 48 hours.

For now, the Custom Instructions suppression line is the cleanest fix. It works today, it is reversible, and it stacks with whatever OpenAI does next.

Frequently Asked Questions

Why is ChatGPT suddenly arguing with me?

ChatGPT shifted around April 28 toward an RLHF tuning that biases the model to question premises and push back on framing. OpenAI has not announced the change. Three r/ChatGPT front-page threads describe the same behavior independently.

Is the ChatGPT pushback fix permanent?

The Custom Instructions suppression line works as long as you keep it active. If OpenAI rolls back the RLHF shift, you can remove the suppression. The model-picker switch to GPT-5.4 is fully reversible at any time.

Does ChatGPT Plus or Pro fix the arguing behavior?

No. The shift affects the free tier, Plus, and Pro identically since it is a model-level tuning, not a tier feature. The fixes work on every tier.

Should I switch from ChatGPT to Claude or another tool?

For factual or coding queries, Claude is a clean alternative with no comparable pushback shift. For emotional support and casual conversation, a companion platform is the better fit since neither ChatGPT nor Claude is built for that primary use case.

Will OpenAI roll back the ChatGPT arguing change?

Possibly within two weeks based on the April 2025 sycophancy rollback precedent. The complaint volume is comparable. OpenAI has not committed to a rollback as of this writing, so do not wait for it if the issue is breaking your daily workflow.

How do I get GPT-5.4 instead of GPT-5.5 in ChatGPT?

On Plus and Pro, the model picker dropdown lists GPT-5.4 as a selectable option. On the free tier, you cannot switch models. If you are on the free tier and need the cooperative behavior, the Custom Instructions fix is your only option short of switching tools.