My Take: AI bias was never the mysterious emergent thing the discourse made it sound like. It is a measurable function of training-data composition, and the new pre-1931 vintage LM “talkie” just produced the cleanest possible demonstration of that. The framing that bias is hard to understand, hard to measure, and hard to fix has cost the field two years of misdirection. We can finally do the work.

The AI bias conversation has been stuck for two years on a single load-bearing assumption. The assumption is that bias in large models is an emergent property of scale, opaque to inspection, and irreducible to anything as boring as the training data composition.

Every major framing of the problem leans on some version of this. AI bias is described as “hidden”, “lurking”, “complex”, “multifaceted”, “emergent”, “non-deterministic”, and other words that are functional synonyms for “we cannot easily explain it.”

That framing fell apart on April 28, 2026, the day Alec Radford and his collaborators released the pre-1931 talkie release. The model produces output that reflects 1925 racial and gender assumptions, because that is what its training corpus contains.

The team paired it with an identical modern twin trained on FineWeb. The bias gap between the two is not an emergent property of model architecture, it is a measurable function of which corpus the model saw.

We have been told for two years that this question was hard. It turns out it was just unmeasured.

The Mainstream View And Why It Falls Short

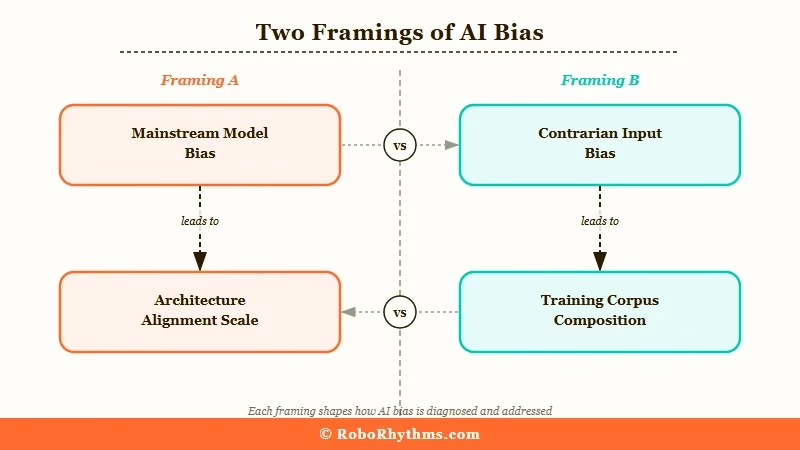

The mainstream view treats AI bias as a complex emergent problem requiring ongoing interpretive research.

Stanford’s AI Index report frames it as a multifaceted issue that intersects with model architecture, training data, deployment context, and downstream use cases.

NIST’s AI Risk Management Framework treats bias as a category of model risk that emerges from training and is mitigated through governance practices.

Major tech publications routinely describe AI bias as “lurking”, “embedded”, or “hidden in the model” in ways that suggest the bias is an architectural property rather than a data property.

That framing made sense in 2023, when nobody had a way to disentangle architecture from data without spending a million dollars to retrain a foundation model from scratch.

The constraint forced the field to treat training data as a fixed variable and look for bias signatures in the architecture, the loss function, the alignment layer, and other components that were easier to vary in research.

The problem is that the framing leaked from “we cannot easily measure this” into “this is genuinely unknowable.” A two-year drumbeat of “AI bias is mysterious” became its own conventional wisdom, and that wisdom underwrote a policy and academic agenda focused on interpretability and safety governance rather than on the boring work of curating training data better.

The way I see it, that move was understandable when training data was practically untouchable, but it has been wrong for at least the last 18 months. The framing kept going because nobody had the energy to update it. talkie is the update.

What’s Actually Happening

AI bias is overwhelmingly a function of training data composition, and the modern twin paradigm makes that measurable for the first time.

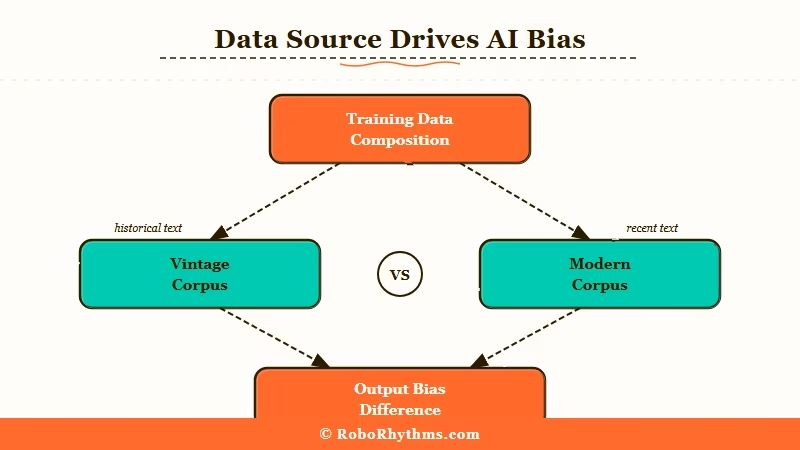

The talkie research design is what cracks this open. Same architecture, same parameter count, same training procedure, two different corpora. Whatever differs in the output between the two models is, by construction, attributable to the data difference and nothing else.

What I find most striking about the talkie result is how cleanly it isolates the variable. The vintage model produces racial and gender output that mirrors 1925 print media, while the modern twin trained on FineWeb does not.

The difference is not because the modern architecture is “less biased” or because the alignment training “fixed” something. The difference is because FineWeb was filtered, scraped, and weighted to look like 2024 web English rather than 1925 newspaper English.

In my read of the result, this lands harder than anyone expected because it disposes of an entire class of theories at once. There is no need to invoke emergent properties, attention head behaviour, or layer-specific bias accumulation.

The training corpus contains period-typical assumptions, the model surfaces those assumptions, the output reflects them. End of mechanism.

What I would argue is the deeper implication is that the modern alignment work everyone has been celebrating is not eliminating bias as a property of large models. It is post-hoc filtering of the bias the training corpus introduced.

Those are not the same thing. The first claim says modern LLMs are intrinsically less biased. The second claim says modern LLMs are exactly as biased as their training data, but with a moderation layer on top that catches the worst surfacing.

The second claim is what talkie demonstrates. The first claim was what we wanted to believe. The way I see it, the field has been writing the first-claim story while doing the second-claim work, and the gap between rhetoric and reality is going to get smaller fast now that the measurement exists.

The Part Nobody Wants to Admit

The uncomfortable implication is that AI bias is a curation problem, not a research problem. The field has spent two years building interpretability tools, mechanistic safety frameworks, and red-teaming protocols on the premise that bias is something we have to discover.

talkie shows that bias is something we put in. The gap between those two claims is not academic, it is operational.

What I find inconvenient about this is what it implies for the people who built careers on the “bias is mysterious” framing. The interpretability researchers, the safety governance consultants, the AI ethics committees that meet quarterly to discuss “emergent risks”, the policy advisors who built recommendations on the assumption that bias is intrinsic to scale.

None of those positions become useless overnight, but they all have to update their core framing, and updates of that depth are slow and reputation-fraught.

The way I see this playing out, the institutions with the most invested in the old framing will be the slowest to acknowledge the shift. Stanford’s AI Index 2026 update is unlikely to drop the multifaceted-emergent-issue framing in its first cycle after talkie.

It is more likely to add a small section on “data-driven bias measurement” while keeping the broader framing intact. That is how academic literature absorbs paradigm shocks, slowly and with footnotes.

What I would predict is happening in private but not yet in public is that the labs are starting to internally measure their own training-data composition more aggressively. The “modern twin” methodology is reproducible: anyone can train a small model on a curated subset of their corpus and compare it to a model trained on the full corpus.

That gives a per-feature attribution of how much of the model’s output behaviour comes from each data slice. The labs that run that internally first are going to come out ahead in the next regulatory wave.

Hot Take

The whole “AI alignment” industry is going to look, in retrospect, like a five-year detour the field took because nobody had the courage to say the bias problem was a curation problem. I would bet that within 18 months, the most influential AI bias paper of 2026 will be a methodology paper on training-data attribution rather than yet another interpretability monograph.

The fix for AI bias is not better interpretability research, it is better training data, and we have known that since before “alignment” became a billion-dollar word.