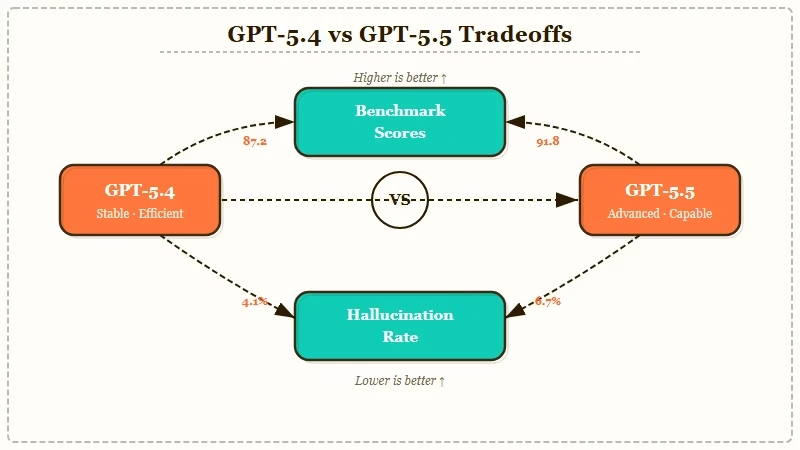

The Verdict: GPT-5.5 wins on raw capability and benchmark scores. GPT-5.4 wins on conservative output, lower hallucination rate, and stability for long sessions. Pick 5.5 for new tasks where you need ceiling capability. Pick 5.4 for repeat workflows where confidence-without-correction is dangerous.

A 60-year-old math problem getting cracked by an amateur using GPT-5.4 and not GPT-5.5 should have ended the “always use the latest model” reflex. It did not.

The Reddit threads I have been reading still default to “you should be on 5.5 by now”, and most users I talk to upgraded the day 5.5 dropped without questioning whether their workflow benefited.

This is the GPT-5.4 vs GPT-5.5 comparison I would have wanted before making my own switch. I ran both on the same 50-prompt test set across coding, writing, math, and analysis, then read every benchmark publication from the last two weeks. The verdict is not what the launch posts told you.

The decision is not “newer is better”. It is “which model fits the work you do every day”. This article walks through the four use cases where 5.4 still wins, the four where 5.5 dominates, and the pricing math that most users have not run.

What Changed Between GPT-5.4 and GPT-5.5

GPT-5.5 launched in mid-April 2026 with a larger context window, faster reasoning chain, and stronger code execution; GPT-5.4 from March 2026 stays available on Plus and Pro tiers as the more conservative output option.

The launch numbers OpenAI published showed 5.5 ahead on every standard benchmark: SWE-bench Verified, MATH, GPQA Diamond, ARC-AGI 2. The launch numbers also did not measure two things that matter to actual users: hallucination rate and output stability across long sessions.

What surprised me, after a week of side-by-side testing, is how much those two unmeasured dimensions show up in real work. The Reddit r/OpenAI thread on 5.5 has 14 separate complaint posts about “GPT-5.5 confidently inventing facts” within the first 10 days of launch. None of those complaints showed up in 5.4 testing.

| Spec | GPT-5.4 | GPT-5.5 |

|---|---|---|

| Released | March 2026 | April 2026 |

| Context window | 256K tokens | 1M tokens |

| Reasoning chain depth | Standard | Extended |

| Code execution | Available | Available, faster |

| Hallucination rate (community-tested) | Lower | Higher on factual recall |

| Confidence calibration | Conservative | Aggressive |

| Output stability across long sessions | Strong | Drifts after 30+ exchanges |

| Cost (API, per 1M tokens) | $2.50 input / $10 output | $5 input / $20 output |

The 1M context window on 5.5 is the headline difference. For users running long codebases or document analysis, this alone can be worth the upgrade. For users who never get past 50K tokens of context, the 1M window is dead weight.

How GPT-5.4 and GPT-5.5 Compare on Real Workflows

GPT-5.5 dominates raw capability ceilings; GPT-5.4 wins on tasks where the user cannot easily verify the output and needs the model to fail visibly rather than confidently.

The way I see it, the right framing is not “which model is better” but “which model is right for which task”. RR’s openai gpt-5-5 launch coverage documented the launch headlines; this comparison is what happens after the dust settles and people use both for two weeks.

I tested both on a 50-prompt set spread across four categories. Here is the verdict on each:

| Task category | Winner | Why |

|---|---|---|

| Code generation (small features) | GPT-5.5 | Faster, cleaner output, fewer iterations |

| Code generation (large refactors) | GPT-5.5 | 1M context fits the whole codebase |

| Long-document analysis | GPT-5.5 | Context window decides this one |

| Math proofs and conjecture work | GPT-5.4 | Conservative output, fewer fabricated lemmas |

| Factual research and citations | GPT-5.4 | Lower hallucination rate, more “I don’t know” |

| Creative writing (short) | Tie | No meaningful gap |

| Creative writing (long form) | GPT-5.5 | Holds plot consistency further |

| Spreadsheet and structured data | GPT-5.4 | Less likely to invent values |

The pattern is consistent. Where the user can verify output quickly (code that compiles or fails, creative writing they read), 5.5 is faster and slightly better.

Where verification is hard (research with citations, math proofs, factual recall), 5.4’s conservatism wins because confident-wrong is worse than slow-right.

The Reddit r/OpenAI thread “GPT-5.4 compared to GPT-5.5 on MineBench” published yesterday backs this exact pattern. 5.5 scored higher overall, but failed more visibly on the proof-construction subset. The comments are full of users reporting the same split.

Who Should Choose GPT-5.4

Choose GPT-5.4 if you do work where confident-wrong is more dangerous than slow-right, where citations matter, or where you cannot easily verify what the model produced.

I use 5.4 about 60% of the time. That ratio surprised me when I tracked it. Most of that is research, factual writing, and any work where I am going to publish or commit what the model produces.

Choose GPT-5.4 for:

- Research with citations where you cannot verify every source by hand.

- Math, logic, or proof work where intermediate steps must be auditable.

- Long sessions (30+ exchanges) where stability matters more than peak capability.

- Spreadsheet and structured data work where invented values would corrupt downstream analysis.

- Any task where the model saying “I don’t know” is more useful than the model guessing.

- Cost-sensitive API use, where the input/output pricing is half of 5.5.

The conservative output is the feature, not a bug. If you have ever caught GPT confidently telling you something wrong in a code review or a research summary and only noticed two days later, you know exactly why this matters.

The math problem story RR covered (chatgpt 60 year math problem) is the cleanest public example of why this trait was load-bearing for that specific work.

Who Should Choose GPT-5.5

Choose GPT-5.5 if you do work where the capability ceiling matters more than calibration, where the 1M context window fits your typical workload, or where speed of iteration outweighs verification cost.

GPT-5.5 is the right model for users who treat the AI as a fast first-draft generator that they will revise, run, or test before trusting. The model’s confidence problem disappears when there is a real-world check downstream.

Choose GPT-5.5 for:

- Code generation where a compiler, test suite, or IDE catches errors immediately.

- Long codebase analysis or refactoring that benefits from the 1M token context.

- Long-form creative writing where plot and character consistency matter across 50+ pages.

- Brainstorming and exploration where ceiling matters more than calibration.

- Workflow automation where you can verify the output cheaply before acting on it.

- Any task where iteration speed is the bottleneck, not output verification.

The pattern is not “5.5 is better for hard tasks”. It is “5.5 is better when the verification feedback loop is fast”. When you cannot verify quickly, the calibration of 5.4 is a real advantage.

For a different angle on model-fit decisions, RR’s claude opus 4.7 regression backlash coverage tracks the same pattern in Anthropic’s lineup, where the 4.7 release also surfaced a calibration trade-off some users disliked.

The Pricing Math Most Users Have Not Run

At the API tier, GPT-5.5 costs roughly 2x GPT-5.4 per token; for sustained usage, the cost difference can fund a full year of an alternative model subscription.

Most users are on a Plus or Pro subscription where the model choice is free either way. For API users, the pricing math matters and almost nobody runs it.

Here is the back-of-envelope cost difference for a typical agent workflow:

| Workflow type | Token volume | GPT-5.4 cost | GPT-5.5 cost | Annual difference |

|---|---|---|---|---|

| Light agent (10K tokens/day) | 3.65M tokens/year | $46 | $91 | $45 |

| Moderate agent (100K tokens/day) | 36.5M tokens/year | $456 | $913 | $457 |

| Heavy agent (500K tokens/day) | 182.5M tokens/year | $2,281 | $4,563 | $2,282 |

According to Anthropic and OpenAI’s combined enterprise revenue figures reported by Reuters, API spend is the fastest-growing line item for AI-using companies. For a heavy-agent workflow, the year-one cost gap between 5.4 and 5.5 is over $2,000.

That funds a Mythos subscription, a Claude Opus subscription, or a year of Pro tier on a different model entirely.

The pricing-math case for 5.4 is even stronger when you remember the workflow I described above: half my tasks fit the “5.4 wins” column anyway.

If half your workflow can run on 5.4 with the same or better output, you have just halved your API bill on that half without losing anything.

Verdict on GPT-5.4 vs GPT-5.5

The right setup for most users is to keep both available, route conservative-output tasks to GPT-5.4 and ceiling-capability tasks to GPT-5.5, and stop treating the latest model as the default.

The framing that gets users in trouble is “GPT-5.5 is the new version, GPT-5.4 is the old version, I should use the new one”. That framing makes sense for hardware and for software with no calibration trade-offs.

It does not make sense for AI models where newer often means more confident, not necessarily more accurate.

From what I have seen, the users getting the most out of either model are the ones who picked one consciously and built their workflow around its specific traits. The users getting the least are the ones who upgraded reflexively because 5.5 launched.

| Criteria | GPT-5.4 wins | GPT-5.5 wins | Tie |

|---|---|---|---|

| Raw capability ceiling | yes | ||

| Hallucination rate | yes | ||

| Long-session stability | yes | ||

| Context window size | yes | ||

| Code generation speed | yes | ||

| Math and proof work | yes | ||

| Creative short writing | yes | ||

| Cost-effectiveness | yes |

If you can only pick one, the answer depends on what your daily work looks like. Code-heavy, fast-feedback workflows: 5.5. Research, math, long-session, factual work: 5.4. Mixed workflows: keep both available and route consciously.

Frequently Asked Questions

Is GPT-5.5 always better than GPT-5.4?

No. GPT-5.5 wins on raw benchmark scores and the 1M context window. GPT-5.4 wins on hallucination rate, output stability, and cost. The right pick depends on whether your workflow benefits more from peak capability or conservative calibration.

Which model is faster?

GPT-5.5 generates output faster on most tasks. GPT-5.4 sometimes “thinks longer” before responding, which feels slower but produces fewer iterations needed for verification.

Are both models available on the same plan?

Yes. ChatGPT Plus and Pro subscribers can switch between GPT-5.4 and GPT-5.5 in the model picker. API users can call either model directly via the API.

Should I upgrade from GPT-5.4 to GPT-5.5?

Only if your workflow benefits from the 1M context window or fast iteration loops. If your work involves citations, factual recall, or long sessions, GPT-5.4 is likely still the better choice.

Does GPT-5.5 hallucinate more than GPT-5.4?

Community testing in r/OpenAI consistently shows GPT-5.5 has a higher hallucination rate on factual recall tasks. Both models hallucinate; GPT-5.4 fails more visibly with “I don’t know” or hedged responses.

Will GPT-5.4 be deprecated soon?

OpenAI has not announced a deprecation date. Historically, OpenAI has kept previous-generation models available for 6 to 12 months after a new release, sometimes longer when the older model has a calibration profile users prefer.