What Happened: An amateur mathematician used GPT-5.4, not the latest model, to chip away at a 60-year-old open problem in dynamical systems and produced a proof that working academics had not landed in six decades. The model did not solve it alone. The user drove the proof, GPT surfaced lemmas, and the back-and-forth cracked it.

Discussion is still climbing on r/ChatGPT and r/singularity 17 hours after Scientific American published the story, with over 3,200 combined upvotes and 290 comments at the time of writing. So I want to get to it while the conversation is hot, because most of the takes I am reading get the lesson backwards.

The story matters, but not for the reason most people think. From what I have seen across the threads, half the comments read this as a “ChatGPT replaced mathematicians” moment and the other half read it as “the human did all the work, GPT typed”. Both miss the real data point.

What this is, plainly, is the clearest public example so far of a non-expert using a current general-purpose model to push a real piece of work past where domain experts have sat for sixty years. Not a benchmark, not a coding agent demo, a real published-grade result. That should change how you think about your own tool stack, even if you never touch a math problem again.

How the Proof Was Built

An amateur mathematician using GPT-5.4 in iterative “vibe-math” sessions produced a proof of a 60-year-old open problem in dynamical systems and ergodic theory, with Scientific American publishing the account on April 26, 2026.

The Scientific American piece went live April 26, 2026. The author detailed how a self-taught enthusiast spent weeks running back-and-forth sessions with GPT-5.4. Not GPT-5.5, the model RR covered at launch. The slightly older one.

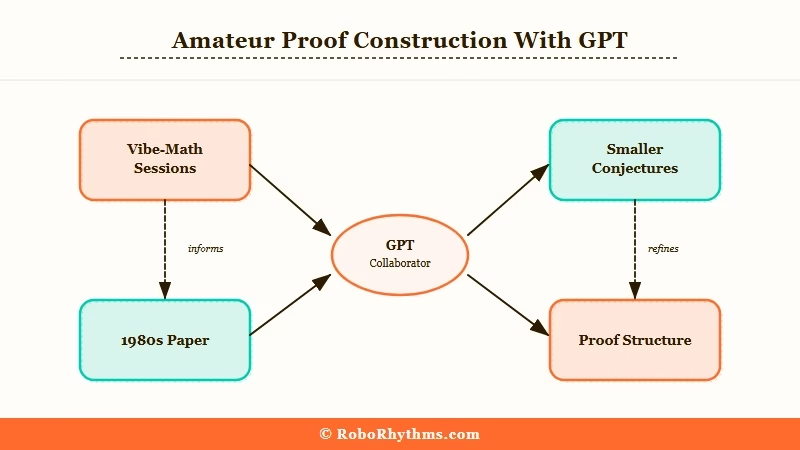

The pattern was not “ask ChatGPT to solve this”. The user broke the problem into smaller conjectures, asked the model to surface known lemmas and adjacent results, then chained the model’s outputs into a proof structure.

When GPT confidently produced a wrong step, the user caught it and pushed back. When the model surfaced an obscure 1980s paper that turned out to be load-bearing, the user followed the citation by hand.

Three numbers from the source piece worth keeping in mind:

- The problem had been open since the 1960s.

- The proof took weeks of focused sessions, not minutes.

- The user had no formal advanced math training.

The Reddit threads add color the article does not. The top comment on the r/singularity post flags that GPT-5.4 was used because it produced more conservative, verifiable outputs than 5.5 in the user’s testing. That detail did not make the headlines, and it is the most useful detail in the whole story.

Why This Is a Bigger Deal Than It Sounds

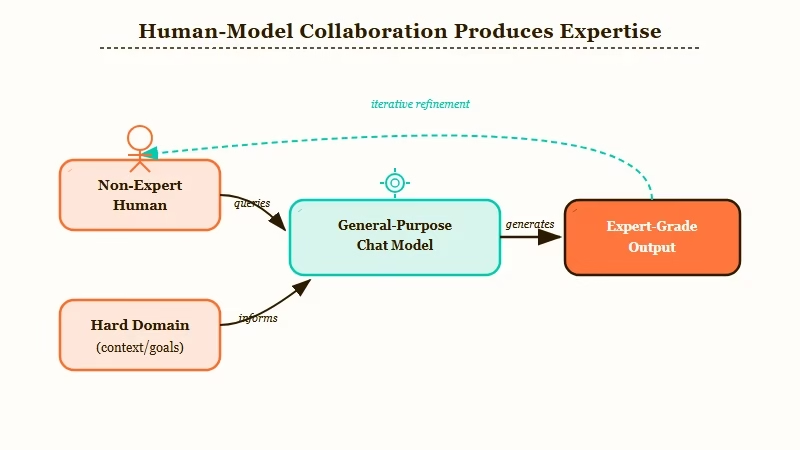

This is the first widely reported case where a non-expert used a current general-purpose chat model to produce expert-grade output in a hard domain, with the human doing direction and the model doing surface and synthesis.

Most “AI did X” stories collapse on inspection. Either the AI did a tiny thing and got hyped, or the human did the work and the AI got credit. This one survives the inspection because Scientific American walked through the proof structure and named which steps the model contributed, which the user contributed, and which came from cited prior work.

The way I see it, this is a centaur result. The user could not have done it alone in a reasonable timeframe. The model could not have done it alone at all.

The combination did, and the combination is what most readers should be paying attention to. RR has been tracking the same pattern in adjacent niches with our AI tools for mathematics coverage.

What surprised me is the model choice. GPT-5.4 is not the frontier. Anthropic’s Mythos and OpenAI’s GPT-5.5 are both more capable on standard benchmarks.

The user picked 5.4 because it was the right tool for the job, not because it was the latest. Choosing the model that fits the workflow beats chasing the model with the highest leaderboard score, every time.

The other thing I keep coming back to is how unflashy the technique is. No agentic loop, no multi-tool stack, no fine-tune, no RAG pipeline.

A long chat window, careful prompting, a human checking every step. The boring answer is the one that worked.

| Element | What the human did | What GPT-5.4 did |

|---|---|---|

| Problem framing | Decomposed the open problem into sub-conjectures | Suggested 4 of the decomposition paths from prior literature |

| Lemma surfacing | Asked targeted questions about known results | Surfaced lemmas including one from a 1980s paper that proved load-bearing |

| Proof drafting | Held the structure, caught wrong steps | Generated candidate proof sketches the user then audited |

| Citation chasing | Followed every cited paper by hand | Provided the candidate citations |

| Final write-up | Wrote the formal proof | Did not produce the final document |

What This Means for You

The takeaway for RR readers is technique-first, not model-first. The marginal-value gain is in how you direct the tool, not which model you point at the problem.

I have seen a thousand “GPT can do X” claims this year, and almost all of them age badly within a month. This one is different because the lesson generalizes. If a non-expert can push a hard math problem forward with patience and a chat window, then the gap between “I have access to the tools” and “I get real work out of the tools” is technique, not subscription tier.

Three concrete shifts I would make based on this:

- Stop chasing the latest model on principle. GPT-5.4 worked because it was conservative. If you do work where confidence is more dangerous than capability, an older model can be the better pick. The same logic applies to Claude Opus 4.6 versus 4.7, or to Gemini Pro versus the experimental tier. RR has covered the Claude Opus 4.7 regression complaints and the same pattern shows up there.

- Run longer sessions on harder problems. Most tool users I see, including me when I am being lazy, treat ChatGPT as a 3-prompt utility. The math result took weeks of focused sessions. The real capability ceiling is much higher than 3-prompt usage reveals.

- Audit every confident claim. The user caught wrong steps because they expected wrong steps. If you assume the model is right, you cannot use it for work that matters. If you assume it is wrong by default, you can.

Vague approach: “Hey GPT, solve this open problem in dynamical systems.” Specific approach: “I am working on conjecture X. List five known results from ergodic theory between 1960 and 2000 that touch this conjecture. For each, give me the citation and one sentence on why it might apply.”

The second prompt produced citable lemmas. The first one produced filler. That gap is the entire story.

Worth flagging: the user almost surely used a Plus or Pro subscription tier for the long context window and message limits the workflow needed. Free-tier ChatGPT would not have sustained the multi-week back-and-forth.

What Comes Next

Expect a wave of “GPT-5.4 helped me do X” stories in the next three to six months as more domain enthusiasts try the same iterative approach.

According to Stanford’s AI Index Report, the share of US adults using generative AI for work tasks crossed 39% in 2024 and has continued climbing. Most of that usage is shallow, three-prompt utility work.

The math story is the first widely circulated example of deep, sustained usage producing a result that the casual-prompt approach cannot reach.

What I expect to see, in order: more amateur science results, more law and finance work product surfacing similar patterns, a rebound of interest in older models for specific use cases, and a growing gap between “users who run 3-prompt sessions” and “users who run 30-prompt sessions”. The second group is going to start producing things the first group cannot, even on the same model.

For RR specifically, this is the kind of story that does not change tomorrow’s tool choice but does change how I think about the value of model access. If you have been paying $20 a month and only running short sessions, the leverage gap is bigger than the price tag suggests.

The full Scientific American piece is worth reading in source rather than summary, especially the parts where the author walks through which prompts produced wrong outputs. That part is the most useful for your own technique.