My Take: Heavy ChatGPT use is not making writers lazy in the way critics describe. It is doing something subtler and worse. It is removing the friction that used to produce voice, and the productivity story is hiding the cost.

I sat down to write a 600-word section on Tuesday and could not start. Not blocked in the usual way, where the words are jammed up and I have to find a way around them. Blocked in a way I had not felt in years.

What I noticed was that I could not write a bad first draft. Six months of running every paragraph through ChatGPT to clean up the grammar, restructure the flow, or just “make it read better” had gradually trained me out of the part of writing that produces voice in the first place.

The mainstream framing of the AI-and-writing debate has settled into two camps. One says AI is a productivity tool that frees up human creativity; the other says AI is making people lazy.

I think both camps are missing the real mechanism, and I have been thinking about why for the last week. This piece is what I wish someone had written for me four months ago, when the productivity gains looked like a free trade and the cost was not legible yet.

The Mainstream View And Why It Falls Short

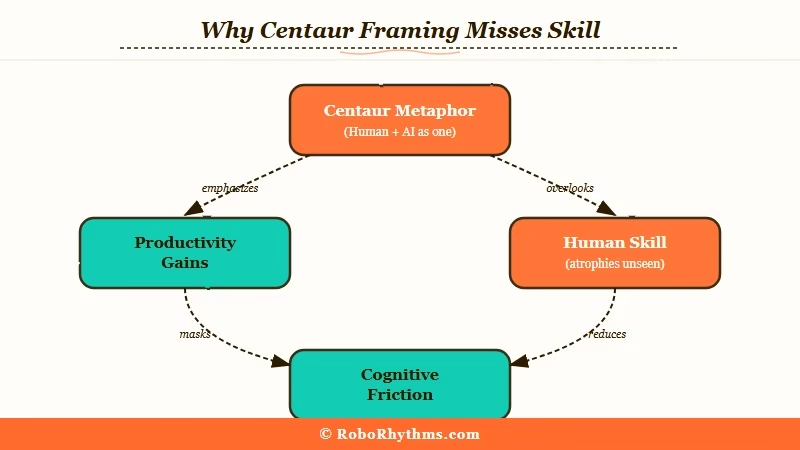

The mainstream view, argued most loudly in Ethan Mollick’s newsletter One Useful Thing, is that AI is a “co-intelligence” that augments human cognition without replacing it. The metaphor is the centaur: human plus model, working together, each doing what they are best at.

The argument is that anyone who refuses to learn this collaboration is leaving productivity on the table.

I want to be fair to this view. It is not wrong.

People who use AI well in their workflows produce more output, faster, with comparable quality. The published productivity studies back this up across knowledge work, customer service, and creative tasks.

The framing falls short because it stops at productivity and never asks what the human side of the centaur loses by not doing the parts the AI now does. It treats writing skill as a fixed capability you bring to the partnership, not a muscle that requires use to maintain.

That is the gap I want to write into. Not “AI is bad” or “AI makes you lazy.” The real mechanism is more boring, and a lot harder to argue with once you have noticed it in your own work.

The centaur framing is what most heavy AI users adopted in 2024 and 2025, including me. It is the framing that lets you feel productive without examining the trade. What I have come to think, after six months of trying to live inside it, is that the centaur lasts about as long as the human half remembers how to ride solo.

What’s Actually Happening

What I have seen, in my own writing and in the writing of every heavy-AI-using friend I have asked, is a specific kind of skill atrophy. Not the cliché of “people cannot spell anymore.” Something narrower and more useful to talk about.

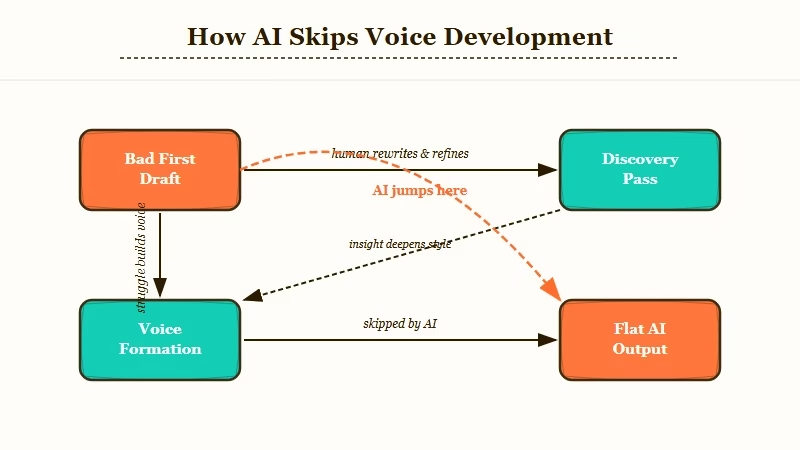

The skill that goes first is the willingness to write a bad first draft. The brain that has been outsourcing the cleanup work for six months loses confidence that it can produce something messy and incomplete and still find its way to good prose by editing.

You sit down to draft, your inner editor is louder than your inner writer, and the AI is right there, ready to bypass the discomfort by giving you a polished version of an idea you have not finished thinking yet.

That bypass is the entire problem. The bad first draft is where most writers find their real voice.

It is messy because the writer is thinking in real time. The cleanup pass is where you discover what you really meant.

If the cleanup pass is now the AI’s job, the discovery pass is also the AI’s job. You are a step removed from your own thinking, and the prose comes out sounding like the average prose the model was trained on, because that is what the model produces when you hand it a sketch.

Across roughly a hundred drafts I have compared over the last two months, my AI-cleaned versions are consistently a little better than my raw drafts on grammar and pacing, and consistently flatter on voice and surprise.

This is not a productivity argument. The output is fine. The work gets done.

What is missing is the part of writing that came from struggling with a paragraph long enough to figure out what the paragraph was about. That struggle was the unit of writing skill.

AI removes the struggle and ships the output, and the writer’s skill atrophies in the same way muscles atrophy in zero gravity.

I find this is most visible in the second draft, not the first. The first AI-assisted draft reads fine. The second one, where I am trying to write something different and I no longer have the muscle to produce a usable bad first draft to revise, is where the gap shows up.

The same pattern shows up in the features sold as the fix. The GPT 5.5 launch added voice cloning that lets you draft in your own style, and it is being marketed as a solution to the voice-flattening problem.

In my testing so far, it preserves surface mannerisms and still flattens the underlying decisions about what to say. The voice clone is a copy of how you sound when the AI is not flattening you, applied to writing the AI is flattening anyway. The marketing is reassuring; the result is not.

The Part Nobody Wants to Admit

The part nobody wants to admit, and the reason this piece is harder to write than it should be, is that the productivity gains are real. The cost I am describing does not show up on any spreadsheet.

It shows up in the gap between what you can produce with AI and what you can produce when AI is not around, and that gap widens slowly enough that you do not notice until you sit down to write a 600-word section without a model and cannot start.

The other thing nobody wants to admit is that this is not unique to writing. I have heard the same complaint from working coders about Claude Code, from designers about Figma’s AI tools, from analysts about ChatGPT’s data interpretation.

Across the knowledge workers I have talked to, the people who lean hardest on AI are the ones reporting the largest erosion of the underlying skill, over a timescale of about six to nine months.

What I see in my own work is that the erosion is reversible. After two weeks of writing without AI, the bad first drafts come back.

The voice comes back. The struggle comes back too, and that is the price most users will not pay.

That is the uncomfortable conclusion. The skills atrophy when you stop using them, and the struggle that maintains them is exactly what AI is best at removing. There is no version of “use AI as a co-intelligence” that does not involve choosing which struggles to keep doing yourself.

The productivity gains are real and the skill atrophy is real and they are happening at the same time. Most working writers, including me until last week, are paying the second cost without naming it.

The mainstream framing is still about productivity because nobody who builds these tools wants to put the trade on the box. I would not want to either, if I sold the tools.

I have been writing a lot more about prompt design lately as a way to fight this. My piece on prompt engineering for ChatGPT covers the technical side.

The real answer is more about which prompts you do not run than about running prompts better. Cutting back is harder than getting better at prompting, and the writers I respect are mostly choosing the harder path.

The same dynamic is starting to surface in adjacent niches. I argued in my piece on why companion subscriptions will collapse that the productivity-and-trade pattern is going to play out in companion AI on a similar timeline, just for different reasons. The trade in writing is voice. The trade there is depth. Both are invisible on the spreadsheet that justifies the spend.

Hot Take

ChatGPT is not making writers lazy. It is making writers forget that the friction was the skill, and the fix is to use AI less on purpose so the bad first draft stays a thing you can still produce.