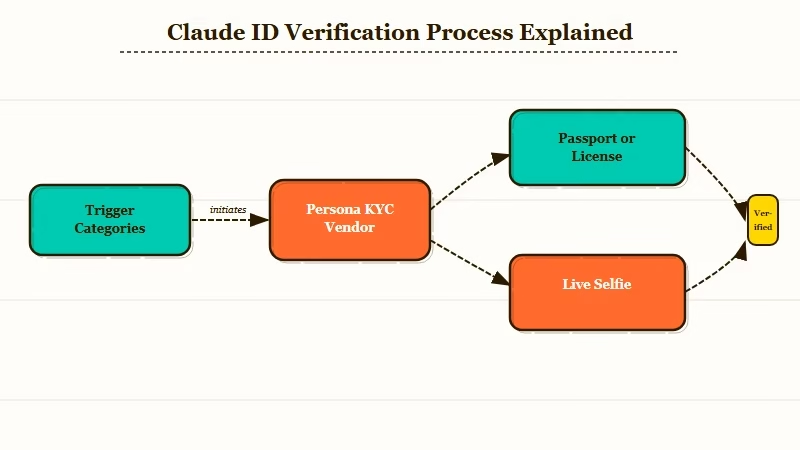

What Happened: Anthropic quietly rolled out mandatory ID verification for Claude in April 2026, asking certain users for a government-issued ID and a live selfie. The check is run by Persona, a KYC vendor also used by fintechs. Four trigger categories decide who gets the prompt.

Anthropic’s Claude ID verification went live for a subset of users on April 14, 2026, and the internet found out two days later. If you switched to Claude because you did not trust the surveillance posture at other labs, the irony is hard to miss. You are now being asked for a passport.

Anthropic has not made this a blanket rule. It is targeted. But the categories it targets are broad enough that a large chunk of the user base could see the Persona verification modal on their next login, and a meaningful slice of that chunk will refuse to complete it.

I have spent the last day reading through the help center page, the coverage from The Register, and the Reddit and X threads. The picture that comes out of that is more nuanced than the headlines suggest, but also worse in one very specific way.

What Actually Happened With Anthropic’s Claude ID Verification

Anthropic Claude ID verification is a Persona-backed KYC flow that asks selected users for a live selfie and a government ID before they can keep using Claude.

The help center page went live on April 14, 2026, and Anthropic confirmed the rollout publicly on April 16.

The accepted documents are an undamaged passport, a driver’s license, or a national identity card. The selfie is not a static upload. It has to be taken live on camera inside the verification widget, the same way Coinbase and Revolut handle onboarding.

Persona, not Anthropic, stores the document data. Anthropic gets a pass-or-fail signal. Persona states the data is not used to train models and is only handed over under a valid legal process.

The four trigger categories are where this gets interesting.

| Trigger | Who it targets | What happens |
|---|---|---|
| Usage policy violations | Repeat offenders on cyber or restricted-content rules | Account locked until verified |
| Unsupported locations | Logins from mainland China, Russia, North Korea | Account locked until verified |
| Terms of service violations | General legal agreement breaches | Account locked until verified |
| Under-18 users | Minors flagged by behavior signals | Account locked until verified |

The unsupported-locations bucket is the one carrying the most weight. It is the stated reason the system exists. Anthropic wants to make it harder for users in countries the US government has sanctioned to access Claude through shared accounts or VPNs.

What the help center does not spell out is the false-positive rate. A Western user on a consumer VPN, a traveler using hotel Wi-Fi in Hong Kong, or a researcher using a Tor exit node can all trip the geographic flag. Anthropic has not published data on how many of the prompts land on users who did nothing wrong.

Why Claude’s KYC Rollout Is a Bigger Deal Than It Looks

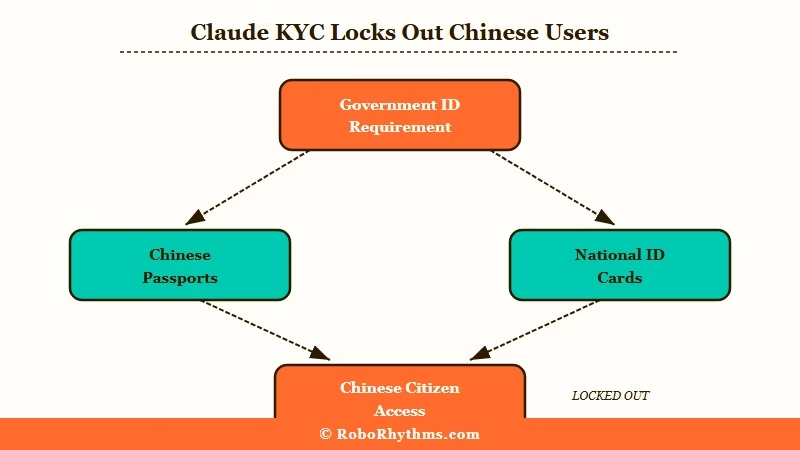

The Claude KYC rollout is significant because it makes Claude the first major consumer AI chatbot to require government ID, and because the accepted-document list excludes most Chinese nationals. That second part is where the geopolitics start biting.

For China, the accepted ID list recognizes only passports, not Chinese national ID cards. Most Chinese citizens do not hold a passport. They do not need one for domestic life and the application process is expensive and slow.

Functionally, this means Chinese users are excluded by document availability, not by nationality. Anthropic avoided a clean ban and got the same effect. Whether that was the intent or a side effect of the verification partner’s default document list, the outcome is identical.

The second layer is privacy posture. A big share of Claude’s organic growth came from users actively switching from ChatGPT because they wanted a lab that talked about safety and restraint. I have seen the ChatGPT-to-Claude movement argue this exact point in Reddit threads for over a year.

Asking those users for a passport and a selfie is a brand move, not just an operations move. It tells them the privacy-first framing was a default setting, not a principle, and the default changed the moment enforcement became easier with a third-party KYC partner.

The third layer is the pattern this fits into. In the last six months we have seen Character.AI face scans, Janitor AI ID verification, and the AI companion regulation wave. Claude is not an AI companion platform, but the tooling is converging.

What the Claude Government ID Requirement Means for You

The Claude government ID requirement applies to you only if you fall into one of the four trigger buckets. In practice, most users will not see the prompt, but a few categories absolutely will. It is worth knowing which side of that line you sit on before your next login.

From what I have seen across the Reddit threads and my own account, these are the user types most likely to get flagged.

- Users logging in from a country on the restricted list, even briefly while traveling

- Users on consumer VPNs whose IP geolocates to a restricted region

- Users who received a policy warning in the last 90 days for a jailbreak attempt or a disallowed content prompt

- Users under 18 who created an account with an age that contradicts behavior signals

- Users whose account has been used across too many IP addresses in a short window, which is the shared-account heuristic

If none of these fit you, the odds of seeing the prompt are low for now. If any of them fit you, plan for it.
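To make the shared-account heuristic from the list above concrete, here is a minimal sketch of how a check like "too many IP addresses in a short window" could work. Anthropic has not published how its detection works, so everything here is an assumption for illustration: the function name `looks_shared`, the 24-hour window, and the threshold of five distinct IPs are all hypothetical.

```python
from collections import deque
from datetime import datetime, timedelta

# Hypothetical values; the real window and threshold are not public.
WINDOW = timedelta(hours=24)
MAX_DISTINCT_IPS = 5


def looks_shared(logins, window=WINDOW, max_ips=MAX_DISTINCT_IPS):
    """Return True if any sliding window of length `window` contains
    more than `max_ips` distinct IP addresses.

    `logins` is an iterable of (timestamp, ip) tuples.
    """
    recent = deque()  # (timestamp, ip) pairs inside the current window
    counts = {}       # ip -> number of logins inside the current window
    for ts, ip in sorted(logins):
        recent.append((ts, ip))
        counts[ip] = counts.get(ip, 0) + 1
        # Drop logins that fell out of the window ending at `ts`.
        while ts - recent[0][0] > window:
            _, old_ip = recent.popleft()
            counts[old_ip] -= 1
            if counts[old_ip] == 0:
                del counts[old_ip]
        if len(counts) > max_ips:
            return True
    return False


# Six different IPs inside one hour would trip this hypothetical flag.
start = datetime(2026, 4, 14, 9, 0)
burst = [(start + timedelta(minutes=10 * i), f"203.0.113.{i}") for i in range(6)]
print(looks_shared(burst))
```

The takeaway from the sketch is that a rule like this cannot tell a shared account from one person hopping between a phone network, an office, a hotel, and a VPN in the same day, which is part of why the false-positive question matters.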

Here is the realistic decision you face when the modal shows up.

Example scenario: You open Claude on a Tuesday morning and the chat window loads a Persona verification frame instead of your last conversation. You have three options. One, complete the check with a passport and a live selfie, which gives Persona your document data and gets you back into Claude within about five minutes. Two, refuse, which locks the account until you comply. Three, cancel the subscription and move to a lab that does not require KYC, with the tradeoff that most US labs are converging on similar tooling.

The black market has already responded. The South China Morning Post reported a spike in verified-account resale on Chinese forums within 48 hours of the rollout.

Resellers are onboarding accounts with compliant documents and renting them out at a markup. Anthropic will have to decide whether it treats the resale market as a separate enforcement problem or as evidence the verification layer works as intended.

The privacy tradeoff is the part I would think hardest about. Persona is a reputable vendor, but no vendor is breach-proof.

Handing over a passport to keep using a chatbot is a new cost in the mental model of AI subscriptions, and it is the kind of cost that stacks. Every lab that adds KYC makes it more normal for the next one to add it too.

What Comes Next for Claude Users Refusing Verification

What comes next is probably the playbook spreading to OpenAI and Google within six months, with the unsupported-locations category expanding first and the policy-violations category expanding second. That is the direction the enforcement tooling points.

Two signals make me confident in that call. First, Anthropic’s enforcement on the third-party access side has been aggressive over the last quarter, and KYC is the logical next step after API-key revocation. Second, the US executive branch spent Q1 2026 pushing labs toward sanctions compliance, and this is the cleanest enforcement mechanism that has emerged.

Users refusing verification have three practical paths. They can move to a US lab that has not yet implemented KYC, which today means a short list that is likely to shrink. They can move to an open-weight model running locally, which trades capability for sovereignty. Or they can accept the verification, which is the path most users will take.

The thing I would watch for over the next 60 days is whether Anthropic expands the trigger list to include commercial API customers, which would shift the story from consumer privacy to enterprise procurement. If that happens, procurement teams at banks and hospitals will have to decide whether their compliance frameworks accept a third-party KYC step as part of AI vendor onboarding. That is a much bigger conversation than a consumer chatbot rollout.

For now, the practical advice is to check your login region, check whether your account has any recent policy warnings, and decide in advance what you will do if the Persona modal shows up. It is easier to make that call on a quiet Tuesday than in the middle of a workflow that is already open.