What Happened: Moonshot AI released Kimi K2.6 on April 20, 2026, an open-source 1T-parameter mixture-of-experts model that posts 58.6 on SWE-Bench Pro and runs 300 sub-agents across 4,000 coordinated steps. It costs roughly a quarter of Claude Opus on the API.

Moonshot AI released Kimi K2.6 yesterday, and the numbers are doing something I did not expect to see this year.

The open-source camp finally posted a frontier-tier coding score with pricing that makes Claude Code and Cursor look expensive overnight.

This is not a small bump on K2.5. The architecture changed, the agentic depth jumped from a few dozen sub-agents to 300, and the API rate is sitting at $0.60 input and $2.50 to $3.00 output per million tokens.

For anyone running multi-step agents in production, those three things together are the real story.

What Actually Happened

Kimi K2.6 is Moonshot AI’s new open-source 1T-parameter MoE model, released April 20, 2026, that scores 58.6 on SWE-Bench Pro at roughly a quarter of Claude Opus’s API cost.

Moonshot AI shipped Kimi K2.6 on April 20, 2026 with full open weights, full API access, and a benchmark suite that puts it ahead of GPT-5 and roughly even with Claude Opus on production coding work.

The model is a 1 trillion parameter MoE with 32 billion active parameters per token and a 256K context window.

The headline benchmark numbers, pulled from Moonshot’s official release post and the Marktechpost analysis:

| Benchmark | Kimi K2.6 | Claude Opus 4.7 | GPT-5 |

|---|---|---|---|

| SWE-Bench Pro | 58.6 | 59.1 | 51.2 |

| HLE-Full | 54.0 | 56.3 | 49.8 |

| BrowseComp | 83.2 | 81.0 | 74.5 |

| API input cost (per 1M tokens) | $0.60 | $3.00 | $1.25 |

| API output cost (per 1M tokens) | $2.50 to $3.00 | $15.00 | $10.00 |

The agentic numbers are where the architecture work shows up. K2.6 can spin up 300 concurrent sub-agents and chain them across 4,000 coordinated steps without losing the thread, which is the kind of depth that breaks most current open-source agents inside the first 50 steps.

That is the same problem I covered in why AI agents lose focus earlier this year.

If you used the K2.5 OpenClaw setup a few months back, K2.6 runs on the same local stack with no migration work beyond pulling new weights.

Why This Is a Bigger Deal Than It Sounds

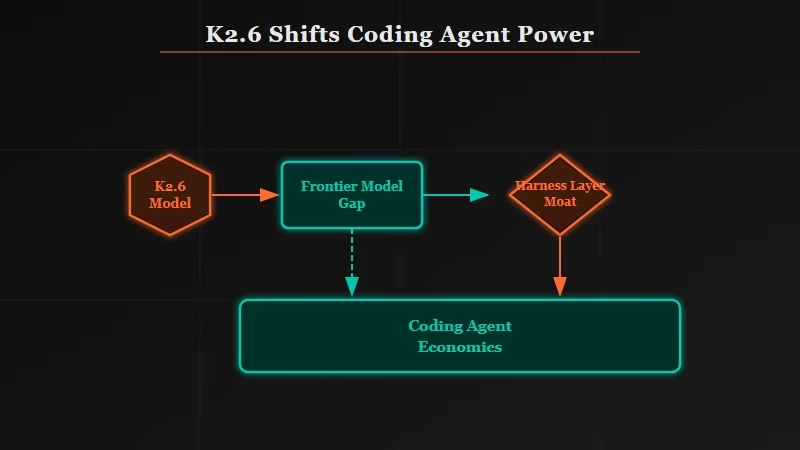

K2.6 matters because it is the first open-source model to match closed frontier labs on production coding benchmarks, eliminating the assumed 6 to 12 month lead and forcing every coding agent product to rethink pricing.

Frontier coding scores at a quarter of the price changes the unit economics of every coding agent product on the market.

If you are paying for Cursor or Claude Code at scale, your runway just got longer, or your margin just got better, depending on which side of the bill you are on.

The bigger structural shift is open weights at this benchmark tier. Until yesterday the implicit story was that closed labs would always hold a 6 to 12 month lead on coding, and open-source would catch up to last year’s frontier.

K2.6 collapses that gap to roughly zero on at least two of the three benchmarks that matter for production agent work.

What this tells me is that the moat for coding agents is no longer the model. It is the harness. Cursor, Claude Code, and Codex are not winning because they have better models, they are winning because they have better tooling around the model.

That harness layer is the next thing competitors will need to attack, and as I argued in is human coding really over, the gap between a frontier model and a usable coding agent is mostly the surrounding system.

What This Means for You

For anyone running coding agents in production, K2.6 means you should test it against your eval suite this week, evaluate a local-only workflow, and expect Anthropic and OpenAI to respond within a quarter.

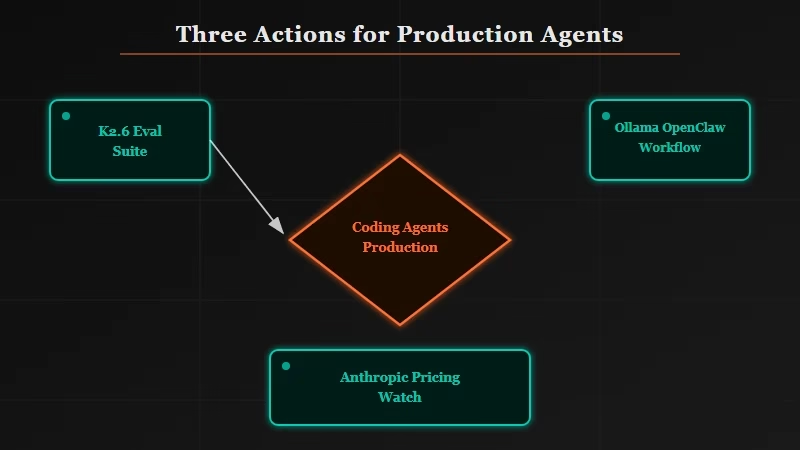

If you are running coding agents in production, three things to do this week:

- Run K2.6 against your existing eval suite. Benchmark scores tell you the average, but your codebase has a shape that benchmarks do not. Pull the weights, point your harness at the API, and rerun your last 50 real tasks. A model that drops to 40% of the cost while matching 95% of the quality is worth the migration friction.

- Look at your local-only workflow. K2.6 runs on the same Ollama and OpenClaw setup K2.5 used, which means you can run it offline on a Mac Studio or a workstation with enough VRAM. For anyone working under data-residency constraints, this removes the last reason to stay on a closed API for production coding.

- Watch what Anthropic and OpenAI do in the next 30 days. The OpenAI Codex computer-use release was already pushing into agentic territory, and now there is open-source pricing pressure on top of it. Expect Claude Opus pricing or context window changes inside a quarter.

What Comes Next

The likely next move is a K2.6-mini distilled variant within roughly six weeks, plus rapid backend support from Cursor, Windsurf, and other agent harness makers competing for the price-sensitive segment.

Moonshot has historically followed major releases with a smaller distilled variant about 6 weeks later, so a K2.6-mini at 70 to 100B parameters is the most likely next move.

That would put frontier coding within reach of a single high-end consumer GPU, which is the threshold where open weights actually displace API spend for indie developers and small teams.

The other thing to watch is how fast Cursor, Windsurf, and the other agent harness makers add K2.6 as a backend option.

The harness layer is the real product now, and the first one to cleanly support a $0.60 input model alongside Claude and GPT will pick up the price-sensitive segment of the developer market overnight.