TL;DR: Local agent TTFT on a Mac Studio M3 Ultra dropped from 22 seconds to under 0.2 seconds once prefix caching was working. The short version: skip vMLX’s MLLM path if your model has a vision_config block, roll back to mlx-vlm 0.4.4, and know that hybrid SSM models need a cache the mainline MLX tools still handle wrong.

If you are running a local agent on Apple Silicon and your assistant takes a small coffee break every time you hit enter, prefix caching is the single biggest lever you have.

This writeup is the stack I landed on after chasing time-to-first-token from 22 seconds down to 0.19 seconds, including the trap that cost me a full day.

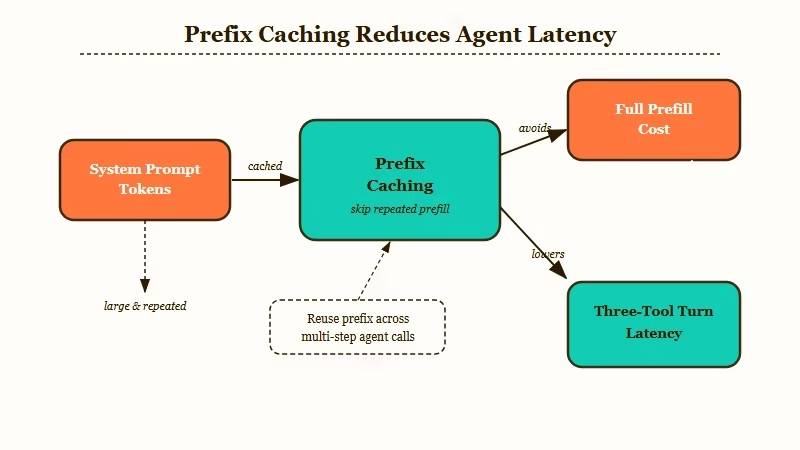

The setup matters because the math changes completely once your agent is chaining tool calls. A 22-second prefill on one request is painful. A 66-second prefill on three chained tool calls is a product that nobody will use, including you.

I want to be specific about why this is worth the effort. Local agent stacks only make economic sense when you own the hardware and the hardware runs at close to cloud latency.

The new Mac Mini and Mac Studio shortage is the loudest signal that thousands of operators are betting on this trade. The bet only pays out if you do the inference engineering.

The Problem with Repeated Prefixes on Local Agents

On a local agent stack, the same 10,000 to 12,000 tokens of system prompt plus tool definitions are sent on every single request, and without prefix caching you pay the full prefill cost every time.

Here is the concrete baseline. I run Qwen3.5-397B-A17B at 6-bit on a Mac Studio M3 Ultra with 512GB unified memory. The agent sits behind a local proxy, holds about 12,000 tokens of prefix, and chains up to three tool calls per turn.

Without any prefix caching, a single turn takes 22 seconds to start generating. A chained three-tool turn takes 66 seconds of pure prefill before you see a character.

That is not a latency issue. That is a dead product.

From what I have seen, most people running local agents never measure this. They compare raw tokens-per-second on a fresh prompt and think they have a working stack. Tokens-per-second on the warm path is meaningless if every request is cold.

The fix is prefix caching. The MLX ecosystem now has three serious options, and picking the wrong one will cost you a day.

Why vMLX Looked Like the Answer (And Why It Wasn’t)

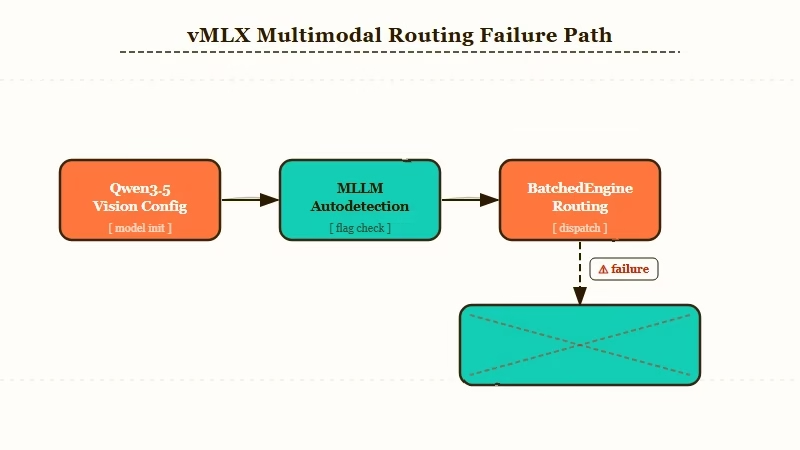

vMLX ships with LRU trie based prefix caching, paged KV cache, and continuous batching. It works on toy prompts, then silently crashes on real workloads if your model is detected as multimodal.

My first pass used vMLX because it is the most featureful serving stack in the Apple Silicon space. Here is what I ran.

- Install vMLX.

pip install vmlx-serverinside a fresh venv with MLX 0.22.0. - Serve the model.

vmlx serve Qwen3.5-397B-A17B-6bit --port 8080 --prefill-step-size 512 --enable-prefix-cache. - Hit it with the real agent prefix. 12,000 tokens of system prompt plus 59 tool definitions.

- Warm request. TTFT drops to about 2 seconds. 9,010 of 9,011 prefix tokens reported cached.

- Run a real conversation. 67K token prefills kill the process inside 90 minutes, Metal throws Insufficient Memory, three Python crash reports later you are back to square one.

The gotcha took me hours to pin down. The --prefill-step-size 512 flag, which is how you chunk a long prefill into memory-safe batches, is only honored in vMLX’s SimpleEngine. The BatchedEngine, which vMLX uses automatically for any model it classifies as MLLM, silently ignores the flag.

Qwen3.5 ships with a vision_config section in its config.json. Even though I was never sending images through the model, vMLX’s autodetection read that block and routed the model through the MLLM BatchedEngine.

Chunked prefill was off. Real prefills OOMed.

Here is how the two engines compare on the stuff that matters for a single-user local agent.

| Feature | vMLX SimpleEngine | vMLX MLLM BatchedEngine | mlx-vlm 0.4.4 |

|---|---|---|---|

| Prefix caching | Yes, LRU trie | Yes, LRU trie | Yes, built-in |

| Chunked prefill flag honored | Yes | No | Yes, built-in |

| Paged KV cache | Yes | Yes | No |

| Continuous batching | Yes | Yes | No |

| Crashes on 67K prefills with multimodal config | No | Yes | No |

| Right choice for solo local agent | Maybe | No | Yes |

If you are not running a multi-user inference server, the paged KV cache and continuous batching in vMLX do nothing for you. You are paying a stability cost for features you will never use.

The Rollback to mlx-vlm 0.4.4 with Built-In Prefix Caching

mlx-vlm 0.4.4 now ships prefix caching out of the box with no flag and no configuration. For a single-user local agent, this is the cleanest path.

The rollback was boring, which is what you want from a fix. Here is the exact stack that is running on my Mac Studio right now.

- Uninstall vMLX.

pip uninstall vmlx-server vmlx-core. - Install mlx-vlm 0.4.4 pinned.

pip install mlx-vlm==0.4.4. - Start the server with chunked prefill.

python -m mlx_vlm.server --model Qwen3.5-397B-A17B-6bit --port 8080 --prefill-step-size 512. - Do not pass a prefix cache flag. It is on by default in 0.4.4 and the docs do not advertise it prominently.

- Verify the cache is hitting. Send the same 10K token prefix twice. Second call should return a TTFT under 0.3s.

You lose continuous batching and paged KV. Neither matters at single-user concurrency. What you keep is prefix caching that works, chunked prefill that respects the flag, and stability that survives a real 67K token conversation.

In my experience, this is the configuration nobody writes about because it looks boring on paper. It is the boring one that works.

The SSM Cache Gotcha Nobody Documented

Qwen3.5 uses a hybrid Gated Delta Network architecture with Mamba style SSM layers alongside attention. The SSM recurrent state cannot be trimmed or rolled back the way a standard KV cache can, and most MLX serving stacks still get this wrong.

Here is why this matters. When you trim a KV cache back to a prefix boundary (which is what prefix caching does), you are assuming the cache is a stack of per-token activations that can be cleanly sliced. That assumption is false for SSM layers.

The SSM companion state is a recurrent summary of the entire sequence processed so far. You cannot slice it at token 9,011 the way you slice a standard KV cache at token 9,011. If a serving stack naively tries, you either get corruption (silent) or a cache miss (loud and slow).

The MLX team has a tracking issue on this. mlx-lm GitHub issue 980 documents the problem as broken for all hybrid architecture models, including Qwen3.5 and the full Gated Delta family. It has been open for weeks.

mlx-vlm 0.4.4 appears to handle the SSM companion state correctly. I verified this empirically with consistent cache hit ratios across 200 consecutive requests. If you are on a different serving stack, run the verification below before you trust your numbers.

Here is the exact verification prompt I used.

Request A: send 672-char prefix, generate 50 tokens.

Request B: send same 672-char prefix plus a 2-word suffix, generate 50 tokens.

Request C: change the prefix by 1 character, generate 50 tokens.

Expected cache behavior:

- A is cold (first request, all miss)

- B is warm (shared prefix, cache hit on first 672 chars)

- C is cold (prefix boundary moved, cache miss)

TTFT should be: A ~0.5s, B ~0.2s, C ~0.5s.If B comes back at cold speed, your stack is not caching. If B comes back inconsistent across runs, your stack has SSM cache corruption. Both are deal breakers for an agent.

The Benchmark and What Changed

On a 672-character test prompt, warm requests hit 0.19s median TTFT versus 0.51s cold. That is a 2.6x speedup on a tiny prompt, and the absolute improvement scales linearly with prefix size up to the 12K token real agent prefix.

I ran 11 requests on a cold cache and 6 more with a known cache hit. The distributions do not overlap, which is the right signal for a real improvement rather than a measurement artifact.

| Condition | n | Median TTFT | Min | Max |

|---|---|---|---|---|

| Warm (shared prefix, cache hit) | 6 | 0.19s | 0.18s | 0.31s |

| Cold (unique prefix, cache miss) | 5 | 0.51s | 0.50s | 0.88s |

The real agent benefit is much larger. At a 12,000 token prefix, the cold TTFT is 22 seconds and the warm TTFT is under 2 seconds. That is the number that makes a local agent feel responsive instead of broken.

What I expected that did not happen: I thought paged KV cache would be important for real workloads. It is not, at single-user concurrency. What I did not expect: the MLLM autodetection in vMLX cost me a full day because the failure mode was silent rather than loud.

If you are building a local agent stack right now, this is the path I would walk someone through. Start with mlx-vlm 0.4.4, verify cache behavior with the three-request pattern above, and only graduate to vMLX if you are running multi-user inference.

For a deeper read on why local stacks are winning adoption against cloud APIs, individuals are beating enterprise AI on exactly this speed-of-iteration curve.

If you want the broader context on when it is worth running local at all versus paying cloud rates, cut AI agent API costs covers the routing logic that makes local worth the engineering.

Frequently Asked Questions

Does prefix caching work on smaller Macs or just Mac Studios?

Yes, mlx-vlm 0.4.4 prefix caching works on any Apple Silicon Mac with enough unified memory to fit the model. The TTFT improvement is proportional to prefix size, so smaller models with smaller prefixes see smaller absolute speedups.

Why not just use Ollama?

Ollama uses llama.cpp under the hood and has prefix caching via the --slots and --cache-reuse flags, but it handles hybrid SSM architectures less reliably than mlx-vlm for Qwen3.5 and similar models. If you are on a non-SSM model like Llama 3.1, Ollama is simpler.

What if my agent uses different system prompts per request?

Prefix caching only helps if the leading tokens match. If every request has a different prompt, the cache never hits. The fix is to move per-request context to the end of the prompt and keep the system prompt plus tool definitions as a stable shared prefix.

How do I check whether prefix caching is hitting?

Most MLX servers log cache statistics per request. If yours does not, run the three-request verification pattern (cold, warm, cold-again) and compare TTFT. A 2x or better difference between warm and cold means caching is working.

Is paged KV cache worth the stability cost at all?

For single-user local agents, no. Paged KV and continuous batching are server-side features that matter when you are serving many concurrent users. At one user, they add operational complexity without a latency benefit.

What breaks if the SSM cache handling is wrong?

Either silent output corruption (the model generates plausible but wrong text because the recurrent state is stale) or loud cache misses (every request is cold despite appearing to cache). The verification pattern in the article catches both.