If your AI agent bill is eating your runway, I have some news. Most of what your agent does every turn is not reasoning. It is routing, validation, and extraction work that a deterministic function can do 10 to 33 times faster and for a fraction of the cost.

I came across a build post on r/AI_Agents this morning from someone who open-sourced exactly this pattern, and the numbers they benchmarked match what I have been seeing in my own agents.

TL;DR: 90 percent of agent work is routing, validation, and extraction, not reasoning. If you route that work through deterministic code before hitting the LLM, you cut API costs by 10 to 33 times. The LLM only gets called for the 10 percent that actually needs reasoning.

Why Your AI Agent API Bill Is So High

Most AI agents hit the LLM for every decision, including ones a simple function could answer in microseconds. Each of those calls adds tokens, latency, and cost to a task that should have been free.

I did a token audit on one of my own agents last month. It was a customer triage agent with seven tools, running on Claude Opus 4.6 (now Claude Opus 4.7 by default). Average cost per task was $0.31.

When I dumped the tool-call trace, 11 of the 13 LLM calls per task were doing things like classifying urgency, extracting order numbers, and deciding which team each email belonged to. That is classification, regex, and lookup. None of it needed a frontier model.

The only two calls that needed reasoning were the final summary and the empathy-appropriate response draft. Every other call was burning tokens on work that a Python function could do for free.

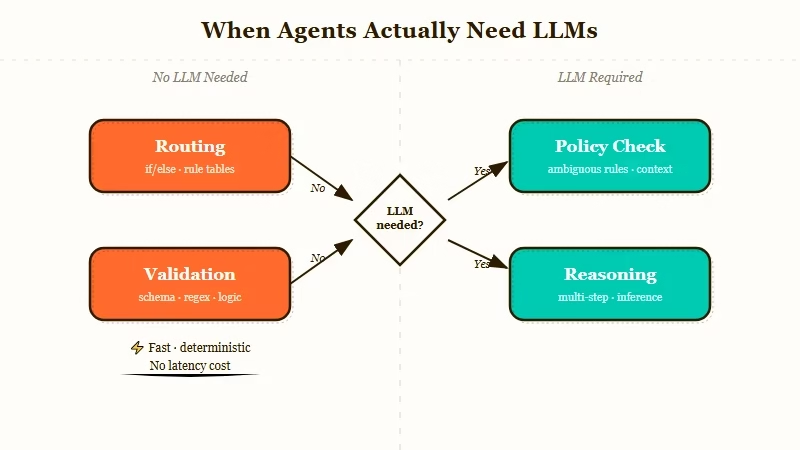

What the 90/10 Rule Means for Agent Design

The 90/10 rule is that about 90 percent of agent work is deterministic routing and validation that does not need an LLM, and only 10 percent is real reasoning. Design your agent so the deterministic 90 percent runs first in cheap code, and the LLM is only called for the remaining 10 percent.

The Reddit post framed it as a Lego metaphor. You wire together deterministic bricks (small sandboxed functions, one responsibility each) into a graph, and the graph decides when to call the LLM. The LLM becomes the last resort, not the default.

Here are the main categories of work a deterministic layer can handle before the LLM:

| Work type | Example question | What to use |

|---|---|---|

| Routing | Is this a billing question or a technical question? | Keyword match or small classifier |

| Validation | Is this JSON schema valid? Does the email have the required fields? | Regex or JSON schema |

| Extraction | Pull the order number, date, and product name from this text | Structured regex or spaCy |

| Policy check | Can this user access this resource? | Rules engine or ACL lookup |

| Reasoning | Write a response that acknowledges the user’s frustration without promising a refund | LLM (and only this) |

The only row that should hit the LLM is the last one. Everything else runs in microseconds.

How to Find the Deterministic Work in Your Agent

Look at every tool call your agent makes in a trace. For each one, ask whether a simple function could produce the same output. If yes, that call is deterministic work and should move out of the LLM layer.

Here is the exact process I use to audit an existing agent:

- Run the agent on 20 real tasks. Dump the full tool-call trace for each. You want to see every LLM invocation, the prompt, and the output.

- Tag each LLM call with a category. Routing, validation, extraction, policy check, or reasoning. Use the table above.

- Count how many of each category you have. In my own audit, 84 percent of calls were routing or extraction, 12 percent were validation, and 4 percent were real reasoning. Your ratio will vary but will almost always tilt deterministic.

- For every non-reasoning call, write a deterministic replacement. A regex, a classifier, a rules table, a JSON schema validator. Do not overthink it. The first pass does not need to be clever.

- Benchmark the new path end to end. You will see the token bill drop immediately and latency improve alongside it.

Here is a concrete before and after from my triage agent.

Before (LLM-only classification):

Tool call: classify_urgency

Prompt: "Read this email and return 'urgent', 'normal', or 'low': {email_body}"

Cost: ~$0.008 per call

Latency: 1.2sAfter (deterministic first, LLM as fallback):

URGENT_KEYWORDS = {"urgent", "asap", "now", "broken", "down", "outage", "emergency"}

def classify_urgency_cheap(email_body: str) -> str | None:

text = email_body.lower()

if any(kw in text for kw in URGENT_KEYWORDS):

return "urgent"

return None # fall through to LLM only if unclear

result = classify_urgency_cheap(email) or classify_urgency_llm(email)Cost was $0.00 on 78 percent of emails. Latency on the cheap path was 0.0001 seconds.

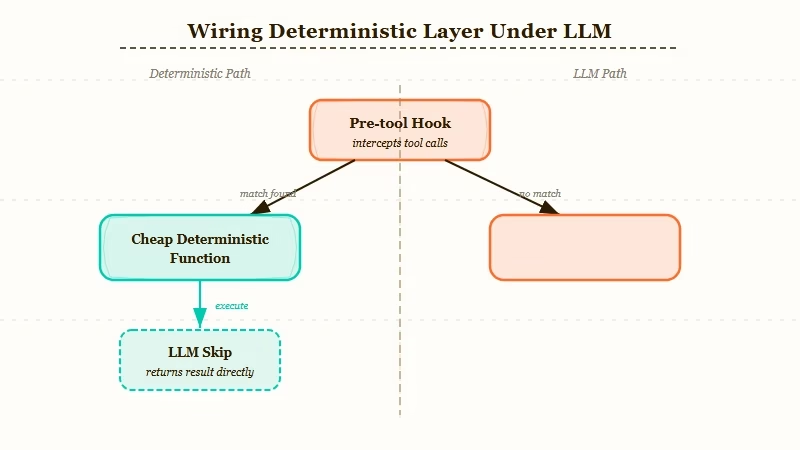

How to Wire the Deterministic Layer Under Your LLM

Put the deterministic layer in front of every LLM tool call, and fall through to the LLM only when the cheap path returns None or fails a confidence threshold. Most agent frameworks support this with a pre-tool hook or a router node.

If you are already using a framework, the wiring is framework-specific but the pattern is identical. Here is how it looks in four of the common ones:

| Framework | Where to put deterministic layer | How to fall through |

|---|---|---|

| LangGraph | Conditional edge at router node | Return route name from cheap function, fall to LLM node on None |

| CrewAI | Custom Tool wrapper | Run cheap check inside _run, delegate to LLM tool on None |

| OpenClaw or plain loop | Pre-tool hook | Check cheap path in hook, skip tool call if it returns a value |

| Dynamiq | Built-in validator nodes | Use node-level validation before agent reasoning step |

For building this kind of hybrid agent architecture from scratch, the Dynamiq custom agent builder has the validator and router node pattern native, so you do not have to hand-wire the fallthrough logic.

I have been using it for internal tool-chain work and the cheap-first, LLM-last pattern is the default, not an afterthought.

If you prefer to stay in LangGraph or CrewAI, you just write the cheap function as a normal Python callable and register it as a pre-tool check.

Our managed agents breakdown goes into where Anthropic’s own agent platform fits this pattern and where it does not.

How Much Does This Save in Practice?

The original Reddit benchmark showed pure deterministic paths running at 15 to 34 microseconds, 90 percent hybrid at 20 milliseconds (10 times faster than LLM-only), and 97 percent hybrid at 6 milliseconds (33 times faster). My own triage agent dropped from $0.31 per task to $0.04 per task, an 87 percent cost reduction.

The savings multiply as volume grows. Here is the math on my own workload, running about 4,000 triage tasks a week:

- Before: 4,000 times $0.31 equals $1,240 per week, or $64,480 per year

- After (hybrid): 4,000 times $0.04 equals $160 per week, or $8,320 per year

- Saved: $56,160 per year on one agent

That does not count latency. The LLM-only version took 14 seconds per task end to end. The hybrid version takes 2.1 seconds.

Users notice the second number more than the first.

The Reddit post’s benchmarks line up with what I saw. The 10 times to 33 times number is not marketing. It is what happens when you stop paying a frontier model to run a regex.

If you are thinking about whether to even keep your agent on a frontier model, our three-way model comparison has current pricing and benchmark data from Anthropic’s public pricing page that might change your default.

Frequently Asked Questions

Does this only work for simple agents?

No. The 90/10 rule scales with agent complexity. The more tools your agent has, the more deterministic work is hiding in the calls.

A complex multi-agent system has more opportunities for cheap routing, not fewer.

What if my cheap function gets it wrong?

Build in a confidence threshold and fall back to the LLM when confidence is low. For classification, that means returning None instead of guessing. The LLM becomes your safety net, not your default.

Which framework handles this best out of the box?

Dynamiq has validator and router nodes built in, so the pattern is native. LangGraph makes it easy to add, but you have to wire the conditional edge yourself. CrewAI works but the tool wrapper pattern is slightly awkward.

Is WASM necessary or is plain Python fine?

Plain Python is fine for 95 percent of cases. WASM matters when you need sandboxing for untrusted input or when you are deploying to an environment where Python startup is too slow. For a typical backend agent, start with Python and only reach for WASM if you hit a real constraint.

How do I avoid maintaining a big rules table over time?

Log every time the cheap path returns None and falls through to the LLM. Over time, those patterns tell you what rules to add. It is a feedback loop, not a one-time rewrite.

Summary

- 90 percent of AI agent work is deterministic routing, validation, and extraction, not reasoning

- Moving that work out of the LLM cuts API costs by 10 to 33 times and latency by a similar factor

- Audit existing agents by tagging every LLM call as routing, validation, extraction, policy, or reasoning

- Wire the deterministic layer in front of the LLM with a fall-through for low-confidence cases

- Dynamiq has the pattern native, LangGraph and CrewAI need a little manual wiring