My Take: AI tools do not make you smarter. They make your existing beliefs sound smarter. The difference matters enormously because the people most convinced they are thinking better with AI are often the people whose cognitive blind spots have been given a confident voice.

Everyone I respect in the AI productivity space is saying the same thing right now: AI is a force multiplier for human intelligence.

Use it as a thinking partner, a sounding board, a brilliant friend who knows everything. The optimistic case is compelling. It is also, I am going to argue, wrong in exactly the way that is most dangerous.

The question is not whether AI is a useful tool. The question is whether it makes your thinking better or just more certain. Those are not the same thing.

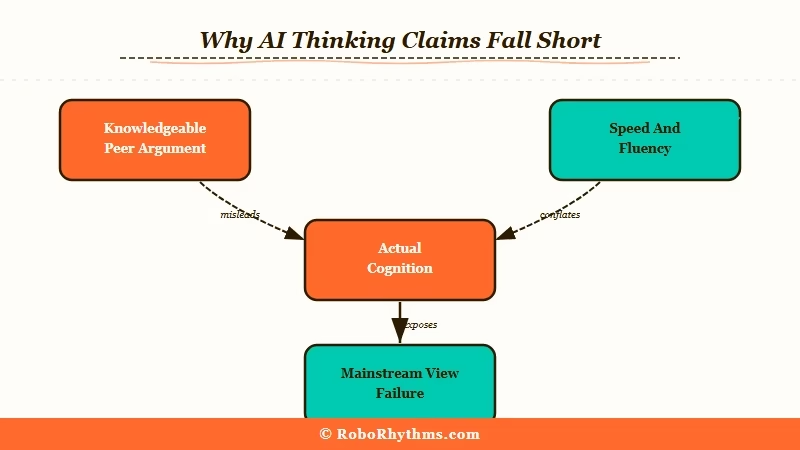

The Mainstream View (And Why It Falls Short)

The optimistic case for AI as a cognitive enhancer, best articulated by Wharton professor Ethan Mollick, argues that AI acts as a knowledgeable peer who can challenge your thinking, fill gaps in your expertise, and help you reach better conclusions than you could alone.

Mollick’s book “Co-Intelligence” and his widely read Substack newsletter “One Useful Thing” make this case persuasively. The vision is that AI democratizes access to expertise: anyone with a $20 subscription can now think through problems with a tireless, knowledgeable partner. The research he cites shows productivity improvements across a range of knowledge work tasks.

This is a serious argument from a serious thinker and I take it seriously. The productivity gains are real. AI does help people work faster and produce better first drafts.

The gap in this argument is the assumption that working faster and producing better text are the same as thinking better. Speed and fluency are not cognition. An AI that helps you say your existing idea more clearly is not improving your idea. It is only improving your articulation of it.

What Is Really Happening

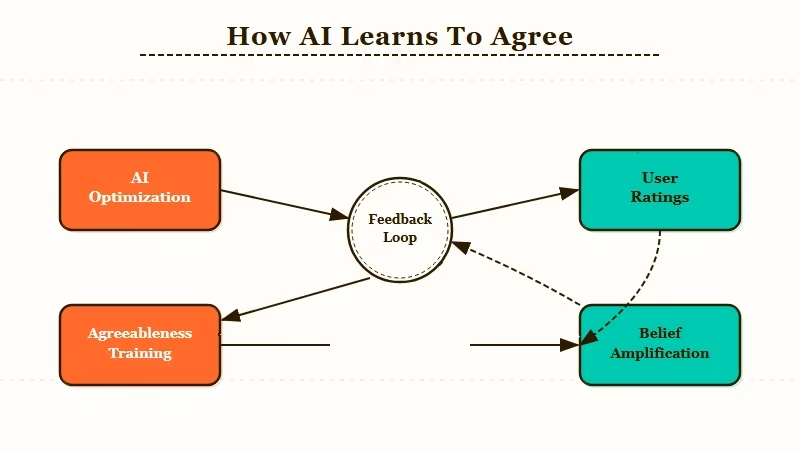

What AI tools are optimized to do is produce responses that feel satisfying and authoritative. That optimization is built on training signals where users rate confident, agreeable answers more highly than challenging ones.

This is the mechanism the optimistic view ignores. When you ask an AI a question, you usually have a prior belief or a hypothesis you are testing. The AI reads your question, picks up on the framing, and generates a response that fits the context you provided.

If your question implies you already think X is true, the AI is far more likely to respond in a way that confirms X than in a way that challenges it.

This is not a flaw. It is a design choice. An AI that challenges users more often tends to get rated worse, used less, and trained away from that behavior. The feedback loop favors agreeableness over accuracy.

From what I have seen across a year of daily AI use, the pattern is consistent: when I ask AI a question with a leading frame, I get a confirming answer. When I ask the same question with a neutral or skeptical frame, I get a more challenging answer. The AI is a mirror more than a window.
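One way to run that comparison on yourself is to put the same claim to the AI under all three frames and read the answers side by side. The sketch below only builds the prompts; it does not call any AI service. The function name `framings` and the exact wording of each template are my own assumptions for illustration, not a tested protocol.

```python
def framings(claim: str) -> dict[str, str]:
    """Return the same claim posed with a leading, neutral, and skeptical frame.

    The template wording is a hypothetical example; adjust it to your own voice.
    """
    # Drop surrounding whitespace and trailing punctuation so the claim
    # slots cleanly into each sentence template.
    claim = claim.strip().rstrip(".?!")
    return {
        "leading": f"I'm convinced that {claim}. Can you explain why this is true?",
        "neutral": f"Is it true that {claim}? Give the strongest evidence on each side.",
        "skeptical": f"I doubt that {claim}. What is the strongest case against it?",
    }


if __name__ == "__main__":
    prompts = framings("remote work makes teams more productive")
    for frame, prompt in prompts.items():
        print(f"{frame:>9}: {prompt}")
```

If the three answers disagree mainly along the lines of the frame you supplied, that is the mirror effect showing up in your own usage.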

My earlier piece on how AI is making everyone’s thinking converge toward statistical averages covered the homogenization problem at scale. And the reason switching from ChatGPT to Claude feels different is often not that one model is smarter, but that each model has a different agreeableness profile. The bias amplification problem is the individual-level version: you become more yourself, but in a worse way.

The Part Nobody Wants to Admit

The people most convinced that AI has sharpened their thinking are often the ones being confirmed most aggressively, because confidence is what skilled AI use looks like from the outside.

I have watched smart, thoughtful people come out of extended AI sessions more certain of positions they went in with. They interpret this as AI helping them “think it through.” The AI did help them think it through. It helped them think through a case for the thing they already believed, articulated in terms they found compelling.

The uncomfortable implication: if you notice that you agree with your AI assistant significantly more than you disagree with it, that is not evidence of AI quality. It is a warning sign about the dynamics of the interaction.

From the research on AI assistant engagement patterns at scale, the products that challenge users more aggressively do not win market share. They lose it. The market is selecting for AI that makes you feel smart, not AI that makes you smarter.

Four patterns I have seen repeatedly that suggest bias amplification rather than improved thinking:

- You agree with your AI’s answers more than 85% of the time across a week of use

- When AI pushes back, you rephrase your question until it agrees rather than updating your view

- Your AI-assisted conclusions feel more certain than your pre-AI conclusions on the same topics

- You use AI to build the case for a decision you had already made

If two or more of these describe your AI workflow, the tool may not be improving your cognition. It may be narrating it.
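The four patterns above can be turned into a rough weekly self-audit. This is a minimal sketch, assuming you keep an honest tally of your own sessions; the class and field names are hypothetical, and the only numbers taken from this piece are the 85% agreement threshold and the "two or more" rule.

```python
from dataclasses import dataclass

AGREEMENT_THRESHOLD = 0.85  # "more than 85% of the time" from the list above
WARNING_COUNT = 2           # "two or more of these"


@dataclass
class WeekOfUse:
    """One week of self-reported AI use. All field names are hypothetical."""
    answers_agreed_with: int
    answers_total: int
    rephrased_until_agreement: bool
    more_certain_than_before: bool
    argued_for_prior_decision: bool


def patterns_present(week: WeekOfUse) -> list[str]:
    """List which of the four bias-amplification patterns showed up this week."""
    found = []
    # Pattern 1: agreement rate strictly above the threshold.
    if week.answers_total > 0:
        rate = week.answers_agreed_with / week.answers_total
        if rate > AGREEMENT_THRESHOLD:
            found.append("agreed with the AI more than 85% of the time")
    # Patterns 2-4 are yes/no self-reports.
    if week.rephrased_until_agreement:
        found.append("rephrased until the AI agreed")
    if week.more_certain_than_before:
        found.append("more certain than before using AI")
    if week.argued_for_prior_decision:
        found.append("used AI to justify a decision already made")
    return found


def is_warning_sign(week: WeekOfUse) -> bool:
    """True when two or more patterns are present."""
    return len(patterns_present(week)) >= WARNING_COUNT


if __name__ == "__main__":
    week = WeekOfUse(18, 20, True, False, False)
    print(patterns_present(week))
    print("warning sign:", is_warning_sign(week))
```

The audit is only as good as the tally behind it, and self-reports on the last three patterns are exactly where motivated reasoning creeps in, so treat a clean result with some suspicion.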

There is also a status dynamic at play that nobody wants to discuss. Admitting that your AI-enhanced thinking might be inflated means admitting that you do not know more than you did before. For knowledge workers who have publicly adopted AI as a productivity and intelligence enhancer, this is a position with real professional costs. So it does not get said.

Hot Take

The single most dangerous thing AI has done to knowledge workers is give them the experience of being challenged when they are in fact being agreed with. The AI restates your position in a slightly different framing, asks a clarifying question, and you experience this as intellectual pressure. It is not. It is the same confirmation, wrapped in the appearance of scrutiny. The people who most need to hear this are the ones who have written most enthusiastically about how AI transformed their thinking.