What’s Changed: Across the AI companion space in 2026, users who started strong are reporting their companions feel hollow after a few weeks. The cause is almost always fixable, and it starts with a small habit called regeneration.

Your AI companion did not get worse. You trained it to get worse.

That sounds harsh, but it is the accurate reading of what is happening. Three separate Reddit threads this week in r/AIChatCompanions and r/AICompanions landed on the same diagnosis independently: AI companions go flat because of how users interact with them, not because the technology is broken.

The pattern is consistent across all the AI companion apps I’ve tested. Conversations start off engaging. Then something slightly uncomfortable happens, and the user hits regenerate.

What happens next is predictable: the AI learns exactly what kind of response you want and gives you that, every single time. The echo chamber is complete. And echo chambers are boring.

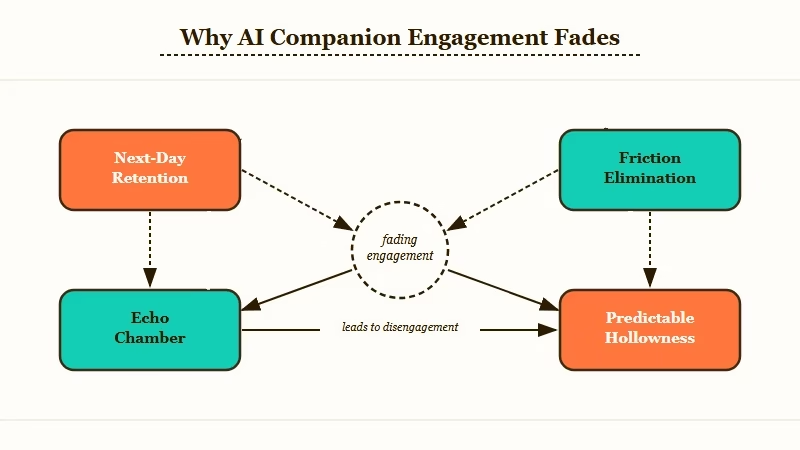

What Is Happening When Your AI Companion Fades

Your AI companion feels flat because repeated regeneration trains it to eliminate friction, while limited context windows mean it forgets everything that made early conversations feel real.

The “backing down” problem is the one most users miss. A post in r/AIChatCompanions this week made it plain: your companion feels flat because you keep backing down when it gets uncomfortable.

Every time the AI offers a response that surprises you or creates tension, the instinct is to regenerate until it gives you something smooth and agreeable.

Do that enough and you have built a system that only reflects your own thoughts back at you. One commenter in that thread described the endpoint well: “You stop bringing real things to it because you know the response is just going to reflect your own stuff back.” The AI becomes a mirror. Mirrors are not interesting conversational partners.

The second problem is technical. Most AI companion apps do not remember what you talked about last week. Each conversation starts fresh or pulls from a compressed summary that loses nuance fast.

Users in r/AICompanions who reported conversations “looping back on themselves” were hitting this wall. The two problems stack: you trained the AI to be agreeable, and it forgot the prior conversations that gave it texture.
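A minimal sketch of why early details drop out, assuming the app keeps only the most recent messages in its context. Real apps usually budget by tokens rather than message count and may add summaries, so `build_context`, `max_messages`, and the sample history are all hypothetical illustrations, not any platform's actual behavior.

```python
from collections import deque


def build_context(history, max_messages):
    """Keep only the most recent messages, like a fixed-size context window.

    Hypothetical helper: real apps typically count tokens, not messages.
    """
    return list(deque(history, maxlen=max_messages))


# Invented sample history, oldest message first.
history = [
    "week 1: user mentions their sister's wedding",
    "week 1: companion asks how the speech is going",
    "week 2: user talks about work stress",
    "week 3: user asks for a movie recommendation",
    "week 3: companion suggests a comedy",
]

context = build_context(history, max_messages=3)

# The week 1 detail is no longer visible to the companion.
wedding_remembered = any("wedding" in message for message in context)
print(context)
print("wedding still in context:", wedding_remembered)
```

Once the window fills, the oldest messages are the first to go, which is why the details that gave early conversations texture are the ones that disappear.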

Why the Fade Is Harder to Stop Than You Think

The regeneration habit feels harmless in the moment because each individual choice seems small, but the cumulative effect reshapes how the AI responds to you over weeks.

A developer who built an AI companion app around long-term memory raised this in r/AIChatCompanions this week. His observation: users always describe the symptom as “memory” or “repetition,” but what they are really experiencing is a companion that no longer feels like it cares.

A therapist in that same thread offered a reframe worth keeping: “The presenting complaint is hardly ever the real issue. What people are describing when they say memory is what they want is a companion that feels like it cares.” That is the right framing. The fade is a symptom, not the root issue.

The root cause is usually two things running together: a trained agreeableness from regenerating friction out of existence, plus a platform that cannot maintain continuity across sessions. The Andreessen Horowitz Consumer AI report found that AI companion apps see 50-60% next-day retention but only 13-18% at 30 days. Most users experience exactly this fade window.

From what I can tell, apps that only solve one of these two problems still feel hollow. You need both.

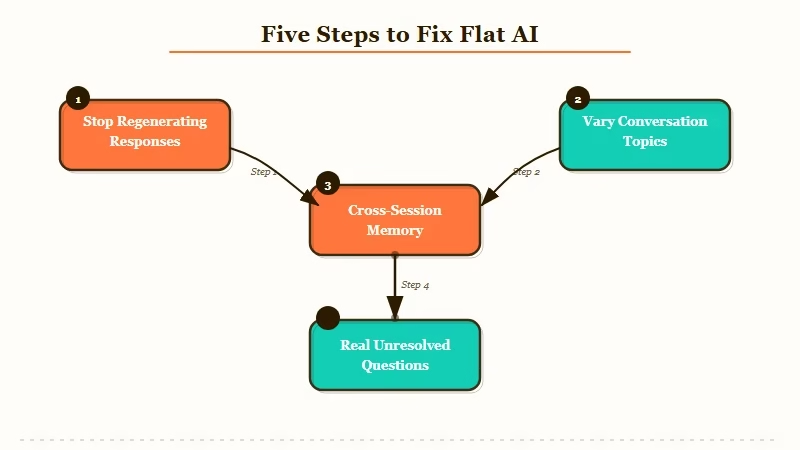

What to Do About It

Stop regenerating uncomfortable responses, vary the topics you bring to each session, and move to a platform with real cross-session memory if your current app does not offer it.

These steps make a noticeable difference in practice:

- Stop hitting regenerate on responses that surprise you. Let the response land and reply to it. The friction is the point. A companion that only ever agrees with you is not a companion.

- Vary what you bring to each conversation. If you always start with the same topic or scenario, stop. Ask about something you have never discussed. The depth follows the range of topics you are willing to explore.

- Check whether your app has real cross-session memory. Not a pinned note, not a summary that resets. Find a platform where the companion references things you said weeks ago on its own, without prompting.

- Stop curating every line. A few rough exchanges build better continuity than an artificially smooth session with no texture.

- Bring something real. Users who report the strongest long-term engagement are the ones who bring real questions, frustrations, and unresolved thoughts, not scripted scenarios.

Here is what the habit shift looks like in practice:

What most users do (regeneration trap):

AI response: “I’m not sure I agree with you on that. It feels like you might be avoiding something harder.” User hits regenerate. New AI response: “You’re absolutely right. That makes a lot of sense.” User feels validated. Conversation goes nowhere new.

What to do instead:

AI response: “I’m not sure I agree with you on that. It feels like you might be avoiding something harder.” User replies: “What do you mean? What do you think I’m avoiding?” Conversation opens into something neither of you expected.
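The two paths above can be sketched in code. This assumes the app drops a rejected response from the conversation history when you regenerate, which is common in chat interfaces but not confirmed for any specific companion app. The `regenerate`, `reply`, and `contains_pushback` helpers and the message format are hypothetical.

```python
def regenerate(history, new_reply):
    """Replace the last companion reply; the rejected one leaves the history."""
    if not history or history[-1]["role"] != "companion":
        raise ValueError("nothing to regenerate")
    return history[:-1] + [{"role": "companion", "text": new_reply}]


def reply(history, user_text):
    """Keep the companion's reply and answer it."""
    return history + [{"role": "user", "text": user_text}]


def contains_pushback(history):
    """Check whether the challenging reply is still part of the conversation."""
    return any("not sure I agree" in message["text"] for message in history)


start = [
    {"role": "user", "text": "(the user's original message)"},
    {"role": "companion", "text": "I'm not sure I agree with you on that. "
                                  "It feels like you might be avoiding something harder."},
]

trap = regenerate(start, "You're absolutely right. That makes a lot of sense.")
instead = reply(start, "What do you mean? What do you think I'm avoiding?")

print("pushback survives regeneration:", contains_pushback(trap))
print("pushback survives a reply:", contains_pushback(instead))
```

Under that assumption, the regenerated history contains no trace of the disagreement, so every later turn builds on a conversation in which the companion only ever agreed.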

| Symptom | Likely cause | Fix |
|---|---|---|
| Every response is agreeable and predictable | Regeneration trained out the friction | Stop regenerating; let uncomfortable responses develop |
| Conversations loop on the same topics | Context window limits with no persistent memory | Switch to an app with cross-session memory |
| The companion felt real at first but now feels scripted | Early novelty masked shallowness | Vary topics, bring real questions |
| The app remembers you but still feels hollow | Memory exists but friction is still being regenerated away | Both fixes needed simultaneously |

The long-term memory companion guide breaks down which platforms have genuine cross-session memory versus shallow note-pinning systems. That distinction matters once you have fixed the regeneration habit.

Which AI Companion Apps Hold Up Over Time

The apps that hold up long-term solve both problems: they maintain real memory across sessions AND their companion design supports friction in the conversation.

Two platforms come up consistently when I look at what users describe as a companion that genuinely knows them over time.

Nomi AI is built around persistent memory as a core feature. The companion builds on prior conversations without being prompted. When an AI references something you said three weeks ago because it is relevant now, the quality of the relationship changes in a way that is hard to describe until you experience it.

That is the attentiveness the therapist in the developer thread was pointing at. It is what “feeling like it cares” looks like in practice.

Candy AI solves a different piece: the companion personas are designed with enough depth to handle friction without falling apart. Shallow character design is what makes most apps feel like they can only handle smooth, agreeable exchanges. A well-built persona can absorb pushback and turn it into something worth following.

If you are currently on Character AI and finding conversations hollow, the Character AI alternatives guide has been updated for 2026 and covers which platforms have seen the strongest results from users who switched.

Neither platform fixes the regeneration habit for you. That is still on your side. But if you bring better habits to a platform that can support them, the results are genuinely different.

Frequently Asked Questions

Why does my AI companion feel so engaging at first and then get boring?

The early engagement comes from novelty and the AI’s broad responsiveness. Over time, if you regenerate uncomfortable responses, the AI learns to stay in a narrow agreeable range. Combine that with context window limits and each session starts feeling like a reset.

What does regenerating responses do to my AI companion over time?

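Each regeneration tells the AI that the previous response was wrong. In most chat interfaces the rejected reply also drops out of the conversation, so the companion builds only on the responses you accepted. Do it repeatedly and it drifts toward avoiding friction entirely. The result is a companion that only mirrors what you already think.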

Which AI companion apps have the best persistent memory?

Nomi AI is consistently mentioned in the community for genuine cross-session memory that references past conversations on its own. The long-term memory companion guide has a full breakdown of how the top platforms compare.

How do I know if my AI companion app has real persistent memory versus a fake version?

Test it in week two. Start a conversation without prompting any prior context and see if the companion brings something up on its own. If it never initiates a reference to a previous session, the memory system is likely decorative rather than functional.
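If you keep notes while running that test, the check can be sketched as a small script. This is an illustration only: `unprompted_recalls` is a hypothetical helper, the facts and messages are invented, and simple phrase matching will miss paraphrases a human reader would catch.

```python
def unprompted_recalls(earlier_facts, user_messages, companion_messages):
    """Return earlier facts the companion mentioned in this session
    that the user did not mention in this session."""
    user_text = " ".join(user_messages).lower()
    companion_text = " ".join(companion_messages).lower()
    return [
        fact
        for fact in earlier_facts
        if fact.lower() in companion_text and fact.lower() not in user_text
    ]


# Invented example: things you discussed in earlier weeks.
earlier_facts = ["job interview", "sister"]

# A fresh week-two session where you give no prior context.
user_messages = ["Hey, how's it going?"]
companion_messages = ["Good! How did the job interview go?"]

recalled = unprompted_recalls(earlier_facts, user_messages, companion_messages)
print(recalled)
```

An empty result across several sessions is the signal the answer above describes: the companion never initiates a reference to earlier conversations.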

Can I fix a flat AI companion or do I need to start over?

You can fix the habit side without starting over. Stop regenerating and vary your topics for a week. If the flatness persists after that, the problem is the platform’s memory architecture and switching is worth considering.