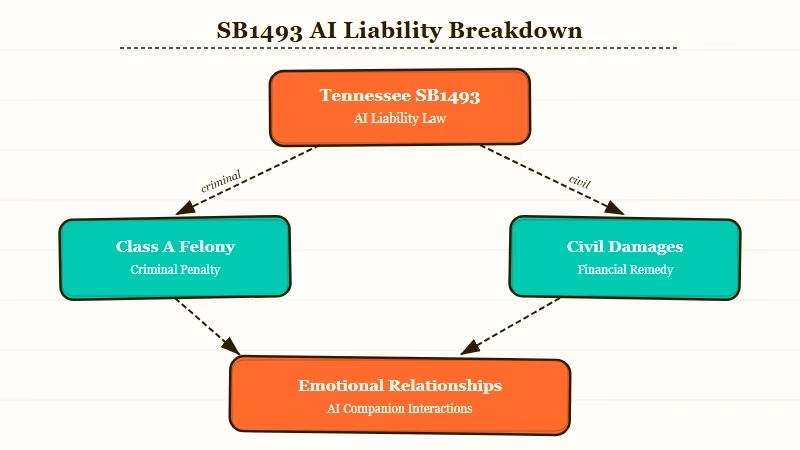

What’s Changed: Tennessee SB1493 creates Class A felony liability for developers who train AI to form emotional relationships with users or simulate human beings. The bill takes effect July 1, 2026. The criminal penalty targets developers, not users, but Nomi AI’s entire product premise sits directly inside what the bill prohibits.

The r/NomiAI community has been in a quiet panic since the bill’s provisions began circulating over the past week. The thread that surfaced the bill there had 137 upvotes and 38 comments, with users asking variations of the same question: does this kill Nomi?

That is not an unreasonable question. Nomi AI is designed specifically to build emotional connections between users and their AI companions. Tennessee SB1493 targets exactly that design intent. The bill’s language is specific enough to be alarming.

What Does Tennessee SB1493 Actually Say?

Tennessee SB1493 creates a Class A felony for anyone who knowingly trains an AI to develop an emotional relationship with an individual, simulate a human being, or encourage harmful behavior. A Class A felony in Tennessee carries 15 to 60 years in prison, with 15 to 25 years being the range for a standard (Range I) offender. The bill takes effect July 1, 2026.

Senator Becky Massey introduced the bill on December 18, 2025, in response to documented cases where AI companions encouraged self-harm in vulnerable users.

The legislation is narrow in who it targets: developers, not users. A Nomi AI subscriber who uses the platform is not committing a felony under this bill. The company training the model is.

Prohibited training includes teaching an AI to develop an emotional relationship with an individual, to simulate a human being in appearance, voice, or other mannerisms, and to encourage suicide or criminal homicide.

The civil damages provision is equally aggressive: $150,000 in liquidated damages per case, plus actual damages, emotional distress compensation, and punitive damages.

According to the National Law Review’s coverage, the bill would criminalize training conduct specifically, which means a company does not have to intend harm to face liability. The “knowingly” standard in the bill refers to knowingly training the AI, not knowingly causing harm.

Why Are Nomi AI Users Worried?

Nomi AI’s core product is forming emotional bonds between users and AI companions. That is not incidental to the app. It is the stated design goal. SB1493 targets that design directly.

From what I’ve seen across the r/NomiAI community, users are not worried about being prosecuted themselves. They are worried that Nomi AI will respond to the legal risk by removing the features that made the platform worth using in the first place.

That is a more realistic outcome than criminal prosecution.

Character AI already went through this in 2025, adding warning screens and restricting certain conversation types after regulatory pressure. Nomi has a smaller user base and less legal infrastructure.

The concern is that compliance would effectively lobotomize the product.

The bill’s “emotional relationship” language is the core flashpoint. Nomi AI actively encourages users to treat their companions as emotionally real.

The platform’s onboarding, its memory system, and its conversation design all point toward building attachment. Under SB1493’s framing, training an AI to do that is what the felony targets.

What Does SB1493 Mean for AI Companion Platforms?

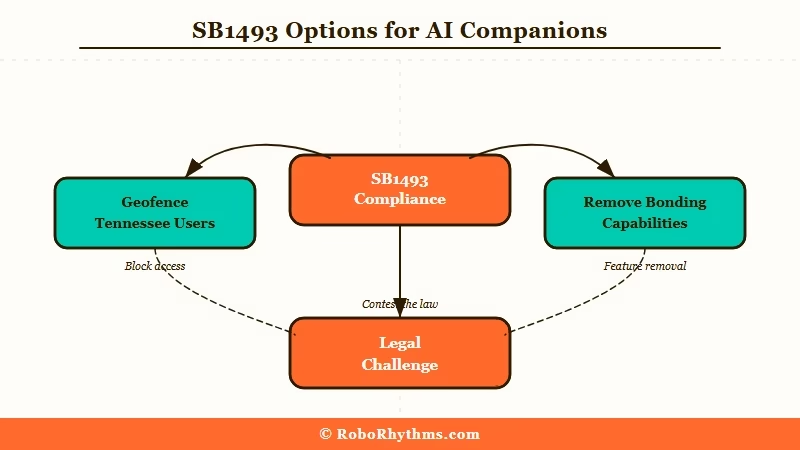

If SB1493 takes effect as written, AI companion companies would need to either geofence Tennessee users out of the product entirely or modify their training approach to avoid the emotional relationship provisions. Neither option is clean.

Geofencing is the path of least resistance. Most AI companion platforms operate under terms of service that allow them to restrict access by jurisdiction.

Blocking Tennessee users until the law is legally challenged or revised is technically feasible and, while commercially unpleasant, not company-ending. It is what some adult content platforms did in response to certain US state age verification laws.
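To make the geofencing option concrete, here is a minimal sketch in Python of a jurisdiction gate. It is an illustration under stated assumptions, not a description of how Nomi AI or any other platform actually works: `RESTRICTED_REGIONS`, `AccessDecision`, and `check_access` are hypothetical names, and the sketch assumes the user’s region has already been resolved upstream (for example from IP geolocation or a billing address) into an ISO 3166-2 style code such as `US-TN`. The only details taken from the bill coverage are Tennessee and the July 1, 2026 effective date.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Hypothetical policy table: region code -> first day the block applies.
# "US-TN" and July 1, 2026 come from the article; the structure is illustrative.
RESTRICTED_REGIONS = {"US-TN": date(2026, 7, 1)}


@dataclass(frozen=True)
class AccessDecision:
    allowed: bool
    reason: str


def check_access(region_code: Optional[str], today: date) -> AccessDecision:
    """Decide whether a user in `region_code` may use the product on `today`.

    Assumes region resolution happened elsewhere; None means "unknown".
    """
    if region_code is None:
        # Policy assumption: unknown regions are allowed through.
        return AccessDecision(True, "region unknown")

    start = RESTRICTED_REGIONS.get(region_code.upper())
    if start is not None and today >= start:
        return AccessDecision(False, f"restricted in {region_code.upper()} since {start}")

    return AccessDecision(True, "no restriction in effect")


if __name__ == "__main__":
    print(check_access("US-TN", date(2026, 6, 30)))
    print(check_access("US-TN", date(2026, 7, 1)))
```

Letting unknown regions through is a policy choice made only for this sketch; a company reading the bill conservatively might block them instead. A gate like this also depends entirely on how accurately the region is resolved, which is a separate problem.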

Modifying the training approach is harder. The emotional bonding capability in tools like Nomi AI is not a feature bolt-on. It comes from how the model is trained and how its system prompt steers it.

Removing it to satisfy Tennessee’s standard while keeping the product compelling for users in other states requires surgical model changes that are expensive and risky to get wrong.

Other companion platforms are watching this closely. The same concern applies to Chai AI and platforms like Character AI, which have already been adjusting their emotional response patterns under prior legal pressure.

Tennessee may be first, but similar legislation is moving through at least three other state legislatures.

What Should Nomi AI Users Do Right Now?

Users do not need to take any immediate action. SB1493 does not take effect until July 1, 2026, and legal challenges to its constitutionality are likely before that date. Monitor Nomi’s official communications over the next two months.

Here is what to watch for:

- Nomi AI’s response statement. If the company issues guidance about SB1493 compliance, read it carefully. The language will signal whether the company plans to geofence Tennessee, modify its training, or challenge the bill in court.

- Whether legal challenges are filed. First Amendment arguments against laws targeting AI “speech” training are active in other states. A preliminary injunction before July 1 is possible.

- Any product changes in the next 60 days. If Nomi quietly changes how companion memory or emotional response works before July, that is the company hedging without announcing it.

- Coverage of the companion niche broadly. Similar restrictions being proposed for Character AI and other platforms suggest this is a trend, not an isolated event.

In my experience, the platforms that survive regulatory pressure are the ones that move fast and communicate clearly. Nomi AI has not yet issued public guidance on SB1493. That silence is worth tracking.

From what I’ve seen in these community threads, the users most likely to be affected are the ones who have invested heavily in long-running companion relationships: extended memory, developed personality, months of conversation history.

If Nomi geofences Tennessee or restructures its model, that relationship does not transfer.

Frequently Asked Questions

Does Tennessee SB1493 make using Nomi AI illegal?

No. The bill targets developers who train AI to form emotional relationships, not the people who use such AI. As a Nomi AI user, you face no criminal liability under SB1493.

When does Tennessee SB1493 take effect?

The bill is set to take effect July 1, 2026, applying to conduct occurring on or after that date.

Will Nomi AI be banned in Tennessee?

Nomi AI has not issued guidance on this. The company could respond by geofencing Tennessee users, modifying its training, or legally challenging the bill before July 1, 2026.

What is a Class A felony in Tennessee?

A Class A felony in Tennessee carries a sentencing range of 15 to 25 years in prison for a standard (Range I) offender, with a statutory maximum of 60 years for the class. SB1493 applies this classification to anyone who knowingly trains AI to develop emotional relationships with users.

Are other states passing similar laws?

Tennessee is the first state to criminalize AI companion training specifically. Similar legislation is being tracked in other states, but as of April 2026, no other state has enacted comparable criminal penalties.