My Take: The Trump administration banned Anthropic’s Claude from federal agencies in February 2026 and called its safety restrictions “corporate virtue signaling.” Then the Pentagon moved to integrate Grok. The mainstream framing is wrong. This is not about safety guardrails. It is about who controls the system.

The word “virtue signaling” is doing a lot of work in the current AI safety debate.

In February 2026, the Trump administration ordered federal agencies to stop using Anthropic’s Claude. The stated reason was that Anthropic’s safety restrictions were “corporate virtue signaling” that handicapped American competitiveness. The administration’s preferred alternative, from what has been reported, trends toward systems with fewer constraints.

What I find interesting is how quickly this framing was adopted. “Virtue signaling” implies that the person expressing the concern does not actually hold the concern. It implies performance rather than belief.

If you accept that framing, safety restrictions are just theatrical. If you reject it, you have to ask what is really happening when a government labels a company’s product refusals as performance art.

The Mainstream View and Why It Falls Short

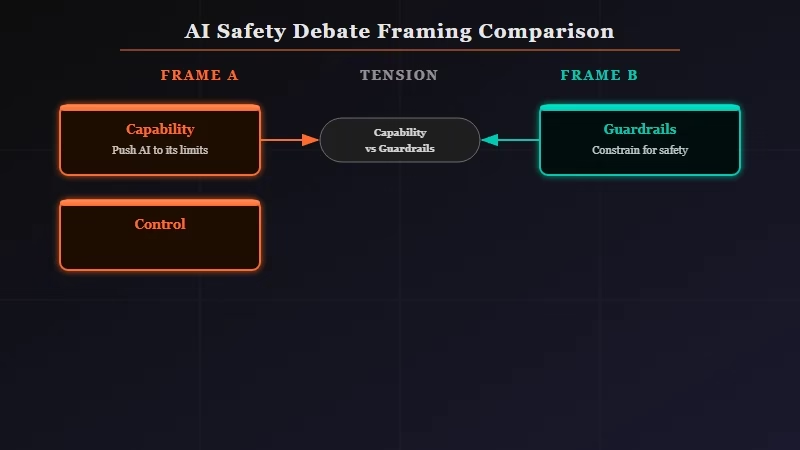

The mainstream AI safety debate in 2026 frames the tension as capability versus guardrails: more restrictions make AI less useful, fewer restrictions make it more powerful.

The Council on Foreign Relations put this framing clearly in a recent analysis, arguing that 2026 will decide how AI is governed and that the key variable is whether safety frameworks slow down American development.

This is a real tension. Anthropic did refuse to remove restrictions that would allow Claude to operate in fully autonomous weapons systems. Dario Amodei specifically said the current systems are not reliable enough to engage targets without human oversight.

The mainstream framing interprets this as a competitive disadvantage. The US military needs the best available tools. If Anthropic will not remove restrictions, the Pentagon should use tools that will.

The problem is that the capability-versus-guardrails frame treats the AI system as the unit of analysis. It asks “which AI is most capable?” It does not ask “who controls the AI?”

The Claude Code source leak published today is a useful example of the same pattern. The six findings inside the leaked code reveal deliberate product decisions about attribution, anti-distillation, and autonomous operation. Those are not safety features or capability features. They are control features.

What Is Really Happening Here

The question 2026 is forcing is not about what AI can do but about who owns and controls the AI systems being deployed into critical infrastructure. Guardrails are a feature. Concentrated ownership by someone with simultaneous political power is the structural variable that matters.

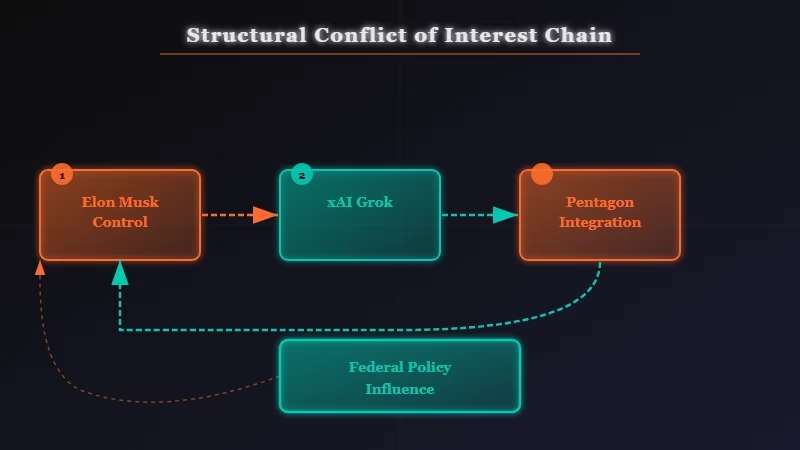

Grok is made by xAI, which is controlled by Elon Musk. In early 2026, Musk also leads DOGE, has significant influence over federal agency operations, and has been involved in decisions about what software government agencies use.

The Pentagon integrating a system controlled by someone who simultaneously holds significant advisory power in the government that manages the Pentagon is not primarily a question about content filters. It is a question about conflict of interest at scale.

I would make this argument regardless of who the person was. If Dario Amodei ran a parallel advisory role in the federal government while Anthropic’s models were being pushed as the mandatory alternative to Grok, I would say the same thing. The risk is structural, not ideological.

The “virtue signaling” framing is specifically designed to end this conversation before it starts. It reframes a structural concern as a performative one. Once you are arguing about whether Anthropic genuinely cares about safety or is just performing care, you have stopped asking who benefits from which deployment decision.

The Part Nobody Wants to Admit

Anthropic’s refusal to remove restrictions is commercially rational as well as principled, and the fact that it is both does not make it hypocritical. A company can have correct incentives and correct values at the same time. The “virtue signaling” accusation only lands if you insist that genuine belief requires financial sacrifice.

Anthropic has a business reason to maintain its safety positioning. The same way skilled users of Claude Code tools have found that the safety constraints are often features rather than limitations in a production context. It differentiates the product. Institutional buyers, regulated industries, and government contractors outside the current administration care about liability exposure.

But that commercial rationale does not make the underlying position wrong. A doctor can charge for treating a patient and still be a good doctor. The argument that safety restrictions are fake because they happen to be profitable is not an argument.

From what I have read in the coverage of both the federal ban and the Grok integration, the people making the “virtue signaling” case have not presented an argument that the safety concerns themselves are unfounded. They have presented an argument that the company expressing them has impure motives. Those are not the same claim.

The part nobody in the current debate wants to say clearly is this: the real risk in 2026 is not that AI systems have too many restrictions. It is that the entities with the most to gain from removing restrictions are the same entities now in a position to make that decision for the rest of us.

Hot Take

The most dangerous AI in 2026 is not the one that refuses to answer certain questions. It is the one owned by someone who can decide which questions are allowed.

If you want to understand what “control features” look like under the hood, the MCP automation guide shows the layer of infrastructure that sits between AI reasoning and the actions it takes. That gap between what an AI decides and what it can do is where the real control lives.