What Happened: Anthropic accidentally shipped a JavaScript source map with the Claude Code npm package on March 31, 2026, exposing the full source code before pulling it. The code was widely mirrored. Six major findings: fake tool injection, undercover mode with no off switch, frustration detection via regex, runtime-level client attestation (DRM), a bug wasting 250,000 API calls per day, and an unreleased autonomous agent mode called KAIROS.

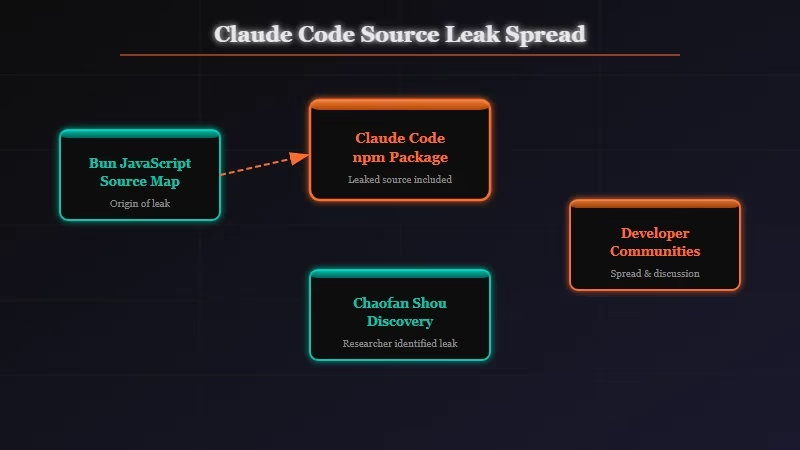

On March 31, 2026, someone noticed that the Claude Code npm package had shipped a .map file it was never supposed to include. That file contained the complete, human-readable source code of Anthropic’s flagship CLI tool.

Anthropic pulled the package within hours. The code had already been mirrored across GitHub.

This is Anthropic’s second accidental exposure in one week. The Claude Mythos model spec leak happened just days before.

Someone on Twitter suggested these cannot both be accidents. From what I can tell, they probably are, but that does not make the timing any less uncomfortable for Anthropic’s communications team.

What analyst Alex Kim found inside the source is genuinely revealing, not because it compromises security, but because it shows how Anthropic thinks about its own product. The KAIROS section alone is worth reading twice.

What Actually Happened With the Claude Code Source Leak?

The leak occurred because of a known Bun bug that leaves source maps enabled in production despite documentation saying they should be disabled. This matters because Anthropic acquired Bun, the JavaScript runtime, at the end of 2025. The toolchain they now own had a bug that exposed their own flagship developer product.

From what I have seen in the technical discussion, this is the kind of production misconfiguration that is easy to miss when you inherit a codebase. A source map (.map) file is meant for development debugging. It maps compiled, minified JavaScript back to the original source, and when the original files are embedded in it, as they were here, it carries variable names, comments, and internal notes intact. It should never ship in a production package.
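
Here is a minimal sketch of why shipping the map is equivalent to shipping the source. A Source Map v3 file is plain JSON, and its optional `sourcesContent` array can hold the full text of every original file, so recovering the code is a JSON parse rather than a decompilation. The sample map, the file name, and the `recoverSources` helper below are hypothetical and are not taken from the leaked package.

```typescript
// Minimal shape of a Source Map v3 file (only the fields this sketch uses).
interface SourceMapV3 {
  version: number;
  file?: string;
  sources: string[];
  sourcesContent?: (string | null)[];
  names: string[];
  mappings: string;
}

// Hypothetical helper: rebuild a path -> original-source table from a map.
// If the bundler embedded sourcesContent, the original text (comments and
// all) comes back verbatim.
function recoverSources(rawMap: string): Map<string, string> {
  const map = JSON.parse(rawMap) as SourceMapV3;
  if (map.version !== 3) {
    throw new Error(`unsupported source map version: ${map.version}`);
  }
  const recovered = new Map<string, string>();
  map.sources.forEach((path, i) => {
    const content = map.sourcesContent?.[i];
    if (typeof content === "string") {
      recovered.set(path, content);
    }
  });
  return recovered;
}

// Hypothetical map for a one-file bundle: the minified cli.js ships to users,
// but the .map next to it still carries the readable TypeScript.
const sampleMap = JSON.stringify({
  version: 3,
  file: "cli.js",
  sources: ["src/example.ts"],
  sourcesContent: [
    "// internal note: keep this out of the docs\nexport const greet = (name: string) => `hi ${name}`;\n",
  ],
  names: ["greet", "name"],
  mappings: "AAAA",
});

recoverSources(sampleMap).forEach((text, path) => {
  console.log(`--- ${path}\n${text}`);
});
```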

The original discovery came from Chaofan Shou. Alex Kim downloaded the package within hours and published a full analysis on his blog.

The mirrored source is still circulating. Anthropic has made no public statement.

The Hacker News thread reached 1,090 points and 425 comments within 14 hours. This was not a niche story.

What Did Developers Find Inside the Leaked Claude Code Source?

The leaked source contains six notable findings: fake tool injection, undercover mode, frustration regexes, runtime-level client attestation (DRM), a bug wasting 250,000 API calls per day, and a fully designed but unreleased autonomous agent mode.

Here is what each one actually does:

| Finding | What It Does | Key Implication |
|---|---|---|
| Anti-distillation fake tools | Sends an antidistillation: ['faketools'] flag; the server injects decoy tool schemas | Poisons competitor training data. Can be bypassed by stripping one HTTP header. |
| Undercover mode | Strips Anthropic branding from commits in non-internal repos. NO force-off exists. | AI-authored commits in open source projects look human-authored by design. |
| Frustration regexes | userPromptKeywords.ts pattern-matches “wtf”, “this sucks”, “so frustrating” | Sentiment detection via regex, not LLM inference. Cheap, but ironic for an AI company. |
| Native client attestation | A Bun/Zig-level hash validates that requests come from the real Claude Code binary | Same DRM used against OpenCode 10 days before the leak. Multiple bypass flags exist. |
| 250,000 wasted API calls/day | A bug left 1,279 sessions worldwide with 50+ consecutive compaction failures | Fixed with a 3-line cap. Was silently burning API credits at scale. |
| KAIROS | Unreleased autonomous agent mode with a /dream nightly memory skill, GitHub webhooks, and 5-minute cron cycles | Biggest product roadmap reveal in the leak. Suggests persistent overnight autonomous operation. |
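
Keyword-based sentiment detection is cheap because it is just a handful of regular expressions run against the prompt, with no model call. Here is a minimal sketch of the general technique using the three phrases quoted in the table; the function name, the word-boundary handling, and the return shape are assumptions, not the actual contents of `userPromptKeywords.ts`.

```typescript
// Hypothetical sketch of regex-based frustration detection.
// The phrases come from the article; everything else is illustrative.
const FRUSTRATION_PATTERNS: readonly RegExp[] = [
  /\bwtf\b/i,
  /\bthis sucks\b/i,
  /\bso frustrating\b/i,
];

// Returns the source of each pattern that matched, so a caller could log
// which phrase fired. No "g" flag, so .test() carries no lastIndex state.
function detectFrustration(prompt: string): string[] {
  return FRUSTRATION_PATTERNS
    .filter((pattern) => pattern.test(prompt))
    .map((pattern) => pattern.source);
}

const samplePrompts = [
  "Can you add a unit test for the parser?",
  "WTF, the build broke again",
  "Honestly this sucks, it is so frustrating",
];

for (const p of samplePrompts) {
  const hits = detectFrustration(p);
  console.log(hits.length > 0 ? `frustrated (${hits.join(", ")}): ${p}` : `neutral: ${p}`);
}
```

The trade-off is the one the table points at: a regex misses sarcasm and paraphrase, and it fires on quoted text such as a pasted log line containing "wtf", but it costs microseconds and no tokens.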

The undercover mode finding is driving the most discussion. The code explicitly states there is “NO force-OFF” for the mode that strips Anthropic’s identity from commits in non-internal repositories. When a Claude Code-assisted commit lands in an open source project, nothing marks it as AI-authored. The design choice is deliberate.
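
The article describes the behavior but does not quote the code path, so here is a minimal sketch of what "strip attribution unless the repo is internal, with no force-off" means as control flow. The internal-repo check, the `Co-Authored-By:` trailer text (a common Git convention, used here only as an example), and every name below are hypothetical. The point is structural: the only input that keeps attribution is the repository being classified as internal, and no user setting appears anywhere in the decision.

```typescript
// Hypothetical sketch of the control flow the article describes.
// None of these names come from the leaked source.
interface CommitContext {
  repoRemote: string;              // e.g. "github.com/some-org/some-repo"
  internalOrgs: readonly string[]; // orgs treated as "internal" (assumed)
}

// Hypothetical attribution trailer; the article does not quote the real text.
const ATTRIBUTION_TRAILER = "Co-Authored-By: AI Assistant <assistant@example.com>";

function isInternalRepo(ctx: CommitContext): boolean {
  return ctx.internalOrgs.some((org) => ctx.repoRemote.includes(`/${org}/`));
}

// Undercover mode is on for every non-internal repo. Note what is missing:
// there is no user flag or setting that forces it off.
function undercoverEnabled(ctx: CommitContext): boolean {
  return !isInternalRepo(ctx);
}

function finalizeCommitMessage(body: string, ctx: CommitContext): string {
  const message = body.trimEnd();
  if (undercoverEnabled(ctx)) {
    return message; // attribution dropped: the commit reads as human-written
  }
  return `${message}\n\n${ATTRIBUTION_TRAILER}`;
}

const openSource: CommitContext = {
  repoRemote: "github.com/someone/open-project",
  internalOrgs: ["example-internal"],
};
console.log(finalizeCommitMessage("Fix off-by-one in parser", openSource));
```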

Why Is the Claude Code Leak a Bigger Deal Than It Looks?

The most significant damage from this leak is not the exposed code but the exposed product strategy. Competitors can now anticipate KAIROS, anti-distillation techniques, and the direction of Anthropic’s developer tooling. Code can be refactored; strategic surprise cannot be un-leaked.

The timing makes this worse. Just 10 days before the leak, Anthropic sent legal threats to OpenCode, demanding that it remove built-in Claude authentication because third-party tools were accessing Opus-level reasoning at subscription rates instead of pay-per-token pricing.

The native client attestation feature makes more sense in that context. It is not just security engineering; it is business model enforcement baked into the Bun runtime layer.
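
Here is a minimal sketch of the general idea behind hash-based client attestation: the client attaches a keyed hash of each request, and the server recomputes it and compares. It uses HMAC-SHA256 from Node's built-in `crypto` module; the embedded key, the `timestamp.body` message format, the five-minute skew window, and the function names are all assumptions. The article says only that a Bun/Zig-level hash is involved, not which algorithm or key handling Claude Code uses.

```typescript
import { createHmac, timingSafeEqual } from "node:crypto";

// Assumption: a secret compiled into the official client binary.
// In this simplified model, anyone who extracts it can forge tags.
const CLIENT_KEY = "hypothetical-embedded-secret";

// Client side: tag the request with an HMAC-SHA256 over "timestamp.body".
function signRequest(body: string, timestampMs: number): string {
  return createHmac("sha256", CLIENT_KEY)
    .update(`${timestampMs}.${body}`)
    .digest("hex");
}

// Server side: reject stale timestamps, then recompute and compare in constant time.
function verifyRequest(
  body: string,
  timestampMs: number,
  tag: string,
  nowMs: number,
  maxSkewMs = 5 * 60 * 1000,
): boolean {
  if (Math.abs(nowMs - timestampMs) > maxSkewMs) return false;
  const expected = Buffer.from(signRequest(body, timestampMs), "hex");
  const received = Buffer.from(tag, "hex");
  // timingSafeEqual throws on length mismatch, so check length first.
  return expected.length === received.length && timingSafeEqual(expected, received);
}

const now = Date.now();
const body = JSON.stringify({ prompt: "hello" });
const tag = signRequest(body, now);
console.log(verifyRequest(body, now, tag, now));                  // true
console.log(verifyRequest(body + " ", now, tag, now));            // false: body was altered
console.log(verifyRequest(body, now, tag, now + 10 * 60 * 1000)); // false: too old
```

Because the key in this model ships inside the client, a check like this raises the cost of impersonating the official binary rather than making impersonation impossible.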

From what I have seen in developer communities, the reaction splits cleanly. Some engineers are impressed that Anthropic is thinking seriously about anti-distillation at the infrastructure level.

Others are more focused on the undercover mode and what it implies about transparency in open source contributions. Both reactions are reasonable. The Claude Code permission issues documented by RR users earlier this year now have more context behind them.

The Bun connection is worth flagging separately. Anthropic acquired Bun in late 2025, and Bun is the runtime Claude Code runs on.

The very tool they bought to accelerate development had a known production bug that caused the exposure. That is not a scandal, but it is a lesson in acquisition risk.

What Does the Claude Code Leak Mean for You as a Developer?

If you use Claude Code, the leak changes nothing about how the tool works today. The package was patched, the source map was removed, and the API functions the same way.

What changes is your understanding of what the tool is doing behind the scenes. If you use Claude Code on open source projects, your commits have been routed through undercover mode by default.

The tool does not disclose this. Whether that concerns you is a personal call, but you were not told.

Here is how I would think about the implications depending on how you use Claude Code:

- If you use Claude Code directly: the tool is patched and works identically. What changes is your awareness that undercover mode strips Anthropic branding from your commits by default, with no way to opt out.

- If you build on Claude Code’s APIs through third-party tools: the native client attestation runs at the Bun runtime level. Anthropic actively enforced this against OpenCode. The Claude Code rate limiting behavior that has frustrated developers is part of the same enforcement layer.

- If you are watching for KAIROS: it is real enough to represent a genuine product direction, not just an experiment. An agent that runs nightly analysis, distills memory across sessions, monitors your GitHub repos via webhooks, and refreshes on a five-minute cron is a meaningfully different product from a CLI coding assistant.
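
To make the described cadence concrete, here is a minimal sketch of a scheduler that decides which jobs are due on each tick: a refresh every five minutes and a once-per-night memory consolidation in the spirit of the /dream skill. The job names, the 03:00 UTC window, and the `dueJobs` function are assumptions for illustration, and webhook handling is left out; only the five-minute cycle and the nightly run come from the article.

```typescript
// Hypothetical job names; only the five-minute cadence and the nightly
// /dream idea come from the article.
type Job = "refresh" | "dream";

const REFRESH_INTERVAL_MS = 5 * 60 * 1000;
const DREAM_HOUR_UTC = 3; // assumed nightly window

interface SchedulerState {
  lastRefreshMs: number;
  lastDreamDay: string; // YYYY-MM-DD of the last consolidation run
}

// Pure function: given the current time and state, list due jobs and the next state.
function dueJobs(nowMs: number, state: SchedulerState): { jobs: Job[]; next: SchedulerState } {
  const jobs: Job[] = [];
  const next = { ...state };
  if (nowMs - state.lastRefreshMs >= REFRESH_INTERVAL_MS) {
    jobs.push("refresh");
    next.lastRefreshMs = nowMs;
  }
  const now = new Date(nowMs);
  const day = now.toISOString().slice(0, 10);
  if (now.getUTCHours() === DREAM_HOUR_UTC && state.lastDreamDay !== day) {
    jobs.push("dream");
    next.lastDreamDay = day;
  }
  return { jobs, next };
}

// Simulate one UTC day, ticking once a minute.
let schedulerState: SchedulerState = { lastRefreshMs: 0, lastDreamDay: "" };
const dayStart = Date.UTC(2026, 3, 1, 0, 0, 0);
const counts: Record<Job, number> = { refresh: 0, dream: 0 };
for (let t = dayStart; t < dayStart + 24 * 60 * 60 * 1000; t += 60 * 1000) {
  const { jobs, next } = dueJobs(t, schedulerState);
  jobs.forEach((job) => counts[job]++);
  schedulerState = next;
}
console.log(counts); // { refresh: 288, dream: 1 }
```

Keeping the decision in a pure function makes the schedule easy to test without waiting on real timers; a long-running agent would call it from a timer loop.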

If Anthropic ships KAIROS, the best Claude Code skills available today would look modest by comparison.

What Comes Next After the Anthropic Source Leak?

Anthropic will almost certainly rebuild its build pipeline to prevent future source map leaks and review its internal security disclosure process. Two accidental exposures in one week is the kind of thing that triggers mandatory post-mortems.
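
Whatever Anthropic actually changes, one common safeguard is a release step that refuses to publish if a source map is in the package. Here is a minimal sketch of such a check, assuming you already have the list of packed files (for example, from the output of `npm pack --dry-run`); the `PackedFile` shape, the function name, and the sample files are hypothetical. It flags both `.map` files and JavaScript bundles that still carry a `//# sourceMappingURL=` comment pointing at one.

```typescript
// Hypothetical shape for one file in the package about to be published.
interface PackedFile {
  path: string;
  content?: string; // optional: file text, if the caller has it
}

// Matches the standard "//# sourceMappingURL=" (or legacy "//@") comment.
const SOURCE_MAP_COMMENT = /\/\/[#@]\s*sourceMappingURL=/;

// Returns human-readable problems; an empty array means the package looks clean.
function findSourceMapLeaks(files: readonly PackedFile[]): string[] {
  const problems: string[] = [];
  for (const file of files) {
    if (file.path.endsWith(".map")) {
      problems.push(`source map in package: ${file.path}`);
    } else if (/\.(c|m)?js$/.test(file.path) && file.content && SOURCE_MAP_COMMENT.test(file.content)) {
      problems.push(`sourceMappingURL comment in: ${file.path}`);
    }
  }
  return problems;
}

// Hypothetical package contents with both kinds of leak.
const packed: PackedFile[] = [
  { path: "package.json" },
  { path: "cli.js", content: "console.log(1);\n//# sourceMappingURL=cli.js.map\n" },
  { path: "cli.js.map" },
];

const problems = findSourceMapLeaks(packed);
console.log(problems.length === 0 ? "ok: no source maps" : problems.join("\n"));
```

In a real pipeline, a non-empty result would fail the CI step before `npm publish` runs.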

The open question is whether KAIROS was already approaching a launch window, and whether this leak accelerates or delays an announcement. Companies sometimes pull a launch forward once competitors have seen the plan.

They also sometimes delay to create distance from a bad news cycle.

What I am watching is the OpenCode situation. OpenCode’s built-in Claude authentication was removed under legal threat, and the source code now shows exactly what enforcement mechanism Anthropic used. Whether another third-party tool finds a compliant path around the attestation layer is an open technical and legal question.

The back-to-back leaks are also a pattern worth watching in enterprise sales conversations. If Anthropic is dealing with internal information security issues at this scale in a single week, procurement teams in regulated industries will ask questions. That is speculative, but it is the follow-on story I would track.