What Happened: The National Republican Senatorial Committee released an AI-generated video of a Democratic Senate candidate in March 2026, combining his real past tweets with fabricated commentary. It was one of at least five confirmed deepfake incidents across the 2026 midterms, and no federal law bans any of them.

You watched a Senate candidate read his own tweets back to you. He looked right at the camera. He commented on his own past statements.

He even laughed at one of them. The problem is he never recorded that video. A campaign committee made it without him, using AI.

This is not a hypothetical warning about where things are heading. It already happened, in March 2026, in a Senate race in Texas. And it was not an isolated incident.

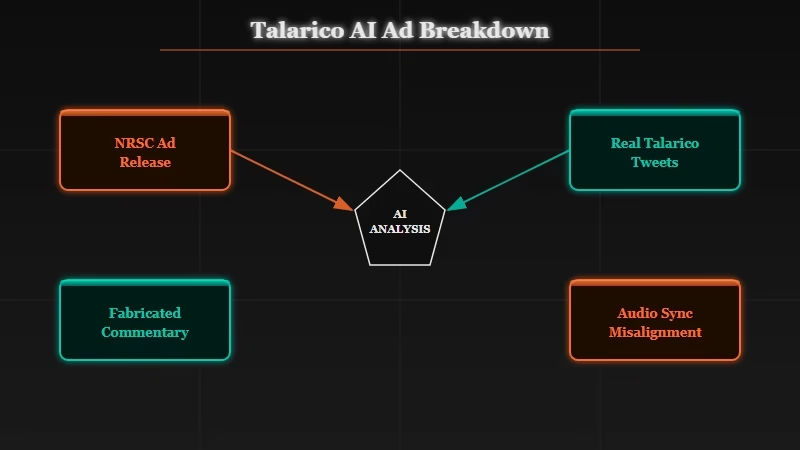

Breaking Down the Talarico Ad

The 2026 Talarico deepfake mixed real tweet quotes with completely fabricated commentary, creating a hybrid attack that a forensics expert said most viewers would not immediately recognize as fake.

The National Republican Senatorial Committee released an 85-second attack ad targeting James Talarico, the Democratic nominee for U.S. Senate in Texas. The ad showed a digital version of Talarico, in a blazer and a professional setting, appearing to read his old tweets directly into the camera.

Some of the content was real. Talarico did make those tweets, years earlier. The NRSC found statements he had made about white radicalism, transgender children, and his theological views, and used them as the raw material.

What was invented was the commentary layered on top. The synthetic Talarico added remarks like “oh, I love this one too” while reviewing his own posts. He never said those words, and the entire reaction was generated.

Hany Farid, a professor at UC Berkeley who specializes in digital forensics, examined the video after its release. His assessment was direct: “The face and voice are very good. There is a slight misalignment between audio and video, but otherwise this is hyper-realistic and I don’t think that most people would immediately know it is fake.”

One expert looking specifically for flaws could find one small audio sync issue. That was the only tell.

Five Confirmed Cases, Three States, Both Parties

At least five confirmed deepfake incidents appeared in the 2026 midterms across Texas, Georgia, and Massachusetts. All were deployed by Republican campaigns or committees, against targets in both parties, in primary and general election races alike.

The Talarico ad was the most prominent case, but it was not the only one.

In Georgia, Representative Mike Collins released a deepfake of Senator Jon Ossoff depicting him saying “I just voted to keep the government shutdown,” a statement Ossoff never made. Collins’s campaign defended it as “satire” and called it “the future of digital campaigning.”

In Texas, Senator John Cornyn released an AI-generated music video depicting his Republican primary rival Ken Paxton committing corruption and infidelity, set to a parody of the B-52s’ “Love Shack.” In January, Cornyn also posted a deepfake of Representative Wesley Hunt with no AI disclosure label at all. Specialized detection tools flagged it at a 99% probability of being AI-generated.

In Massachusetts, Republican primary candidate Brian Shortsleeve deployed voice cloning technology in a radio advertisement featuring a synthetic recreation of Governor Maura Healey’s voice making statements she never made. Radio is harder to verify than video: there is no face to study, no lip sync to check.

| Incident | Target | Creator | Type | Disclosed? |
|---|---|---|---|---|
| Texas Senate ad | James Talarico (D) | NRSC | AI face and voice video | Yes, fine print |
| Georgia Senate ad | Jon Ossoff (D) | Collins campaign | AI video | Yes, labeled “satire” |
| Texas primary music video | Ken Paxton (R) | Cornyn campaign | AI animation | Yes |
| Massachusetts radio ad | Maura Healey (D) | Shortsleeve campaign | Voice clone, audio only | Unclear |
| Texas attack video | Wesley Hunt (R) | Cornyn campaign | AI face and voice video | No |

The common thread is not a party. It is a decision that synthetic media is an acceptable tool for political campaigns. And the technology has gotten good enough that most voters cannot tell the difference.

The same AI video platforms driving creative content online have become accessible enough for political campaigns to deploy at scale. What used to require a film studio now requires a subscription and a prompt.

From what I have seen covering AI tools, the speed at which consumer-grade video generation has improved in the last 18 months is genuinely startling. This was not a 2025 problem slowly inherited by 2026. It became a 2026 problem overnight.

The Law Does Not Cover This

There is no federal law banning political deepfakes, and the 28 states with deepfake legislation focus on disclosure requirements rather than prohibition.

The TAKE IT DOWN Act, signed in May 2025, does criminalize AI-generated synthetic intimate imagery. It is a meaningful law for a specific problem. It does not touch political content.

At the state level, 28 states have passed some form of deepfake legislation. These laws emphasize disclosure, not prohibition. They require campaigns to label AI-generated content, not to avoid using it.

As the Wesley Hunt case in Texas demonstrated, even that requirement is not consistently enforced. A campaign posted an unlabeled deepfake of a sitting congressman and faced no legal consequence.

The disclosure rule has a deeper problem even when followed. A label in small text in the bottom corner of a video does not prevent the video from being clipped and reshared without context. It does not prevent a viewer from stopping after the first 30 seconds. The image sticks in memory after the disclaimer has scrolled past.

This is not a regulatory gap that is about to close. Congress has struggled for years to pass any meaningful technology legislation, and the political incentives to regulate campaign advertising are complicated when both parties stand to benefit from the same tools.

We have written before about how AI capability claims tend to be more unsettling than the headlines suggest. Political deepfakes are a case where the capability arrived before anyone was ready to govern it.

Half of Voters Say Deepfakes Already Changed Their Thinking

Survey data from the 2026 cycle found that nearly 50% of voters say deepfakes had some influence on their election decisions, even though most claim to distrust the technology.

The mechanism is not that voters believe deepfakes are real. It is subtler than that.

A synthetic video can plant a doubt. It can make a real quote feel more damaging because you have now seen the person “say it.” It can shift emotional temperature even when the factual content is already in the public record.

This matters because the defense most often offered for political deepfakes is that the underlying statements were authentic. The NRSC made this argument directly: Talarico said those things, and the deepfake just presented them dramatically. But the invented commentary, the facial expressions, the professional staging: all of it was fabricated. The format manipulates even when the facts are technically real.

The broader issue for AI development is one we have been watching closely, including in our coverage of AI safety decisions inside major labs. The tools improve faster than the frameworks for governing them, and the harm tends to become visible before the rules catch up.

How to Protect Yourself From Political Deepfakes

No consumer detection tool will reliably catch every political deepfake today, but four practical checks reduce your exposure significantly.

Detection tools exist, but they are not widely available to voters. GetReal Labs, founded by Hany Farid, offers professional-grade deepfake detection. Google’s Gemini and Hive Moderation both flagged the Wesley Hunt video at high confidence.

None of these are tools the average person reaches for before sharing a political video. Here is what practical verification looks like instead:

- Check the source first, not the content. If a video comes from a campaign account, treat it as campaign advertising. An AI-generated video from the opposing party is still campaign messaging, regardless of its technical origin.

- Find the disclosure label and read it carefully. Disclosure is now legally required in some states. If a label is present, the content is synthetic by definition. An absent label does not mean the video is authentic.

- Verify quotes independently before reacting. The most effective deepfakes mix real statements with fabricated framing. A quote being accurate does not mean the video presenting it is honest.

- Watch the audio sync closely. A slight mismatch between mouth movement and speech, even a fraction of a second, is still the most common detectable flaw in AI video.

Example: You scroll past a video on X of a senator making a shocking statement. The account posting it is a campaign PAC. Before sharing, search the exact quote in quotation marks. If no major outlet has reported it from an original source, replay a short stretch of the video, for instance around the 10-second mark, and compare the lip movement against the audio track. If it feels slightly off, it probably is.

According to researchers at UC Berkeley’s Farid Lab, even trained forensic analysts struggled to reach 100% detection accuracy on synthetic video with no visible watermark. The average voter starts from a much weaker position.

The Verdict

The 2026 midterms have established political deepfakes as standard campaign practice, with no federal law, limited state enforcement, and detection technology unavailable to most voters.

Political deepfakes are not a warning about the future of elections. They are the current reality.

Campaigns have decided synthetic media is a legitimate attack tool. The legal system has not caught up. Detection requires expertise most voters do not have.

The Talarico ad had one detectable flaw: a subtle audio sync issue that an expert had to study closely to find. The next version will not have that flaw. The trajectory is toward perfect synthetic political content at campaign-ad prices.

The honest answer to “can you trust what you see in a political ad” is no, with no near-term path back to yes.

In my view, this is not a partisan issue and should not be treated as one. The campaigns deploying deepfakes today may change next cycle. The technology will not.