My Take: Jensen Huang’s AGI claim is technically defensible and substantively misleading at the same time. He accepted a definition of AGI designed to make the claim true, and the critics who mock him are also missing the actual story. The real issue is that the people with the most money riding on AI progress now control what “AGI” means. That is more unsettling than any capability claim.

On March 23, Jensen Huang told Lex Fridman something that lit Reddit on fire: “I think we’ve achieved AGI.” Within 24 hours, the tech press had lined up to explain why he was wrong.

TechRadar ran the headline “Sorry Jensen Huang, we haven’t ‘achieved AGI.’” Researchers from MIT, Stanford, and DeepMind went on record saying current AI still hallucinates, struggles with novel reasoning, and lacks anything like genuine cross-domain understanding.

They are right. And they are arguing about the wrong thing entirely.

What actually happened on that podcast is more interesting than a wrong definition, and more troubling than a CEO saying something self-serving for chip sales. The AGI goalposts have been moved so many times now by so many commercially interested parties that the word itself has become a liability. Huang’s comment is just the most visible symptom.

The Mainstream View (And Why It Falls Short)

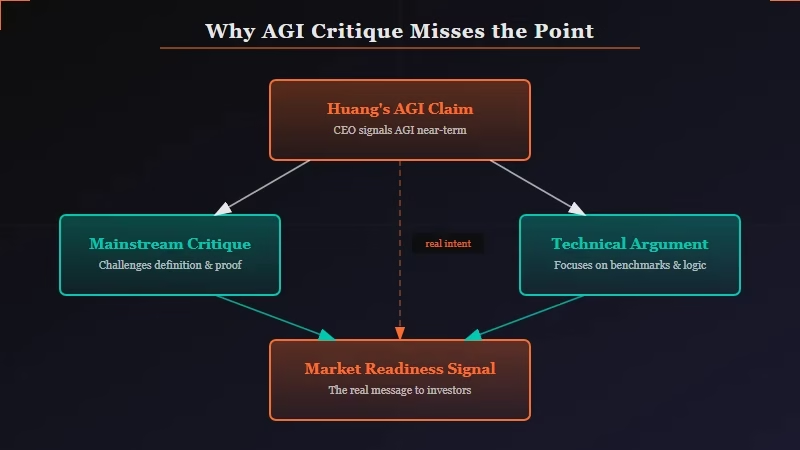

The mainstream critique of Huang’s AGI claim is correct on the facts but misses why the claim matters.

The definition Fridman offered, whether an AI could start and grow a tech company worth $1 billion, is absurdly narrow. Huang accepted it immediately, then narrowed it further by specifying that the company didn’t need to remain valuable.

“You said a billion, and you didn’t say forever,” Huang told Fridman, describing a theoretical Claude model spinning up a viral app, reaching a billion-dollar moment briefly, and then going out of business.

That scenario is not general intelligence. That is a very good autocomplete engine running at scale.

TechRadar, the AI research community, and most of the serious voices in this debate are correct: AGI, by any academically recognized definition, requires human-level performance across all cognitive tasks, including the ability to learn genuinely new domains, reason under true uncertainty, and transfer knowledge in ways current models demonstrably cannot. Current large language models still fail on tasks a six-year-old handles without effort.

The mainstream critique nails the technical reality. What it misses is that Huang was never making a technical argument. He was doing something else.

What’s Actually Happening

Huang’s AGI claim is a commercial positioning move disguised as a technical milestone, and the pattern across the industry is what should be alarming.

From what I’ve seen watching this space, no major AI CEO now uses AGI to mean what the term meant when researchers invented it. Sam Altman has defined AGI as “a system that can do the vast majority of cognitive work,” which conveniently matches what GPT-4-level models were already doing.

OpenAI has a clause in its investment agreements that triggers special provisions when AGI is “achieved,” with a definition that OpenAI’s own board controls. Anthropic’s internal documents, including materials from the Claude Mythos leak earlier this month, suggest that even Anthropic uses capability thresholds that are commercially defined rather than academically rigorous.

Jensen Huang accepted Lex Fridman’s billion-dollar-company definition because it was the easiest definition to say yes to. NVIDIA sells the chips that power every model that might eventually start that billion-dollar company.

The closer AGI appears, the more urgent GPU procurement becomes. The incentive to declare the milestone near or achieved is direct and enormous.

The way I see it, Huang’s statement is best read not as a technical claim but as a market signal. When the CEO of the company that supplies the infrastructure for AI says we’ve achieved AGI, that translates directly into enterprise budget conversations. “Even NVIDIA’s CEO thinks we’re at AGI. What’s your compute budget for next year?”

The critics who focused on whether today’s models truly satisfy the requirements of general intelligence were answering a different question than the one being asked. Huang wasn’t making a claim about capabilities. He was making a claim about the narrative.

This disconnect matters because it shapes how AI gets funded, deployed, and regulated. We have already seen how the gap between AGI hype and actual performance plays out in practice: AI agents are not working for regular users the way the headlines suggest, and the people buying the narrative are the ones who never had to try the product.

The Part Nobody Wants to Admit

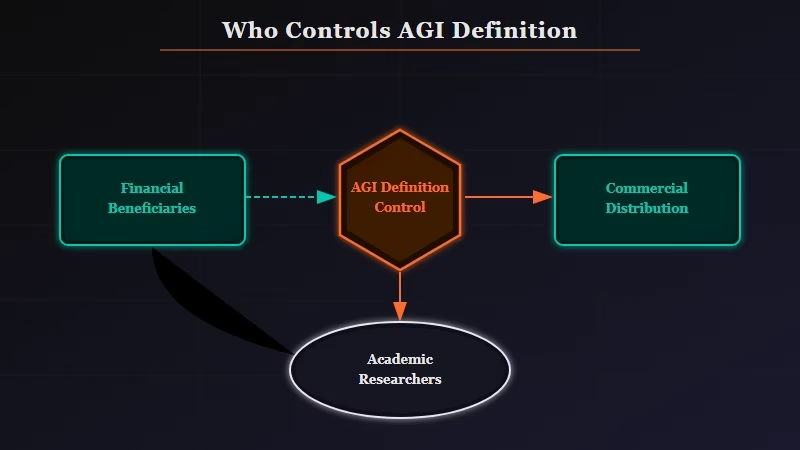

The definition of AGI is now controlled entirely by people who financially benefit from declaring it achieved as soon as possible, and that is a structural problem the research community is not equipped to solve.

This is the uncomfortable implication the mainstream coverage consistently buries. The debate is framed as “is Huang right or wrong about AGI?” The real question is who gets to answer that. Right now, the answer is: the companies selling chips, running the cloud services, and signing enterprise contracts.

Researchers at DeepMind can publish papers arguing that AGI requires cross-domain autonomous learning, and nobody changes their procurement plan. When Jensen Huang says on Lex Fridman’s podcast that it’s here, enterprise CIOs start updating slide decks. The commercial definition is winning because it has distribution.

What I find troubling here is not that Huang is lying; he almost certainly believes his narrowed definition is reasonable. It is that the people with the most coherent, financially uncorrupted view of what AGI actually means (the researchers) have no mechanism to enforce that definition against the people who control the narrative.

The AI agents winning in practice today are impressive and genuinely useful. They are not general intelligence. But the label is being applied anyway, and the label shapes investment, policy, and public expectation.

Huang also undermined himself in the same breath. He told Fridman: “The odds of 100,000 of those agents building NVIDIA is zero percent.” You cannot declare AGI achieved and in the same answer admit the AI could not replicate what you built. Those two statements are incompatible. Nobody pressed him on it.

Hot Take

The most honest thing Jensen Huang did was accept Lex Fridman’s definition instead of arguing for his own. That acceptance tells you everything: there is no definition of AGI he would push back on if saying yes served him commercially. The critics are spending energy proving he is wrong about capabilities. They should be arguing about who gets to define the benchmark. Right now, the answer is whoever sells the most chips.