Quick Answer: The AI agent hype has a serious gap: builders want autonomous systems that navigate the web, book meetings, and chain fifty API calls. Normal users want a button that does one thing and works every time. Most AI agent projects are solving the wrong problem for the wrong audience, and the data from 2026 production deployments backs this up.

Someone posted in r/AgentsOfAI last week about showing their AI agent to a friend who works at a bank.

The friend watched the demo, nodded, and said: “So it’s like a macro that sometimes works?” The builder was deflated. The banker was right.

That sentence describes the AI agent market in 2026 better than anything I’ve read in a press release. We have thousands of builders shipping increasingly sophisticated agent systems that chain tool calls, browse the web, draft emails, and execute multi-step workflows without human intervention.

And we have normal users who, when given access to those systems, mostly want to know where the button is.

The debate in AI builder communities right now is about making agents more capable. That is the wrong debate.

The real problem is that the people building agents have a fundamentally different relationship to technology than the people they’re trying to reach.

The Mainstream View (And Why It Falls Short)

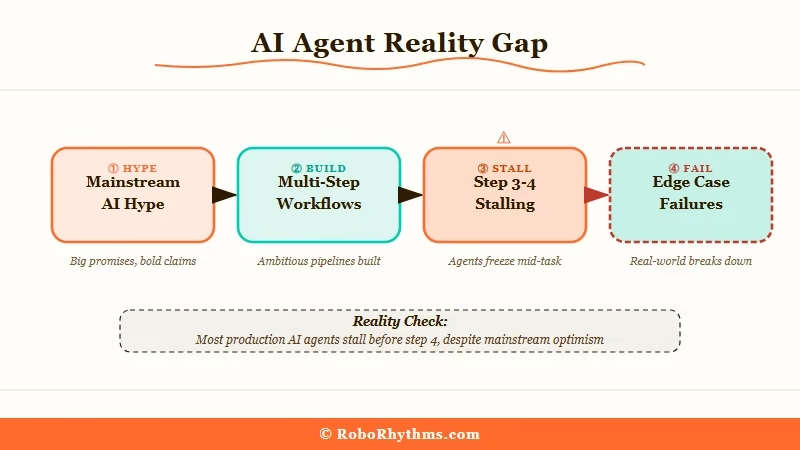

The mainstream view on AI agents is that autonomous, multi-step AI workflows will transform knowledge work across every industry, replacing manual processes and making sophisticated automation accessible to non-technical users.

Microsoft CEO Satya Nadella has been the most prominent voice here. At the 2025 Build conference, he framed it directly: “Agents are the new applications.”

The argument is that instead of users opening software and navigating menus, they will simply describe what they want and an AI agent will handle the rest: booking flights, analyzing reports, managing projects, and orchestrating across the tools they already use.

Publications from Wired to TechCrunch have published version after version of this argument since 2024. The future of work is agentic.

The solopreneur with an agent army beats the traditional employee ten to one. The agent economy will democratize tasks that previously required teams.

There are two problems with this view. The first is empirical: it’s not what’s happening in practice. The second is conceptual: it misunderstands why people use software.

People don’t use software because it is sophisticated. They use it because it is reliable. The workflow that gets adopted is the one that does the same thing every time, not the one with the highest theoretical ceiling.

Every “magical” demo that requires narration and a perfect setup is a demo that cannot be handed to a non-technical user.

According to Gartner’s 2026 AI Hype Cycle research, autonomous AI agents are at or near the Peak of Inflated Expectations, precisely the stage where the gap between marketing and production reality is widest.

What’s Actually Happening

What’s actually happening with AI agent adoption is that the narrow, constrained implementations are succeeding and the ambitious, autonomous ones are either dying quietly or stuck in demo mode.

The implementations that work in production have something in common: they do not require the user to trust the agent with ambiguous decisions. They are scripted on top of AI, not autonomous because of AI.

A Zendesk integration that routes tickets based on sentiment analysis. A sales pipeline tool that drafts follow-up emails for human review. A document parser that extracts specific fields and flags exceptions for a human to resolve. These are working.
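To make the document-parser pattern concrete, here is a minimal sketch in Python. It assumes the model has already returned a dictionary of extracted fields; the schema, the field names, and the `check_extraction` helper are all hypothetical and not taken from any real product. The point is only that the AI output is checked against fixed rules, and anything that fails goes to a person instead of being guessed at.

```python
from dataclasses import dataclass, field

# Hypothetical schema (an assumption for illustration): field name -> validator.
REQUIRED_FIELDS = {
    "invoice_number": lambda v: isinstance(v, str) and v.strip() != "",
    "total": lambda v: isinstance(v, (int, float))
    and not isinstance(v, bool)
    and v >= 0,
    "currency": lambda v: isinstance(v, str) and v in {"USD", "EUR", "GBP"},
}


@dataclass
class ParseResult:
    accepted: dict = field(default_factory=dict)    # fields that passed validation
    exceptions: list = field(default_factory=list)  # reasons a human must look

    @property
    def needs_human(self) -> bool:
        return bool(self.exceptions)


def check_extraction(extracted: dict) -> ParseResult:
    """Validate model-extracted fields; flag anything missing or invalid."""
    result = ParseResult()
    for name, is_valid in REQUIRED_FIELDS.items():
        if name not in extracted:
            result.exceptions.append(f"{name}: missing")
        elif not is_valid(extracted[name]):
            result.exceptions.append(f"{name}: invalid value {extracted[name]!r}")
        else:
            result.accepted[name] = extracted[name]
    return result
```

A caller would pass the model's output to `check_extraction` and send the document to a review queue whenever `needs_human` is true. The model never decides what counts as valid; the fixed schema does.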

Here is the reality check no one in the agent space wants to put in a blog post:

| What agents are supposed to do | What happens in most production deployments |
|---|---|
| Autonomously execute multi-step tasks | Stall or error at step 3-4, requiring human fix |
| Replace routine human workflows | Create a new workflow: managing and debugging the agent |
| Scale without human oversight | Hallucinate past a critical decision point |
| Let non-technical users build automation | Non-technical users cannot debug when it breaks |
| Handle edge cases intelligently | Confidently handle edge cases incorrectly |

The pattern that is actually winning is not “replace the human.” It is “extend the human.” The agent handles the part of the workflow that is high-volume and low-stakes.

The human handles everything else. The boundary between those two categories is where most agent projects underestimate the complexity.

What I have seen work well: agents that operate on clearly defined data schemas with human review built into the loop.

What I have seen fail: agents that are given access to live systems and expected to reason about novel situations without a fallback path.
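A fallback path can be as simple as a wrapper around each agent step. The sketch below is one way to do it, under stated assumptions: `step`, `is_acceptable`, and `escalate` are hypothetical callables you would supply yourself, and `run_with_fallback` is an illustrative name, not an API from any agent framework. The step gets a bounded number of attempts, and if none produces output that passes validation, the task is handed to a human along with the reason.

```python
from typing import Callable, Optional


def run_with_fallback(
    step: Callable[[dict], dict],              # hypothetical agent step
    task: dict,
    is_acceptable: Callable[[dict], bool],     # deterministic output check
    escalate: Callable[[dict, str], None],     # e.g. push to a human review queue
    max_attempts: int = 2,
) -> Optional[dict]:
    """Run one agent step; hand off to a human instead of guessing."""
    reason = "no attempts made"
    for attempt in range(1, max_attempts + 1):
        try:
            output = step(task)
        except Exception as exc:
            # A crashed step is recorded and retried, never silently ignored.
            reason = f"attempt {attempt} raised {type(exc).__name__}: {exc}"
            continue
        if is_acceptable(output):
            return output
        reason = f"attempt {attempt} produced output that failed validation"
    # Out of attempts: escalate with the last failure reason and return nothing.
    escalate(task, reason)
    return None
```

The design choice here is that the wrapper returns either validated output or `None`. There is no third outcome in which unvalidated output reaches a live system.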

The Part Nobody Wants to Admit

The part nobody wants to admit is that the people who are most excited about AI agents are also the people least representative of the users they’re building for.

AI builders are disproportionately comfortable with systems that sometimes fail. They debug things. They can interpret an error message, rerun a step, adjust a prompt, and try again.

When an agent behaves unexpectedly, they find it interesting rather than threatening. They are willing to invest hours getting a workflow dialed in because they can see the payoff.

Normal users are none of those things. When something fails unexpectedly, they close the app and never return.

They need the thing to work the first time, the second time, and the hundredth time, with no manual intervention and no debugging. The bar for adoption is not “impressive.” It is “never breaks.”

The result is a market where builders are optimizing for capability (more tools, longer chains, more autonomous decision-making) while users are evaluating on reliability.

Those two criteria reward completely different architectures. You cannot maximize both simultaneously, and the builders keep choosing the wrong one to prioritize.

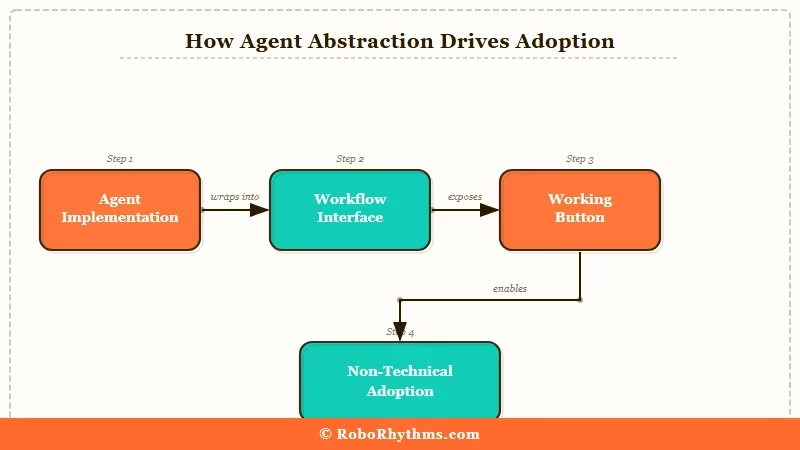

The developers who are actually getting traction with non-technical users have made peace with something uncomfortable: the agent is not the product. The workflow is the product.

The agent is an implementation detail that the user should never have to think about.

Hot Take

Every AI agent startup that positions itself as “autonomous” is building for a market of other developers, not for the actual users they pitch to investors. The business model is: raise money from people who understand what the agent does, then try to figure out how to get people who don’t understand it to pay for it. That gap never closes. The products that survive are the ones that hide the agent entirely behind a UX that just looks like a button. The agent revolution will happen, but users will never know they’re using one. The builders who accept this now are the ones who will still have companies in two years.

Frequently Asked Questions

Why do AI agents keep failing with regular users?

AI agents fail with regular users because they require tolerance for unpredictability, which regular users don’t have. When something breaks, non-technical users exit rather than debug. Successful agent products hide the agent behind a reliable interface: the user never sees the AI, just the output.

Are AI agents actually useful in 2026?

Yes, in narrow, constrained contexts. Agents that operate on defined data schemas, handle high-volume repetitive tasks, and include human-in-the-loop checkpoints for edge cases work reliably. Fully autonomous agents handling complex, ambiguous workflows remain brittle in production.

What is the difference between an AI agent and regular automation?

Regular automation follows fixed rules and breaks predictably when rules aren’t met. AI agents can reason about novel situations, but this flexibility introduces the risk of confidently wrong decisions. The trade-off between reliability (automation) and flexibility (agents) is not resolved by more capable models; it’s a design choice.

Why are so many AI agent startups failing?

Most AI agent startups are optimizing for capability: longer autonomous chains, more tools, more autonomy. The users they’re targeting optimize for reliability. Those are incompatible priorities without deliberate product design that constrains the agent and surfaces its failures gracefully.

What kinds of AI agents actually work in production?

Agents succeed when they handle high-volume, low-stakes steps in an existing workflow, operate on clearly defined data, and pass anything ambiguous to a human. The implementations that fail are those requiring the agent to make judgment calls on live systems without a reliable fallback path.

Will AI agents replace knowledge workers?

Not in the way the current hype suggests. What is more likely is that agents handle the repetitive, well-structured portions of knowledge work while humans take on more of the judgment-intensive, ambiguous, and interpersonal parts. That is an extension of what has happened with every wave of automation since the 1990s.